AI Benchmarks & Leaderboard — 2026-04-24

This week's AI leaderboard sees GPT-5.5 claiming the top Intelligence Index spot with a score of 60, narrowly ahead of Claude Opus 4.7 and Gemini 3.1 Pro Preview tied at 57, according to Artificial Analysis live rankings. Open-source models are closing the gap rapidly, with GLM-5.1 leading SWE-Bench Pro at 58.4% and Kimi K2.6 entering the top 5 closed-source rankings. A notable Forbes analysis published April 19 argues that open-source AI has moved decisively from a sideshow to a core enterprise strategy.

AI Benchmarks & Leaderboard — 2026-04-24

New Model Releases & Updates

GPT-5.5 by OpenAI

- Type: Closed-source

- Key benchmarks: Intelligence Index score of 60 (xhigh setting), 59 (high setting) — highest on Artificial Analysis leaderboard

- vs. Previous best: Surpasses GPT-5.4 (score: 57) and Claude Opus 4.7 (score: 57), previously tied for first

- What's notable: The xhigh compute setting pushes it clearly ahead of all rivals on Artificial Analysis's composite Intelligence Index; GPT-5.3 Codex (xhigh) also appears in the top 5 with a score of 54, indicating OpenAI is occupying multiple top spots simultaneously

Kimi K2.6 by Moonshot AI

- Type: Closed-source

- Key benchmarks: Intelligence Index score of 54, placing it 4th on the Artificial Analysis leaderboard

- vs. Previous best: Enters the top 5, matching GPT-5.3 Codex (xhigh)

- What's notable: Represents continued strong performance from Chinese AI labs in frontier model rankings; sits just below the OpenAI/Anthropic/Google top tier

GLM-5.1 by Zhipu AI

- Type: Open-source

- Key benchmarks: SWE-Bench Pro score of 58.4%, leading all open-source models on that coding benchmark

- vs. Previous best: Tops the open-source coding leaderboard per April 2026 tracking

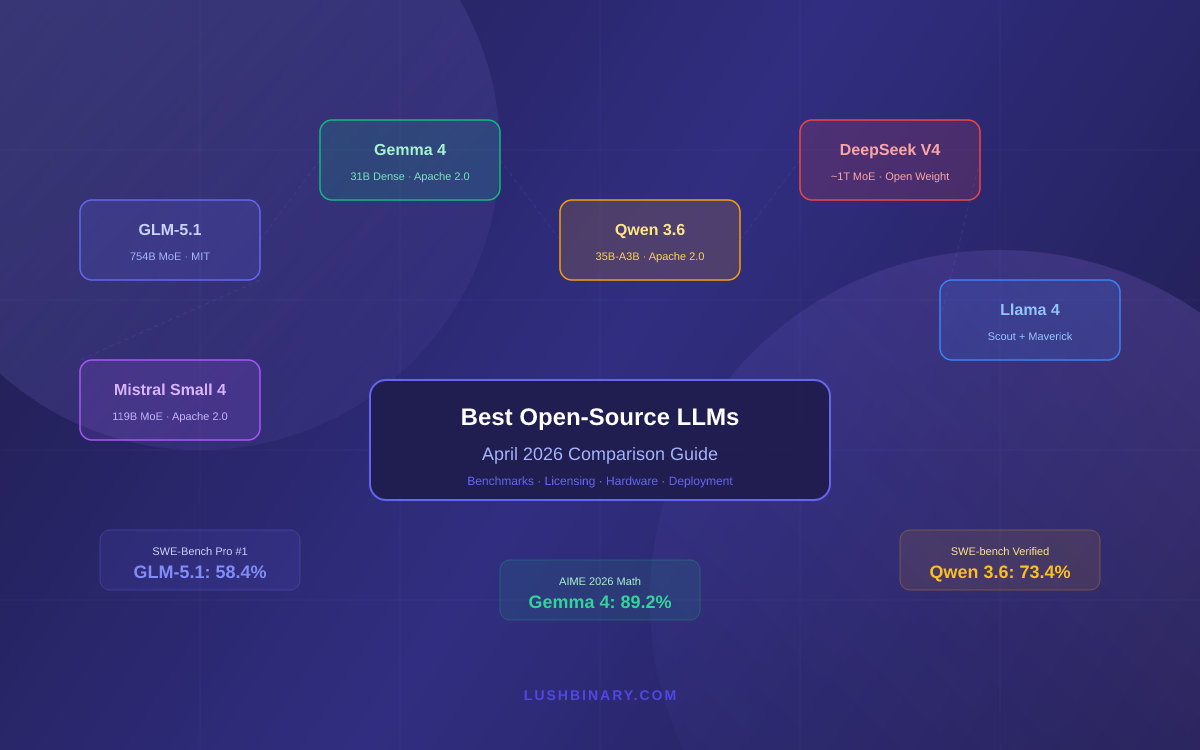

- What's notable: Zhipu's GLM family continues to lead open-source coding benchmarks; also compared favorably against Gemma 4, Qwen 3.6, Llama 4, and DeepSeek V4 in April rankings

Leaderboard Snapshot

Frontier Models (Closed-Source)

| Model | Provider | Notable Strengths | Key Score |

|---|---|---|---|

| GPT-5.5 (xhigh) | OpenAI | Top Intelligence Index; best overall composite | 60 |

| GPT-5.5 (high) | OpenAI | Strong composite, slightly lower compute | 59 |

| Claude Opus 4.7 (Adaptive Reasoning, Max Effort) | Anthropic | Reasoning-heavy tasks | 57 |

| Gemini 3.1 Pro Preview | Competitive across domains | 57 | |

| GPT-5.4 (xhigh) | OpenAI | Previous top model | 57 |

| Kimi K2.6 | Moonshot AI | Strong challenger from Chinese AI lab | 54 |

| GPT-5.3 Codex (xhigh) | OpenAI | Coding-specialized frontier model | 54 |

Open-Source Leaders

| Model | Parameters | Notable Strengths | Key Score |

|---|---|---|---|

| GLM-5.1 | Not disclosed | SWE-Bench Pro leader for coding | 58.4% SWE-Bench Pro |

| Qwen 3.5 0.8B (Reasoning) | 0.8B | Most affordable: $0.02/1M tokens; fastest small reasoning model | Most affordable |

| Gemma 3n E4B Instruct | ~4B effective | Efficiency-optimized; $0.03/1M tokens | Near-top value |

| Mercury 2 | Not disclosed | Fastest model at 716.5 tokens/sec | 716.5 t/s |

| Granite 4.0 H Small | Not disclosed | Second fastest at 453.0 tokens/sec | 453.0 t/s |

| Granite 3.3 8B (Non-reasoning) | 8B | Third fastest at 379.5 tokens/sec | 379.5 t/s |

| Kimi K2.5 | Not disclosed | Top open-source coding performance | Leading coding |

Benchmark Deep Dive

Open-Source AI's Strategic Moment: Closing the Gap on Closed Models

A Forbes analysis published April 19, 2026 argues that the question is no longer whether open-source AI matters, but whether companies can still afford to treat it as secondary. This framing resonates with the hard benchmark data emerging this week.

The April 2026 open-source rankings tell a striking story. GLM-5.1 now scores 58.4% on SWE-Bench Pro — a demanding software engineering evaluation designed to resist saturation — putting it ahead of Gemma 4, Qwen 3.6, Llama 4, and DeepSeek V4. For context, SWE-Bench Pro tasks models with resolving real GitHub issues, making it a meaningful proxy for production coding utility rather than synthetic test performance.

Speed metrics add another dimension. Mercury 2 clocks 716.5 tokens per second on the Artificial Analysis leaderboard, followed by Granite 4.0 H Small at 453.0 t/s and Granite 3.3 8B at 379.5 t/s. These are open-weight models matching or exceeding the inference speed of many closed APIs. Meanwhile, Qwen 3.5 0.8B holds the cost crown at $0.02 per million tokens — a price point that makes experimentation effectively free for most organizations.

For practitioners, the practical implication is clear: the open-source tier has matured to the point where, for coding, speed-sensitive, or cost-constrained workloads, closed frontier models are no longer the default answer. The intelligence gap at the very top (GPT-5.5 at 60 vs. GLM-5.1's open-source leadership) remains real, but the functional gap for everyday deployment has narrowed substantially.

Analysis & Trends

-

State of the art: GPT-5.5 leads the closed-source composite Intelligence Index (score: 60). For coding, GLM-5.1 leads open-source SWE-Bench Pro at 58.4%. For speed, Mercury 2 (716.5 t/s) dominates inference throughput across all model categories.

-

Open vs. Closed gap: The gap at the absolute frontier persists — GPT-5.5 at 60 is meaningfully ahead of the best open-source models in composite reasoning. However, in specific verticals like coding (GLM-5.1) and cost-efficiency (Qwen 3.5 0.8B at $0.02/M tokens), open-source options are now enterprise-grade alternatives. The Forbes analysis from April 19 formalizes what practitioners have been observing for months.

-

Cost-performance: Qwen 3.5 0.8B holds the most affordable position at $0.02 per million tokens (blended), followed by Gemma 3n E4B Instruct at $0.03. These prices represent a continued commoditization of capable small models.

-

Emerging patterns: Speed differentiation is becoming a new competitive axis — Mercury 2 at 716.5 t/s is nearly 60% faster than the third-place Granite 3.3 8B (379.5 t/s). Chinese AI labs (Moonshot's Kimi K2.6 at #4 closed-source, Zhipu's GLM-5.1 leading open-source coding) continue to punch well above their weight in frontier rankings.

What to Watch Next

-

GPT-5.5 vs. Claude Opus 4.7 head-to-head on domain-specific benchmarks: The composite Intelligence Index has GPT-5.5 ahead, but Claude Opus 4.7 with Adaptive Reasoning may close or reverse that gap on reasoning-heavy or long-context tasks. Watch for third-party evaluations breaking down performance by task type.

-

GLM-5.1 SWE-Bench Pro trajectory: If GLM-5.1 continues improving on coding benchmarks, it could become the de facto open-source default for software engineering agents — a category with significant commercial implications.

-

Enterprise open-source adoption signals: With Forbes now explicitly framing open-source AI as a strategic imperative rather than a niche choice, watch for enterprise procurement and deployment data that tests whether this thesis holds in practice — particularly as IBM's Granite 4.0 family shows strong speed numbers that could appeal to on-premises deployments.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.