AI Benchmarks & Leaderboard — 2026-04-21

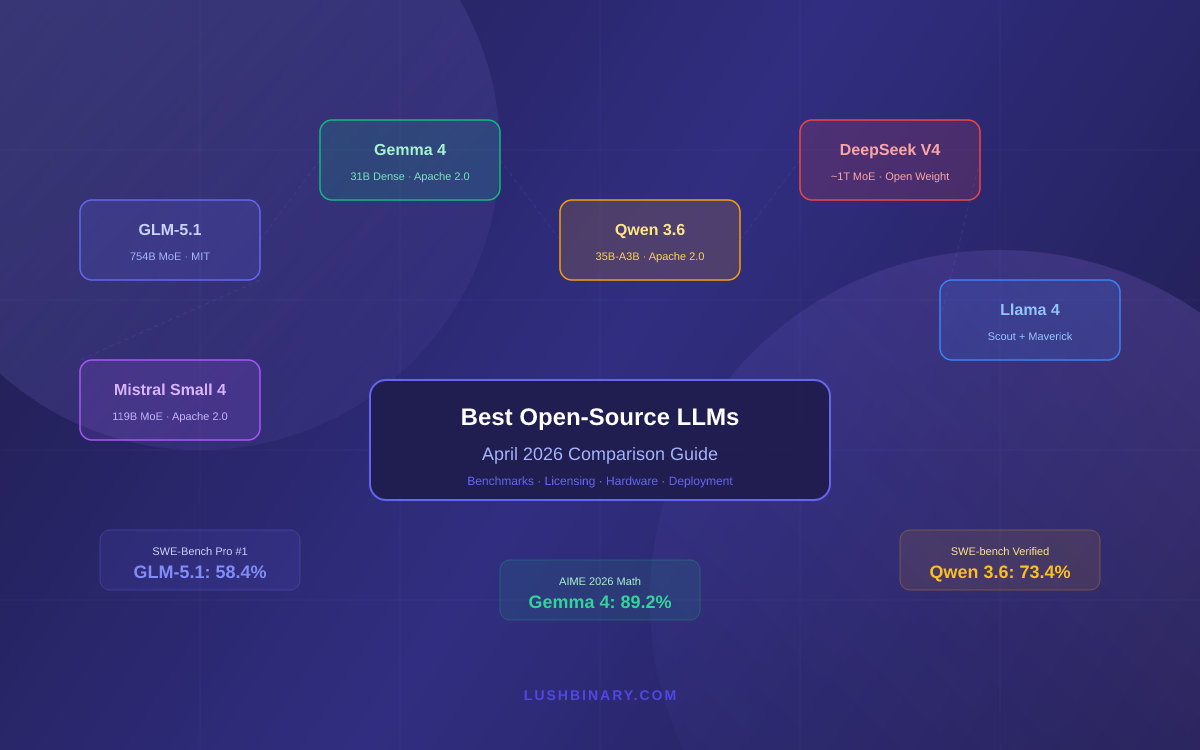

This week's AI landscape is defined by a flurry of frontier model releases, with Claude Opus 4.7, Gemini 3.1 Pro, and GPT-5.4 trading blows at the top of intelligence leaderboards. Open-source contenders, particularly GLM-5.1, Qwen 3.6, and Gemma 4, are closing the gap on proprietary giants, with GLM-5.1 leading all open-source models on SWE-Bench Pro at 58.4%. Speed benchmarks tell a separate story, with Mercury 2 reaching 658.8 tokens/second.

New Model Releases & Updates

Claude Opus 4.7 (max) by Anthropic

- Type: Closed-source, frontier

- Key benchmarks: Ranked as one of the two highest-intelligence models on the Artificial Analysis leaderboard (alongside Gemini 3.1 Pro Preview)

- vs. Previous best: Surpasses earlier Claude Opus versions; now tied at the top with Gemini 3.1 Pro Preview for overall intelligence score

- What's notable: The "(max)" configuration targets maximum reasoning depth; appears on the GDPval-AA leaderboard alongside GPT-5.4 and other frontier models

Gemini 3.1 Pro Preview by Google

- Type: Closed-source, frontier

- Key benchmarks: Co-leads the Artificial Analysis intelligence leaderboard with Claude Opus 4.7 (max)

- vs. Previous best: Advances over Gemini 3 Pro; preview status suggests further refinement expected

- What's notable: Also listed in fast-inference configurations; Gemini 2.5 Flash-Lite remains one of the fastest models at 349.6 t/s

GLM-5.1 by Zhipu AI

- Type: Open-source

- Key benchmarks: SWE-Bench Pro score of 58.4%, leading all open-source models on that coding benchmark

- vs. Previous best: Beats Gemma 4, Qwen 3.6, Llama 4, and DeepSeek V4 on SWE-Bench Pro

- What's notable: Tops the April 2026 open-source LLM ranking for coding; fully open weights with permissive licensing

Mercury 2 by Inception AI

- Type: Closed-source

- Key benchmarks: Fastest model at 658.8 tokens/second on Artificial Analysis speed benchmarks

- vs. Previous best: Granite 4.0 H Small follows at 410.6 t/s, so Mercury 2 leads by roughly 1.6x

- What's notable: Purpose-built for high-throughput inference; speed advantage is significant for production use cases requiring real-time responsiveness
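To put the speed figures in perspective, here is a minimal sketch that turns the throughput numbers quoted in this report into speedup ratios and idealized generation times. The function names are illustrative, and the time estimate assumes a constant output rate with no time-to-first-token, queuing, or network latency, so real-world numbers will differ.

```python
# Throughput figures (tokens/second) as quoted in this report.
SPEEDS_TPS = {
    "Mercury 2": 658.8,
    "Granite 4.0 H Small": 410.6,
    "Gemini 2.5 Flash-Lite": 349.6,
}


def speedup(fast: str, slow: str) -> float:
    """Ratio of the two quoted throughputs."""
    return SPEEDS_TPS[fast] / SPEEDS_TPS[slow]


def seconds_to_generate(model: str, n_tokens: int) -> float:
    """Idealized generation time: assumes a constant output rate and
    ignores time-to-first-token, queuing, and network latency."""
    return n_tokens / SPEEDS_TPS[model]


if __name__ == "__main__":
    for name in SPEEDS_TPS:
        print(f"{name}: {seconds_to_generate(name, 1000):.2f} s per 1,000 tokens")
    ratio = speedup("Mercury 2", "Granite 4.0 H Small")
    print(f"Mercury 2 vs Granite 4.0 H Small: {ratio:.2f}x")
```

Under these assumptions, a 1,000-token response takes about 1.5 seconds on Mercury 2 versus about 2.4 seconds on Granite 4.0 H Small.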

Gemma 4 by Google (Open-Source)

- Type: Open-source

- Key benchmarks: Among top open-source models in April 2026 rankings across reasoning and coding tasks

- vs. Previous best: Represents a significant step up from the Gemma 3 family; competitive with Qwen 3.6 and Llama 4

- What's notable: Available as open weights; strong local inference performance documented

Leaderboard Snapshot

Frontier Models (Closed-Source)

| Model | Provider | Notable Strengths | Key Score |
|---|---|---|---|
| Claude Opus 4.7 (max) | Anthropic | Highest intelligence (tied #1) | Top intelligence tier |
| Gemini 3.1 Pro Preview | Google | Highest intelligence (tied #1) | Top intelligence tier |
| GPT-5.4 (xhigh) | OpenAI | High intelligence, strong coding | High intelligence tier |
| GPT-5.3 Codex (xhigh) | OpenAI | Coding-focused, high intelligence | High intelligence tier |
| Mercury 2 | Inception AI | Fastest inference at 658.8 t/s | 658.8 tokens/sec |
| Gemini 2.5 Flash-Lite | Google | Speed + efficiency | 349.6 tokens/sec |

Open-Source Leaders

| Model | Parameters | Notable Strengths | Key Score |
|---|---|---|---|
| GLM-5.1 | Not disclosed | SWE-Bench Pro leader | 58.4% SWE-Bench Pro |
| Qwen 3.6 | ~397B (MoE) | Strong reasoning, runs locally | Top open-source tier |
| Gemma 4 | Not disclosed | Balanced reasoning + coding | Top open-source tier |
| Llama 4 | Not disclosed (MoE) | Multi-modal, broad capability | Top open-source tier |
| DeepSeek V4 | Not disclosed | Coding + reasoning | Top open-source tier |
| Granite 4.0 H Small | Not disclosed | Fastest open inference at 410.6 t/s | 410.6 tokens/sec |

Benchmark Deep Dive

GLM-5.1 and the SWE-Bench Pro Surge

The most consequential benchmark story this week is GLM-5.1's 58.4% score on SWE-Bench Pro — the more rigorous successor to the original SWE-Bench software engineering evaluation. SWE-Bench Pro tests a model's ability to resolve real GitHub issues, requiring genuine code understanding, patch generation, and test-passing rather than stylistic code completion.
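As a rough illustration of what a score like 58.4% means, here is a minimal sketch of how a resolve rate can be computed from per-task outcomes. This is not the official SWE-Bench Pro harness: the `TaskResult` record, its fields, and the toy data are all hypothetical, and the real benchmark's pass criteria may be more detailed.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class TaskResult:
    """Outcome of one benchmark task (hypothetical record, not the official schema)."""
    task_id: str
    patch_applied: bool  # the generated patch applied cleanly to the repository
    tests_passed: bool   # the task's required tests passed after applying the patch


def resolve_rate(results: Iterable[TaskResult]) -> float:
    """Percentage of tasks where the patch applied and the tests passed."""
    results = list(results)
    if not results:
        return 0.0
    resolved = sum(1 for r in results if r.patch_applied and r.tests_passed)
    return 100.0 * resolved / len(results)


# Toy data for illustration only; these are not real benchmark results.
TOY_RESULTS = [
    TaskResult("task-1", True, True),
    TaskResult("task-2", True, False),
    TaskResult("task-3", False, False),
    TaskResult("task-4", True, True),
    TaskResult("task-5", True, True),
]

if __name__ == "__main__":
    print(f"{resolve_rate(TOY_RESULTS):.1f}%")  # prints 60.0%
```

The point of the sketch is that a task counts only when the patch both applies and passes the tests, which is why this style of score is harder to inflate than code-completion metrics.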

What makes 58.4% remarkable is context: earlier this year, clearing 40% on the original SWE-Bench was considered competitive; the "Pro" variant is substantially harder, meaning GLM-5.1's score represents genuine frontier-level software engineering capability from an open-weight model. Zhipu AI's achievement narrows what was previously a wide moat enjoyed by closed-source models like GPT-5.4 and Claude Opus 4.x on agentic coding tasks.

For practitioners, this matters. SWE-Bench Pro scores are intended to reflect real-world utility in autonomous coding pipelines such as bug fixing, PR review agents, and CI/CD automation. An open-weight model at this level can be fine-tuned, hosted privately, and deployed without per-token API fees, which changes the economics of AI-assisted software development.

The broader pattern is a compression of the performance gap: Gemma 4, Qwen 3.6, and Llama 4 also rank competitively, suggesting that the open-source ecosystem has reached an inflection point where multiple models can credibly handle production software engineering tasks.

Analysis & Trends

- State of the art: Claude Opus 4.7 (max) and Gemini 3.1 Pro Preview share the intelligence crown per Artificial Analysis; GPT-5.4 and GPT-5.3 Codex lead coding-specific tasks among closed models; Mercury 2 dominates speed rankings at 658.8 t/s.

- Open vs. Closed gap: The gap is narrowing meaningfully. GLM-5.1 at 58.4% on SWE-Bench Pro demonstrates that open-weight models are now competitive with frontier closed models on hard software engineering benchmarks. Qwen 3.6's reported ability to run as a 397B MoE model locally at 5.5+ tokens/sec on consumer hardware suggests that model size is becoming less of a barrier to local deployment.

- Cost-performance: Mercury 2 (658.8 t/s) and Granite 4.0 H Small (410.6 t/s) lead on throughput, while Qwen3.5 0.8B (Reasoning) is reported as the most affordable at $0.02 per 1M tokens, indicating growing maturity in the efficiency tier below frontier.

- Emerging patterns: The "reasoning" variant pattern is proliferating: Gemini 2.5 Flash-Lite (Reasoning), Qwen3.5 0.8B (Reasoning), and DeepSeek V3.2 (Reasoning) all appear in leaderboards as distinct model configurations. This suggests reasoning toggles and hybrid inference modes are becoming standard product features rather than special-case offerings.
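The cost and speed figures above are easier to compare once converted into dollars and seconds for a concrete workload. The sketch below does that arithmetic using the $0.02 per 1M tokens, 5.5 tokens/sec, and 658.8 tokens/sec figures quoted in this report. The workload sizes (50M tokens, 1,000 tokens) and function names are illustrative assumptions, and the estimate ignores any split between input and output pricing.

```python
def cost_usd(n_tokens: int, price_per_million_usd: float) -> float:
    """Cost of processing n_tokens at a flat per-million-token price."""
    return n_tokens / 1_000_000 * price_per_million_usd


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Idealized time to emit n_tokens at a constant output rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    # 50M tokens at the quoted $0.02 per 1M tokens.
    print(f"${cost_usd(50_000_000, 0.02):.2f}")            # prints $1.00
    # A 1,000-token answer at the quoted 5.5 tokens/sec local rate.
    print(f"{generation_seconds(1_000, 5.5):.0f} s")        # prints 182 s
    # The same answer at the quoted 658.8 tokens/sec.
    print(f"{generation_seconds(1_000, 658.8):.1f} s")      # prints 1.5 s
```

Seen this way, 5.5 tokens/sec is workable for batch or background jobs, but a 1,000-token reply takes about three minutes, far from the interactive speeds of the fastest hosted models.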

What to Watch Next

- GLM-5.1 broader benchmarks: SWE-Bench Pro is one signal; watch for GPQA and MATH evaluations of GLM-5.1 to confirm whether its coding strength generalizes to scientific reasoning, which would cement it as a true frontier open-source model.

- Gemini 3.1 Pro full release: The current "Preview" label on Gemini 3.1 Pro suggests Google intends a broader launch. The transition from preview to GA typically brings pricing announcements, context window details, and updated benchmark cards that could shift leaderboard positions.

- ARC-AGI-2 results for new models: Multiple models released this cycle (Claude Opus 4.7, GLM-5.1, Gemma 4) have not yet been widely benchmarked on ARC-AGI-2, one of the hardest publicly available reasoning evaluations. Results on this benchmark would provide a clearer picture of genuine abstract reasoning capability across the new generation of frontier and open-source models.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.