AI Coding Assistants — 2026-04-26

Platform engineering teams are wrestling with a new governance challenge: who actually "owns" Claude Code, Cursor, and OpenAI Codex across the engineering org? A detailed guide published April 24 by Portkey lays out how platform teams should approach managing coding agents at scale — the dominant conversation in enterprise developer circles right now. Meanwhile, GitHub Copilot's supported-models documentation was updated within the past 6 hours, signaling continued rapid model churn at Microsoft's AI coding flagship.

Today's Lead Story

Platform Teams Scrambling to Govern Claude Code, Cursor, and Codex at Scale

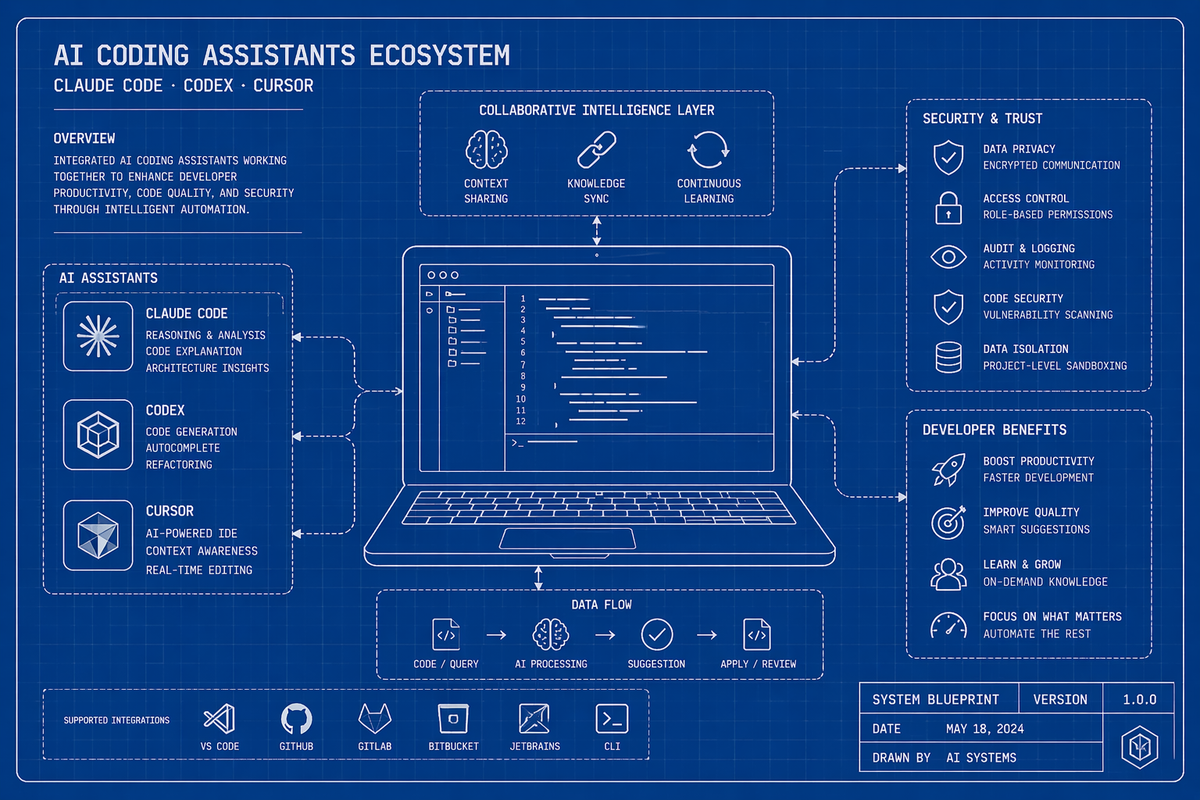

- What happened: Portkey published an in-depth guide on April 24 titled "Who owns Claude Code at your company? A platform team's guide to managing coding agents at scale," arguing that as Claude Code, Cursor, and OpenAI Codex proliferate across engineering organizations, context management and governance — not the tools themselves — are becoming the key differentiator between high- and low-performing teams.

- Who it affects: Platform engineers, engineering managers, and CTOs at companies where multiple AI coding assistants are deployed across different teams or individuals, particularly those managing cost, security, and context-sharing at scale.

- Why it matters: The "tool harness is converging," per the guide, meaning the assistants themselves are growing more similar in capability. What separates teams is how well they manage context — shared knowledge, repo conventions, and configuration — across agents. This reframes the AI coding ROI conversation from "which tool?" to "how do we govern and contextualize all of them?" — a major shift in how enterprise buyers will evaluate these products.

Release & Changelog Radar

- GitHub Copilot Supported Models Update (today, within 6 hours): GitHub's official Copilot supported-models documentation page was updated as of this morning, reflecting ongoing additions and changes to the model roster available inside Copilot. Developers should check the page directly to see which new models are now available for chat, completions, and agentic tasks; the model selection inside Copilot has been expanding rapidly. Practical impact: if you've been defaulting to one model inside Copilot, now is a good time to audit what's available and test alternatives on your workload.

- Best AI Coding Tools Roundup, April 2026 Edition (1 day ago): A freshly published survey from Bit Guardian's engineering blog covers Augment Code, Cursor, GitHub Copilot, Claude Code, and Replit as the recommended tools heading into late April 2026, with emphasis on shipping speed and productivity benchmarks. Practical impact: a useful current-state snapshot for teams evaluating their stack, though individual benchmarks should be verified against your own workload.

- Portkey Platform Guide — April 24, 2026: Beyond the governance angle covered in the lead story, the Portkey guide includes concrete recommendations for platform teams on how to standardize context files (e.g., CLAUDE.md, AGENTS.md, and Copilot instruction files) across the org, and how to route requests across different agents depending on task type. — Practical impact: organizations running more than one coding assistant now have a structured playbook for cross-agent context management.
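As a rough illustration of what standardizing context files could look like in practice, the sketch below audits a set of local repo checkouts for the three context files named above. The file locations are assumptions (CLAUDE.md and AGENTS.md at the repo root, `.github/copilot-instructions.md` for Copilot), and the function names are hypothetical; nothing here is taken from the Portkey guide itself.

```python
from pathlib import Path

# Assumed locations: CLAUDE.md and AGENTS.md at the repo root, and
# .github/copilot-instructions.md for Copilot. Adjust to your org's layout.
CONTEXT_FILES = ("CLAUDE.md", "AGENTS.md", ".github/copilot-instructions.md")


def audit_context_files(repo_root, expected=CONTEXT_FILES):
    """Return the expected context files that are missing or empty in a repo."""
    root = Path(repo_root)
    missing = []
    for rel in expected:
        path = root / rel
        if not path.is_file() or path.stat().st_size == 0:
            missing.append(rel)
    return missing


def audit_org(repo_roots):
    """Map each repo path to its missing context files, skipping clean repos."""
    report = {}
    for repo in repo_roots:
        missing = audit_context_files(repo)
        if missing:
            report[str(repo)] = missing
    return report
```

A platform team might run `audit_org` over its checked-out repos in CI and open a ticket for each entry in the report. Treating an empty file as missing is a design choice made here, on the assumption that a placeholder file gives an agent no usable context.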

Benchmark & Performance Watch

No new benchmark results published within the past 24 hours were surfaced in today's research. The following reflects the most recently known standings:

- SWE-bench Verified (current leaderboard): The competitive landscape on SWE-bench has been shifting rapidly in 2026, with top-tier agents from Anthropic (Claude Code), OpenAI (Codex/GPT-based agents), and Cursor's Composer 2 all trading positions. A compendium of 50+ agent benchmarks maintained at github.com/philschmid/ai-agent-benchmark-compendium covers the SWE-bench, Terminal-Bench 2.0, LiveCodeBench Pro, and FrontierMath categories. No score update was confirmed within the 24-hour window, so check the live leaderboard for current numbers.

- Institute of Coding Agents, March 2026 Benchmark Report: The most recent structured benchmark report from the Institute of Coding Agents (dated March 19, 2026) introduces Terminal-Bench 2.0 (89 real-world agentic terminal problems, part of Artificial Analysis Intelligence Index v4.0) and LiveCodeBench Pro, a harder competitive programming variant with an Elo-based leaderboard. These are now the de facto hard-mode benchmarks for agentic coding evaluation.

Developer Sentiment Pulse

- Portkey guide community signal: The framing in Portkey's April 24 guide ("the harness is converging, context is what separates teams") is generating discussion among platform engineers who are tired of the "which tool is best" debate and are now focused on org-level tooling strategy. This suggests that enterprise teams are moving past tool selection and into operational maturity.

- Bit Guardian engineering blog: A fresh "best tools" post published April 25 specifically calls out Augment Code alongside the usual Cursor/Copilot/Claude Code trio as a recommended tool for shipping code faster. That is notable because Augment Code rarely makes these lists alongside the category leaders. It suggests that the mid-tier of coding assistants is gaining enough credibility to appear in mainstream recommendation lists.

- GitHub Copilot docs churn: The fact that Copilot's supported-models page was touched within the last 6 hours on a weekend reflects the breakneck pace of model additions at GitHub/Microsoft. Developers active in Copilot forums have noted that model availability can change week-to-week without prominent announcements, creating confusion about which model to pin for production workflows. This points to friction between rapid iteration by vendors and the need for stability in developer toolchains.

Deep Dive: Governing AI Coding Agents at Enterprise Scale

The Portkey guide published April 24 makes a provocative but well-supported argument: the coding assistant wars are essentially over at the tool layer. Claude Code, Cursor, and OpenAI Codex are all competent enough that the marginal capability difference between them is shrinking. The real battleground is context — how much relevant information each agent has about your codebase, conventions, team standards, and task history.

The guide's prescription for platform teams centers on three operational pillars. First, standardize context files: CLAUDE.md, AGENTS.md, and Copilot instruction files should be treated as first-class engineering artifacts, version-controlled and reviewed like code. Second, own the routing layer: platform teams should decide which agent handles which class of task (e.g., Claude Code for large refactors, Copilot for inline completions) rather than leaving it to individual developer preference. Third, instrument everything: agent invocations should be logged, costed, and tied back to business outcomes — not treated as unmetered developer utilities.
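The second and third pillars (routing and instrumentation) can be sketched together in a few lines. This is a minimal illustration under stated assumptions, not the guide's implementation: the two routing pairs echo the guide's own example, while the default agent, the `AgentRouter` class, the agent identifiers, and the per-token prices are all hypothetical placeholders rather than real vendor pricing or APIs.

```python
from dataclasses import dataclass, field

# Hypothetical routing table: task class -> agent name. The two pairings
# echo the guide's example; DEFAULT_AGENT is an assumption for this sketch.
ROUTES = {
    "large_refactor": "claude-code",
    "inline_completion": "copilot",
}
DEFAULT_AGENT = "cursor"

# Placeholder prices in USD per 1K tokens. These are NOT real vendor prices.
PRICE_PER_1K_TOKENS = {"claude-code": 0.01, "copilot": 0.002, "cursor": 0.005}


@dataclass
class Invocation:
    team: str
    task_class: str
    agent: str
    tokens: int
    cost_usd: float


@dataclass
class AgentRouter:
    log: list = field(default_factory=list)

    def route(self, task_class):
        """Pick the agent for a task class, falling back to the default."""
        return ROUTES.get(task_class, DEFAULT_AGENT)

    def record(self, team, task_class, tokens):
        """Route a task, compute its placeholder cost, and append it to the log."""
        agent = self.route(task_class)
        cost = tokens / 1000 * PRICE_PER_1K_TOKENS[agent]
        inv = Invocation(team, task_class, agent, tokens, cost)
        self.log.append(inv)
        return inv

    def cost_by_team(self):
        """Sum logged cost per team so spend can be reported back."""
        totals = {}
        for inv in self.log:
            totals[inv.team] = totals.get(inv.team, 0.0) + inv.cost_usd
        return totals
```

In a real deployment the log would go to a metrics store rather than an in-memory list, and token counts would come from each vendor's usage reporting. The point of the sketch is only that routing and cost attribution can live in one place that the platform team owns.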

The second-order implication is significant for vendors: if platform teams commoditize the tool layer and compete on context infrastructure, pricing pressure on per-seat licensing will intensify. The teams that build the best internal context tooling may find they can swap underlying models freely — which is exactly what vendors like Anthropic and OpenAI are trying to prevent through deep IDE integrations and proprietary memory features.

Business & Funding Moves

No funding rounds, acquisitions, or pricing changes were confirmed within the 24-hour window ending April 26. The following are the most recent known figures for context:

- Cursor (Anysphere): Raised $2.3B in November 2025, five months after its previous round. Reached approximately $300M ARR in early 2025 and was reported in a $9B valuation range. No new funding news confirmed in the past 24 hours, but watch for announcements given the company's aggressive growth trajectory.

- Emergent (India): TechCrunch reported April 15 that Indian vibe-coding startup Emergent launched "Wingman," an AI agent that manages and automates tasks through WhatsApp and Telegram. While not a direct coding-assistant play, it signals that the agentic coding pattern is spreading globally and into messaging-native interfaces. It is worth watching as a distribution vector for developer tooling in emerging markets.

What to Watch Next

- GitHub Copilot model roster: With docs updated this morning, a formal blog announcement about new supported models — potentially including a new Anthropic or Google model inside Copilot — could drop any day. Watch the GitHub blog and Copilot changelog for a formal post.

- SWE-bench Verified leaderboard movement: With Claude Code, Codex, and Cursor Composer 2 all actively competing, a new benchmark drop or agent update from any of the three could reshuffle the top of the leaderboard in the next 48–72 hours. Monitor the Artificial Analysis Intelligence Index v4.0 and livecodebenchpro.com for real-time Elo shifts.

- Enterprise context-management tooling: The Portkey guide signals a nascent market for cross-agent context infrastructure. Watch for announcements from Portkey, LangChain, and similar middleware players about products that help platform teams manage CLAUDE.md/AGENTS.md at org scale — this could be the next hot category in the developer tooling market.

Reader Action Items

- Audit your Copilot model selection today: GitHub updated Copilot's supported-models page within the last 6 hours. Open your Copilot settings and check whether new models are available for your workflow; there may be a faster or more capable option you haven't tested yet.

- Start a CLAUDE.md or AGENTS.md for your repo: If you use Claude Code or any agent that reads a root-level context file, take 30 minutes today to write a minimal CLAUDE.md covering your repo's conventions, preferred libraries, and test patterns. The Portkey guide argues this is the single highest-leverage action a developer can take to improve agent output quality.

- Run the same task on two different agents and compare: Pick a real task from your backlog (a refactor, a test suite, or a small feature) and run it on both your primary coding assistant and one you haven't tried recently (e.g., if you're a Cursor user, try Claude Code directly, or vice versa). With the tool layer converging, the results may surprise you and inform your team's context and routing strategy.
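For the CLAUDE.md action item above, a small script can produce a starting point. The sketch below writes a minimal context file with the three topics that item names (conventions, preferred libraries, test patterns). The section headings and the `write_starter_context` helper are hypothetical, not an official CLAUDE.md schema; the file is free-form text that you should edit by hand afterwards.

```python
from pathlib import Path

# Hypothetical starter template. The section names mirror the three topics
# suggested in the action item and are not an official CLAUDE.md schema.
TEMPLATE = """# Project context for coding agents

## Conventions
{conventions}

## Preferred libraries
{libraries}

## Test patterns
{tests}
"""


def _bullets(items):
    """Render a list of strings as markdown bullets, one per line."""
    return "\n".join(f"- {item}" for item in items)


def write_starter_context(repo_root, conventions, libraries, tests, name="CLAUDE.md"):
    """Write a minimal context file unless one already exists; return its path."""
    path = Path(repo_root) / name
    if path.exists():
        return path  # never overwrite a file someone has already curated
    path.write_text(
        TEMPLATE.format(
            conventions=_bullets(conventions),
            libraries=_bullets(libraries),
            tests=_bullets(tests),
        ),
        encoding="utf-8",
    )
    return path
```

Passing `name="AGENTS.md"` would produce the same starter content for agents that read that file instead. The helper deliberately refuses to overwrite an existing file, so it is safe to run across many repos.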

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.