AI Coding Assistants — 2026-05-04

The dominant signal this week is the AI coding assistant market consolidation story: Cursor has reached $2B ARR while GitHub Copilot holds 4.7M paid users, and Claude Code sits at 46% developer satisfaction — figures that frame the fierce three-way competition reshaping how developers write code. Community conversation is centered on head-to-head comparisons between Claude Code, Cursor, and Copilot as developers debate which tool best fits their workflow in 2026. A fresh comparison piece from K21 Academy (published two days ago) has re-energized the debate.

Today's Lead Story

Market Share Snapshot: Cursor at $2B ARR, Copilot at 4.7M Paid Users, Claude Code at 46% Satisfaction

- What happened: A freshly published market analysis from ideaplan.io (dated within the past 5 days) aggregated the most current public figures across the three dominant AI coding assistants: Cursor has reached $2B ARR, GitHub Copilot now counts 4.7M paid subscribers, and Claude Code reports a 46% developer satisfaction rating — the lowest among the three leaders.

- Who it affects: Enterprise buyers, individual developers, and teams currently evaluating or migrating between AI coding platforms.

- Why it matters: These numbers crystallize a three-way race at very different price-points and distribution models. Cursor's ARR growth signals strong willingness-to-pay among power users; Copilot's subscriber count reflects GitHub's distribution advantage; Claude Code's satisfaction gap suggests Anthropic still has product-experience work to do despite strong underlying model quality.

Release & Changelog Radar

- GitHub Copilot — Supported Models Update (past 4 days): GitHub's official docs page for supported AI models in Copilot was updated within the past four days, indicating a model roster change or documentation refresh. Developers using Copilot should re-check which underlying models are available for chat vs. code completion in their plan tier, as model availability varies by subscription level.

- Cursor — "Automations" Agentic System (past ~2 months, most notable recent feature): Cursor rolled out "Automations," a system that lets users automatically launch agents within their coding environment triggered by a new addition to the codebase, a Slack message, or a simple timer. This moves Cursor meaningfully beyond autocomplete into ambient, event-driven coding automation.

- Claude Code vs. GitHub Copilot vs. Cursor — Fresh Comparison Guide (2 days ago): K21 Academy published an updated, detailed comparison of Claude Code, Copilot, and Cursor specifically framed around which tool developers should prioritize for career growth and productivity in 2026. The piece covers agent autonomy, context handling, and pricing across all three.

Benchmark & Performance Watch

- SWE-bench & Coding Agent Benchmarks (current landscape): The ai-agent-benchmark compendium on GitHub (updated January 2026) tracks 80+ coding agents against SWE-bench and pricing metrics. Devin, Cursor, Claude Code, and Copilot are all represented. No single new top score dropped in the past 24 hours, but the compendium remains the most current aggregated reference for SWE-bench standings heading into May 2026. Devin continues to hold leading positions on autonomous software engineering tasks.

- SWE-rebench & Contamination Transparency: A March 2026 benchmarks report from the Institute of Coding Agents highlights SWE-rebench (ICLR 2026), an automated pipeline that continuously generates 21,000+ fresh Python SWE tasks from GitHub, as the most transparent contamination-aware benchmark available. It color-codes contamination risk per submission, a feature lacking in most vendor-reported scores. Developers evaluating Claude Code, Cursor, or Copilot claims should weight SWE-rebench results more heavily than self-reported vendor benchmarks.

Developer Sentiment Pulse

-

K21 Academy readers (2 days ago): The Claude Code vs. Copilot vs. Cursor piece published this week is drawing active community engagement around the question of which tool is best for developers trying to advance their careers — revealing that many developers are now choosing coding assistants not just for productivity but as a skills-signaling decision for the job market.

-

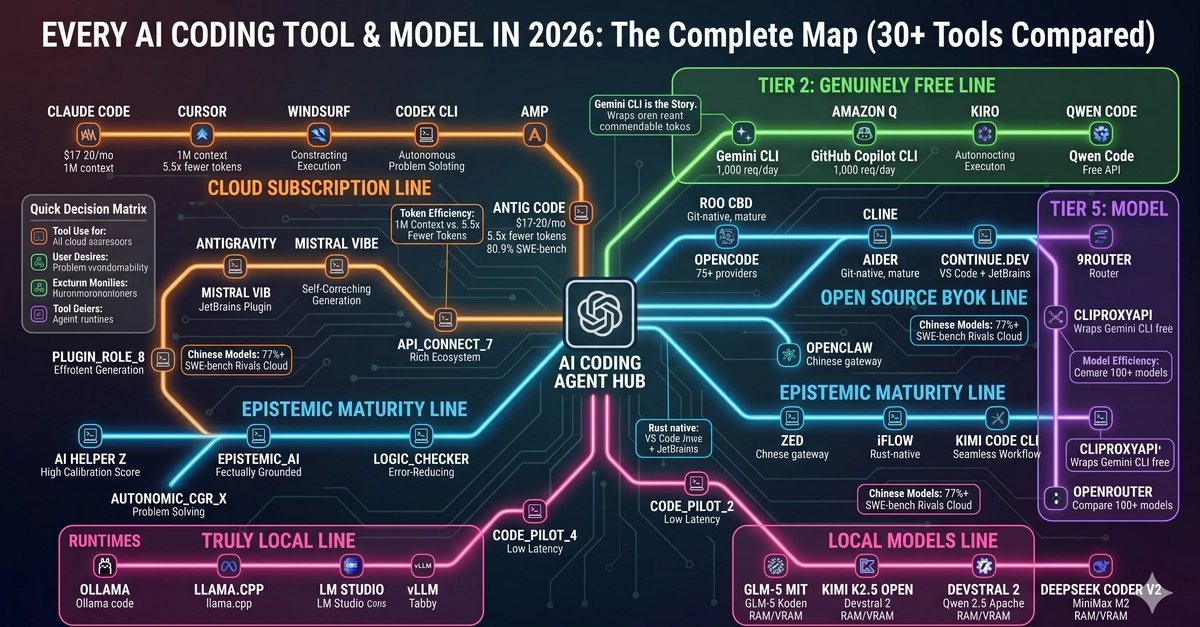

Dev.to community: A viral post titled "Every AI Coding CLI in 2026: The Complete Map (30+ Tools Compared)" (published ~3 weeks ago, still circulating heavily) reflects ongoing developer frustration that the landscape is simply too fragmented to evaluate. Developers are asking for clearer decision frameworks rather than more tools. The fact that 30+ CLI tools now exist signals both explosive innovation and evaluation fatigue.

- MindStudio blog readers: The Windsurf vs. Cursor vs. Claude Code three-way comparison (published ~3 weeks ago, still drawing traffic) consistently surfaces one friction point: developers find Claude Code strongest on underlying model quality but weakest on IDE integration smoothness compared to Cursor and Windsurf. The dominant sentiment pattern is praise for Claude's reasoning paired with frustration over its workflow friction.

Deep Dive: The Three-Way Race — Cursor, Copilot, and Claude Code in 2026

The AI coding assistant market has effectively consolidated around three dominant players with radically different go-to-market strategies, and the latest public data makes the competitive dynamics unusually legible.

Cursor ($2B ARR) is winning on willingness-to-pay among power users. Its Automations feature — event-triggered agents that activate on Slack messages, codebase changes, or timers — represents the most aggressive push toward ambient coding among the IDE-native tools. Cursor's strategy is to make the IDE itself agentic, not just the chat window.

GitHub Copilot (4.7M paid users) is winning on distribution. Microsoft's integration of Copilot across GitHub, VS Code, and enterprise Azure contracts creates a moat that pure-play startups can't easily replicate. Its recent supported-models update signals continued investment in model variety, letting enterprise buyers swap underlying LLMs without changing their tooling.

Claude Code (46% satisfaction) faces the hardest narrative challenge: Anthropic's models are widely regarded as best-in-class for reasoning and code quality, yet the 46% satisfaction figure — the lowest among the three leaders — suggests a product-experience gap. Developers praise Claude's outputs but struggle with integration friction and workflow fit compared to the more polished IDE experiences of Cursor and Copilot.

The implication for developer teams: model quality alone no longer wins. Workflow integration, pricing predictability, and agentic automation depth are now the real differentiators.

Business & Funding Moves

- Cursor (Anysphere): Has reached $2B ARR as of current reporting, a remarkable milestone for a company that raised $2.3B in a November 2025 round. The ARR figure suggests that revenue is tracking toward justifying its valuation, a rare outcome in the current funding environment. The capital is being deployed toward Composer model development and the new Automations agentic system.

- Codeium (Windsurf): Was in talks to raise at a ~$3B valuation as of early 2025, making it the closest pure-play challenger to Cursor on the funding dimension. Current ARR and round status are not confirmed in this reporting period, but the valuation context matters for any team evaluating Windsurf's long-term viability as a vendor.

What to Watch Next

- Claude Code satisfaction recovery: With a 46% satisfaction score, Anthropic is the most likely of the three leaders to ship a significant product-experience update. Watch for announcements around IDE plugin improvements, MCP integrations, or workflow smoothing that could close the gap with Cursor and Copilot; any such release would be immediate news.

- GitHub Copilot supported-models expansion: The docs page updated in the past four days suggests a model roster change is either live or imminent. Watch for GitHub's official announcement of new model options (potentially additional Anthropic or Google models) as Copilot continues its multi-model strategy.

- SWE-rebench leaderboard updates: With 21,000+ continuously generated fresh Python tasks, SWE-rebench is the benchmark least susceptible to contamination gaming. Any significant score movement from Claude Code, Cursor's underlying models, or Copilot's backend would signal a real capability shift worth tracking.

Reader Action Items

- Check your Copilot model settings today: GitHub updated its supported-models documentation within the past four days. Log into your Copilot settings and verify which models are available for chat vs. code completion on your current plan. You may have access to new options you haven't enabled yet.

- Try Cursor's Automations feature if you're on the Pro plan: If you haven't yet explored event-triggered agents (Slack message → agent launch, codebase commit → agent review), this is the most differentiated capability in the current IDE market. Set up one automation this week and measure the time saved.

- Run a personal satisfaction audit of your current tool: Given the 46% satisfaction figure for Claude Code and the mixed signals across all three leaders, it's worth spending 20 minutes this week documenting your own friction points by category (model quality, IDE integration, pricing, agentic reliability) and comparing them against the three-tool landscape. The K21 Academy comparison framework is a good starting template.
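
For readers who prefer to keep the audit in a script rather than a notes file, here is a minimal Python sketch of one way to tally friction points by category. The 1-to-5 scoring scale, the function names, and the sample entries are all assumptions made for illustration; none of them come from the K21 Academy framework or from any vendor tool.

```python
from collections import defaultdict
from statistics import mean

# The four categories named in the action item above.
CATEGORIES = ("model quality", "IDE integration", "pricing", "agentic reliability")


def summarize_friction(entries):
    """Average friction score per category (assumed scale: 1 = smooth, 5 = painful).

    `entries` is a list of (category, score, note) tuples.
    Categories with no entries are reported as None.
    """
    scores = defaultdict(list)
    for category, score, _note in entries:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category!r}")
        if not 1 <= score <= 5:
            raise ValueError(f"score out of range: {score}")
        scores[category].append(score)
    return {c: (mean(scores[c]) if scores[c] else None) for c in CATEGORIES}


def worst_category(summary):
    """Return the category with the highest average friction, or None."""
    rated = {c: s for c, s in summary.items() if s is not None}
    return max(rated, key=rated.get) if rated else None


# Placeholder entries for illustration only; replace them with your own notes.
example_log = [
    ("IDE integration", 4, "had to switch windows to review a diff"),
    ("IDE integration", 5, "lost context after restart"),
    ("model quality", 2, "one wrong refactor suggestion"),
    ("pricing", 3, "unclear usage cap"),
]

summary = summarize_friction(example_log)
print(summary)
print("Biggest friction point:", worst_category(summary))
```

Keeping the free-text note next to each score is a deliberate choice: the averages show where the friction concentrates, while the notes record what to test when trialing an alternative tool.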

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.