AI in Healthcare Pulse — 2026-05-01

This week's standout story: a landmark Harvard-led study published April 30 found an AI model outperforming emergency room doctors at diagnosis in a simulated ER, sparking intense debate across medicine. Meanwhile, the FDA requested public input on a proposed pilot program for AI-enabled, real-time clinical trials, and a pharma manufacturer received what was reported as the agency's first AI-misuse warning letter. Funding continues to flow into health AI at record pace.

Regulatory & Policy Watch

1. FDA Seeks Input on Proposed AI-Enabled Clinical Trials Pilot Program

- What happened: The FDA published a formal Request for Information in the Federal Register on April 29, soliciting input on a proposed pilot program to assess how AI-enabled technologies can improve efficiency, speed, and quality of decision-making in early-phase clinical trials. The agency is explicitly exploring "real-time" clinical trials powered by cloud infrastructure and AI.

- Impact: If implemented, the pilot could compress timelines significantly. Analysts suggest AI-enabled trial designs could trim "20, 30, 40% of overall clinical" development time. The request signals a fundamental shift in how the FDA itself thinks about drug and device evaluation, potentially opening faster approval pathways for manufacturers and delivering treatments to patients sooner.

2. FDA Issues First Warning Letter Targeting AI Misuse in Pharmaceutical Manufacturing

- What happened: In what GovPing reported as the FDA's first Warning Letter specifically targeting a drug manufacturer's improper use of AI in regulated product manufacturing and quality processes, the agency cited "AI overreliance" as a violation of Good Manufacturing Practice standards. The letter was issued around April 24.

- Impact: This is a watershed enforcement moment. Companies across pharma and biotech must now treat AI governance in manufacturing as a compliance priority — not just an operational one. Human oversight of AI-generated decisions in regulated workflows is now an explicit FDA expectation with enforcement teeth.

3. Penn LDI Analysis: Utah's AI Prescribing Law Exposes Fragmented Regulatory Landscape

- What happened: Researchers at the University of Pennsylvania's Leonard Davis Institute published an analysis of Utah's new law allowing an AI system to independently prescribe medications — examining the patchwork of state and federal regulations that govern (or fail to govern) such deployments.

- Impact: The analysis highlights a critical gap: as states move faster than federal agencies on AI prescribing authority, healthcare organizations and AI developers face a confusing, inconsistent regulatory environment. The piece serves as a cautionary framework for other states considering similar legislation.

Clinical Frontlines

Harvard Medical School / OpenAI — AI Outperforms Emergency Room Physicians at Triage Diagnosis

- The AI: An OpenAI "reasoning" model was evaluated in a simulated emergency room environment designed to replicate the fast pace and diagnostic complexity of real ER conditions.

- Results: The AI scored more than 11 percentage points higher than two human emergency room doctors on diagnostic accuracy. The Guardian, NPR, Science/AAAS, Harvard Magazine, Vox, and Gizmodo all reported on the study, which was published April 30. Researchers described the results as marking a "profound change in technology that will reshape medicine."

- Significance: This is among the most rigorous comparisons of AI and physician diagnostic performance under realistic simulated conditions published to date. Experts caution that humans are still needed for treatment and that simulated conditions differ from live ER chaos, but the magnitude of the performance gap is striking. The study adds significant momentum to the case for AI as a second-opinion diagnostic tool in high-acuity settings.

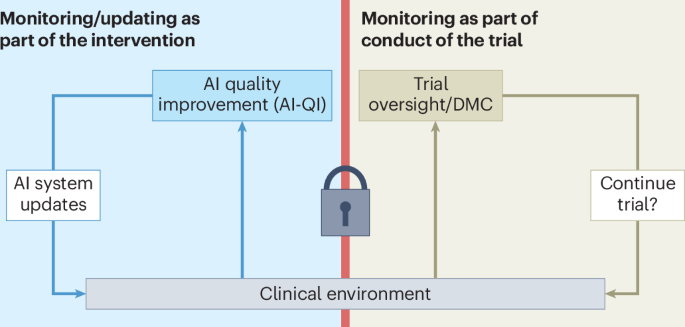

Nature Medicine — Clinical Trial Design Must Evolve for Continuously Updated AI Systems

- The AI: The framework addresses AI systems embedded in clinical workflows that are continuously monitored and updated post-deployment — a challenge traditional trial designs are not built to handle.

- Results: Published April 28 in Nature Medicine, the paper argues that standard randomized controlled trial frameworks are insufficient for evaluating AI tools that learn and change over time, and proposes new methodological approaches for ongoing monitoring.

- Significance: As hospitals and health systems deploy adaptive AI models, the absence of appropriate trial frameworks creates both patient safety and regulatory gaps. This paper provides a critical methodological foundation for the next generation of clinical AI validation.

ResMed (via AdvaMed) — Industry Dialogue on AI's Role in Digital Health Monitoring

- The AI: Carlos Nunez, Chief Medical Officer at ResMed, addressed key questions on how AI is shaping care delivery in respiratory and sleep medicine in AdvaMed's "Insight Series: AI & Digital Health," published April 24.

- Results: The interview provided an industry-practitioner perspective on AI integration in medical device workflows, addressing policy, clinical, and patient safety dimensions.

- Significance: Manufacturer-side clinical leadership is increasingly being asked to articulate how AI fits into regulated product ecosystems — a signal that the industry is moving from experimentation toward institutionalization of AI governance.

Funding & Deals

Earendel Labs — $787M (Largest Deal of Q1 2026)

- What they do: Deep learning drug discovery platform that has already generated 40+ therapeutic programs across disease areas.

- Investors: Not specified in available data.

- Why it matters: The sheer size of this round — the single largest digital health deal in Q1 2026 — signals that investors view AI-native drug discovery as a platform-scale opportunity, not just a tool. The fact that 40+ programs have already been generated suggests the platform is past proof-of-concept.

Iambic Therapeutics — Up to $1.7B (Takeda Commitment)

- What they do: AI-driven therapeutic development company focused on accelerating drug discovery timelines.

- Investors: Takeda Pharmaceutical committed up to $1.7 billion to the company.

- Why it matters: A Big Pharma company committing up to $1.7 billion to an AI drug discovery firm is a defining moment for the sector. It validates AI-powered R&D at the highest level of the pharmaceutical industry and sets a precedent for future strategic partnerships between legacy pharma and AI-native biotech.

Coral — $12.5M Seed Round

- What they do: Healthcare administrative automation startup focused on reducing operational complexity in health system workflows.

- Investors: Led by Lightspeed Venture Partners and Z47.

- Why it matters: Healthcare administrative burden is estimated to cost the U.S. system hundreds of billions annually. Coral's seed round — led by top-tier VCs — reflects growing conviction that AI can meaningfully attack this overhead. Administrative AI may deliver faster ROI than clinical AI, making it a compelling near-term commercial bet.

Research Spotlight

"Is AI Actually Improving Healthcare?" — Nature Medicine Commentary

- Published in: Nature Medicine (Vol. 32, 2026), approximately one week before this issue

- Key finding: Authors Goldenberg and Wiens undertake a critical assessment of whether AI deployments in healthcare are producing measurable improvements in patient outcomes — not just benchmark performance. The commentary challenges the field to move beyond accuracy metrics and toward evidence of real-world clinical benefit.

- Clinical relevance: As health systems scale AI deployments, this framing is essential for procurement, evaluation, and accountability decisions. Clinicians and administrators need frameworks to distinguish AI tools that genuinely improve care from those that merely perform well in controlled settings.

"Clinical Trials for Continuously Monitored and Updated AI Systems" — Nature Medicine

- Published in: Nature Medicine, April 28, 2026

- Key finding: The paper argues that as AI systems become embedded in clinical workflows and continue to be updated post-deployment, existing trial methodologies cannot adequately evaluate the safety and efficacy of those systems. New frameworks for ongoing monitoring are needed.

- Clinical relevance: Hospitals deploying adaptive AI tools — from sepsis prediction to radiology — face the reality that the model they validate today may not be the model running six months from now. This research begins to define how institutions, regulators, and developers should manage that risk.

What to Watch Next Week

- FDA RFI comment period: The FDA's Request for Information on its AI-enabled clinical trials pilot is now open. Expect formal responses from major pharma companies, CROs, and patient advocacy groups in coming weeks — the first wave of responses will signal industry priorities.

- AI diagnostic deployment decisions: Following the Harvard/OpenAI ER study, expect major health systems to publicly comment on or accelerate pilots for AI-assisted emergency triage. Watch for hospital networks and EHR vendors to announce formal evaluation programs.

- State AI prescribing legislation: In the wake of the Penn LDI analysis of Utah's AI prescribing law, monitor whether other state legislatures introduce similar bills — or whether federal agencies signal intent to preempt state-level action.

- Q1 2026 funding data finalization: Rock Health's full Q1 2026 digital health funding report is expected to be widely cited and discussed. With $4–7.4B already reported (depending on methodology), watch for analysis breaking out AI-specific investment share.

Reader Action Items

- For clinical leaders and hospital administrators: The Harvard/OpenAI ER diagnostic study is a forcing function. Begin or accelerate your institution's formal evaluation of AI-assisted triage and diagnosis tools, and establish the governance framework for how AI recommendations will be reviewed before clinicians act on them. The regulatory and liability environment will reward proactive governance.

- For pharmaceutical and biotech compliance teams: The FDA's first AI-misuse Warning Letter is a direct signal. Audit your current AI deployments in manufacturing and quality workflows now. Ensure human oversight mechanisms are documented, tested, and defensible under GMP standards before your next inspection.

- For health-tech investors: Takeda's commitment of up to $1.7B to Iambic and the Earendel Labs $787M round define the top of the AI drug discovery market. Focus due diligence on which platform-stage companies have the data assets and therapeutic program depth to command similar valuations, and on which administrative AI startups (like Coral) offer the fastest path to measurable ROI.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.