AI in Healthcare Pulse — 2026-05-08

This week, the FDA launched Elsa 4.0, a major upgrade to its internal AI tool, while state legislators pushed for patient disclosure requirements. A Harvard study reporting that an AI model diagnosed real emergency room cases more accurately than physicians continues to reverberate through clinical circles, and new research spotlights the challenge of validating continuously updated AI systems. Funding activity remains robust, with AI-native revenue cycle and drug discovery platforms attracting significant capital.

Regulatory & Policy Watch

- What happened: On May 6, 2026, the FDA announced the launch of Elsa 4.0, a major upgrade to its internal AI tool, made available to all FDA staff across scientific and administrative functions. The agency simultaneously completed a broader data platform consolidation, part of what it describes as a "bold initiative to modernize the agency."

- Impact: Elsa 4.0 signals a deepening commitment to AI-powered operations inside the FDA itself — not just in the products it reviews. This could accelerate regulatory review timelines and reshape how FDA scientists process submissions and safety data, with downstream effects on the pace of AI medical device approvals.

- What happened: A Mondaq legal analysis published May 6, 2026, notes that more than 250 AI-related healthcare bills were introduced in state legislatures in 2025 and that the Trump Administration has proposed a unified federal AI framework for healthcare. The analysis frames AI compliance as "the new compliance frontier" for health systems and vendors.

- Impact: The proliferation of state-level bills creates a fragmented compliance environment for AI healthcare companies operating across state lines. A federal framework, if enacted, could simplify compliance but may also preempt stricter state protections — a tension healthcare legal teams are actively navigating.

- What happened: Pennsylvania legislators introduced bills that would require disclosure of AI use in healthcare settings. As reported May 7, 2026, lawmakers backing the bills argue that patients "deserve to know" when AI is involved in their care decisions, from diagnostics to treatment recommendations.

- Impact: If passed, Pennsylvania's disclosure legislation could become a model for other states. For health systems and AI vendors, it raises practical questions about workflow integration of disclosure obligations and potential liability for non-disclosure.

- What happened: The American Medical Association is demanding further legislation to address AI-driven medical misinformation, including deepfake videos impersonating physicians and AI-generated health fraud content that erodes public trust in medical institutions, according to reporting published May 7, 2026.

- Impact: The AMA's push adds institutional weight to calls for targeted AI content regulation in healthcare. Without action, health professionals warn that patient trust — a foundational asset for the healthcare system — could be systematically undermined by synthetic media.

Clinical Frontlines

Harvard/Mass General — AI Outperforms Emergency Physicians in Real-World Triage Study

- The AI: OpenAI's large language model was evaluated on real emergency room patient cases for diagnostic accuracy and clinical decision-making tasks including triage and patient management recommendations.

- Results: The AI model outperformed human emergency physicians on most medical reasoning tasks in the study. Specifically, it achieved more accurate diagnoses than two human doctors in real-world ER cases, according to Harvard researchers.

- Significance: Researchers described the results as representing "a profound change in technology that will reshape medicine." While experts caution this does not mean AI will replace clinicians, it strongly supports the case for AI-assisted emergency triage as a near-term clinical reality. The study continues to generate coverage and debate across medical and technology media this week.

Euronews Health / Research Teams — AI Models Rival Doctors on Complex Medical Reasoning

- The AI: Multiple AI models were evaluated on complex medical reasoning benchmarks spanning diagnostics to patient management advice.

- Results: In findings published May 5, 2026, researchers reported that an AI model outperformed human doctors "on most medical reasoning tasks" across a broad set of clinical scenarios.

- Significance: This is a distinct study from the Harvard ER trial, reinforcing a growing body of evidence that large language models are crossing clinical competency thresholds. The convergence of multiple independent studies this week is accelerating board-level conversations about AI integration at health systems globally.

HIT Consultant / Oncology AI — Addressing the "Intelligence Gap" in Cancer Care

- The AI: A proposed "Medical Intelligence Layer" using a Multi-LLM ensemble architecture is being advanced as a framework for oncology clinical workflows.

- Results: The analysis, published May 5, 2026, argues that oncologists are drowning in data while AI-native approaches using multi-model systems can reduce burnout and close the gap between available evidence and treatment decisions.

- Significance: The piece highlights a structural challenge in AI clinical adoption: individual models may match or beat physicians on benchmarks, but the workflow integration layer — aggregating, prioritizing, and surfacing insights — remains an unsolved engineering and organizational problem, especially in oncology.

Funding & Deals

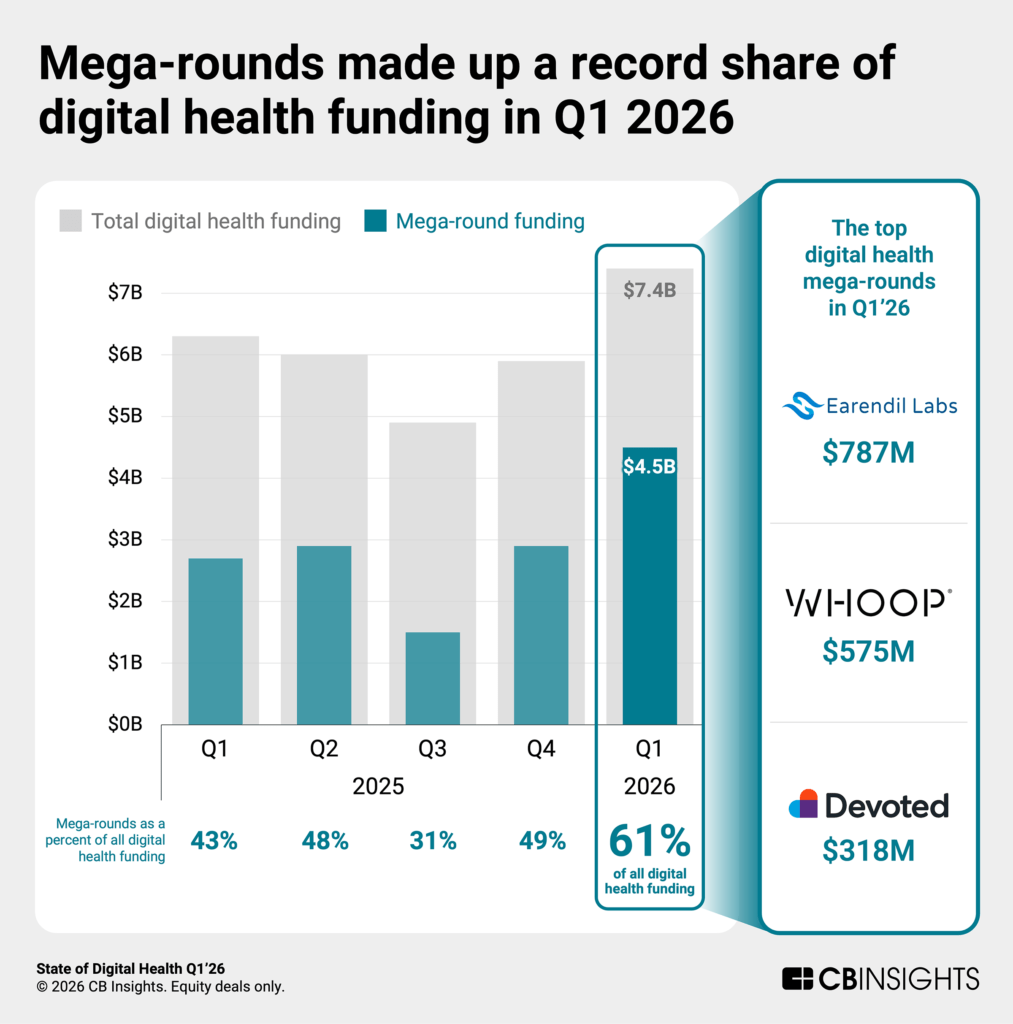

Earendil Labs — $787M (Largest Deal of Q1 2026)

- What they do: Deep learning platform that generates therapeutic programs; has already produced 40+ drug candidates.

- Investors: Not specified in available data.

- Why it matters: At $787M, this was the single largest digital health deal of Q1 2026 and reflects explosive investor confidence in AI-native drug discovery platforms. The speed at which Earendil has generated therapeutic programs — leveraging deep learning rather than traditional discovery workflows — signals a structural shift in early-stage pharmaceutical R&D.

Iambic Therapeutics — Up to $1.7B (Takeda Partnership Commitment)

- What they do: AI-driven drug discovery company focused on accelerating the path from target identification to clinical candidate.

- Investors: Takeda Pharmaceutical committed up to $1.7B.

- Why it matters: Takeda's commitment is one of the largest pharma-to-AI-startup deals on record and underscores that Big Pharma is moving from pilot programs to transformational bets on AI drug discovery. The "timeline compression" framing — AI reducing development cycles from years to months — is now a core investment thesis for major pharmaceutical acquirers.

Amperos Health — $16M Series A

- What they do: AI-native revenue cycle management startup automating denial management for health systems.

- Investors: Lead investors not specified in available data.

- Why it matters: Revenue cycle management is one of healthcare's most costly administrative burdens, with denial rates climbing industrywide. Amperos's AI-native approach — built from the ground up for automation rather than bolted onto legacy RCM systems — represents a growing category of "horizontal AI infrastructure" plays that investors are backing as the operational backbone of future health systems.

Research Spotlight

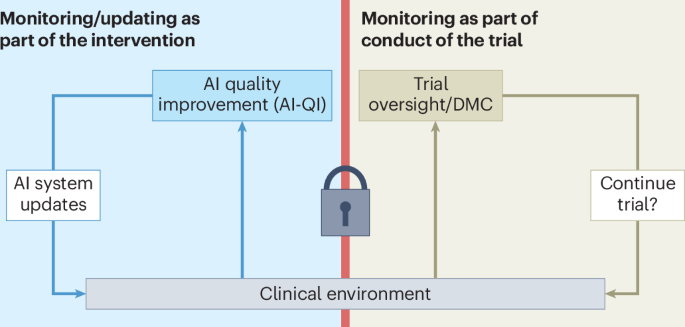

Clinical Trials for Continuously Monitored and Updated AI Systems

- Published in: Nature Medicine (2026)

- Key finding: As AI becomes embedded in clinical workflows, traditional randomized controlled trial designs are inadequate for systems that continuously learn and update. The paper argues that trial frameworks must accommodate ongoing monitoring and versioning of AI systems to maintain evidentiary validity.

- Clinical relevance: This is one of the most practically urgent research problems in clinical AI. Health systems deploying adaptive AI tools — whether for imaging, triage, or risk scoring — currently have no standardized framework for ensuring that a model update doesn't degrade validated performance. The paper provides a foundation for regulators, IRBs, and clinical AI teams to develop new protocols.
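As a rough illustration of the versioning problem described above, the sketch below shows one way a team might gate a model update against a previously validated baseline. It is a minimal example under stated assumptions, not a method from the paper: the `VersionResult` type, the `update_is_acceptable` function, the 1-point tolerance, and all the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VersionResult:
    """Score of one model version on a fixed validation set (hypothetical)."""
    version: str
    correct: int  # cases the model got right
    total: int    # size of the validation set

    @property
    def accuracy(self) -> float:
        return self.correct / self.total


def update_is_acceptable(baseline: VersionResult,
                         candidate: VersionResult,
                         max_drop: float = 0.01) -> bool:
    """Return True if the candidate's accuracy is within max_drop of the baseline.

    max_drop is an assumed tolerance; a real protocol would set it clinically.
    """
    if baseline.total != candidate.total:
        raise ValueError("versions must be scored on the same validation set")
    return candidate.accuracy >= baseline.accuracy - max_drop


# Illustrative numbers only: the update loses 1.5 points of accuracy.
baseline = VersionResult("v1.0", correct=920, total=1000)
candidate = VersionResult("v1.1", correct=905, total=1000)
print(update_is_acceptable(baseline, candidate))
```

A single accuracy threshold is a deliberate simplification. An actual protocol would likely track several metrics, subgroup performance, and statistical uncertainty across versions.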

Is AI Actually Improving Healthcare?

- Published in: Nature Medicine (Nat Med 32, 1182–1183, 2026)

- Key finding: Authors Goldenberg and Wiens directly interrogate the evidence base for clinical AI benefit, arguing that despite impressive benchmark performance, rigorous evidence of real-world patient outcome improvement remains sparse and methodologically challenged.

- Clinical relevance: Published approximately two weeks ago and still resonating this week alongside the Harvard ER study, this commentary provides an essential counterweight to benchmark-driven enthusiasm. For hospital executives evaluating AI procurement, it reinforces the need for site-specific validation studies and outcome tracking rather than reliance on published benchmarks alone.

What to Watch Next Week

- Pennsylvania AI disclosure bills: Committee votes or public hearings on the proposed legislation could set the pace for similar measures in other states. Watch for health system lobbying activity and AMA positioning.

- Federal AI healthcare framework: With the Trump Administration's unified framework proposal now widely reported, expect congressional staff briefings and initial draft language to surface, which could reshape the patchwork of 250+ state-level bills.

- FDA Elsa 4.0 early feedback: As all FDA staff begin using the upgraded internal AI tool, early signals about productivity changes in the review pipeline could emerge through industry contacts and FDA meeting request patterns.

- Post-Harvard ER study clinical response: Expect major academic medical centers and emergency medicine professional societies to issue formal responses or pilot program announcements in the wake of the Harvard/OpenAI diagnostic study's continued media momentum.

Reader Action Items

- For health system and hospital CIOs: The Nature Medicine commentary on whether AI is actually improving healthcare outcomes is required reading before your next AI vendor evaluation. Demand site-specific outcome data, not just published benchmark citations, and build post-deployment monitoring into every AI procurement contract now, before adaptive model update cycles create untracked performance drift.

- For healthcare AI companies operating in multiple states: The Mondaq compliance analysis is a practical starting point for auditing your current state-level disclosure and regulatory exposure. With 250+ state bills in play and a federal framework potentially consolidating the landscape, now is the time to map your obligations and engage proactively with regulatory counsel before enforcement actions begin.

- For clinical AI researchers and IRBs: The Nature Medicine paper on trial design for continuously updated AI systems should be circulated to your IRB and research committee now. If you are running or planning trials involving adaptive AI systems, your current protocol likely lacks adequate provisions for model versioning and update monitoring, a gap that could invalidate study results and create liability.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.