Agent Guardrail Competition Heats Up: GPT-5.2, Qwen3, and Gemini-3 Go Head-to-Head with LlamaGuard

This week in agent harness engineering brought three major developments: the AgentDoG research paper (released two days ago), which benchmarks guardrail models such as LlamaGuard4, GPT-5.2, Gemini-3-Flash, and Qwen3 side by side; the launch of the awesome-harness-engineering repository and VoltAgent's 2026 AI agent papers collection; and fresh production insights showing that removing 80% of tools outperforms any model upgrade. The key takeaways: tool minimalism beats model scaling, multi-layered guardrail architectures are becoming standard practice, and CodeAct's code-execution-first approach cuts agent turns by 20%.

Agent Harness Engineering Weekly Report — 2026-04-25

Scope note: This report covers AI Agent Harness Engineering — the software scaffolding, orchestration frameworks (LangGraph, DSPy, CrewAI, AutoGen, Claude Agent SDK, OpenAI Agents SDK), tool-use patterns, guardrails, memory systems, and evaluation infrastructure for production LLM agents. It is NOT about physical wire harnesses, cabling, or automotive electrical systems.

This Week's Headlines

- AgentDoG guardrail framework goes public on ArXiv: A diagnostic guardrail framework for AI agent safety and security dropped two days ago, benchmarking eight dedicated guard models (including LlamaGuard4) head-to-head against three general-purpose LLMs (GPT-5.2, Gemini-3-Flash, Qwen3-235B).

- awesome-harness-engineering repository launches — The ai-boost organization open-sourced a comprehensive resource list for AI agent harness engineering yesterday, covering everything from tools and patterns to self-evolving harnesses (where agents modify their own scaffolding).

- VoltAgent releases 2026 AI agent papers curated collection — A hand-picked repository of cutting-edge agent engineering, memory, evaluation, and workflow papers went live three days ago and is already gaining traction.

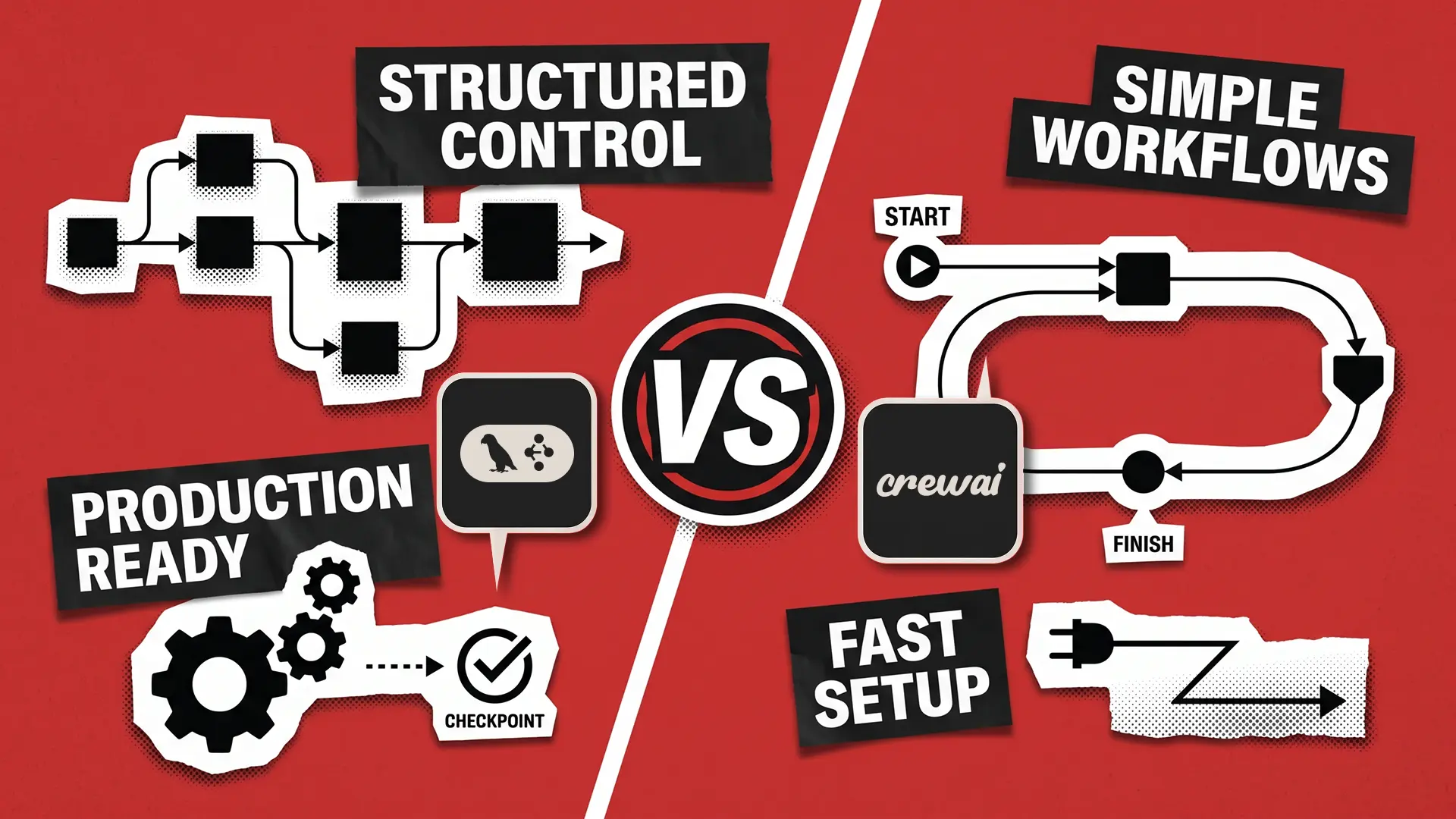

- LangGraph vs. CrewAI production showdown published — Redwerk's blog dropped a real-world framework comparison two days ago, backed by actual cost data, debugging workflows, crash recovery, and migration paths.

Framework & Tooling Updates

Uvik Software — 2026 Best Agentic AI Frameworks Guide (Updated 2 days ago)

- What's new: A comprehensive comparison of major frameworks for autonomous AI agents—LangGraph, CrewAI, AutoGen, OpenAI Agents SDK—covering architecture, memory systems, and multi-agent orchestration.

- Why it matters: Goes beyond benchmarks to show real trade-offs when you're deploying to production. Offers concrete guidance on memory management, context extension strategies, and selection criteria based on team size and task type.

- Migration notes: Includes practical steps for moving existing LangChain codebases to LangGraph.

Redwerk — LangGraph vs. CrewAI 2026 Production Survival Guide (2 days ago)

- What's new: In-depth comparison grounded in actual production deployment experience. Includes real cost data, debugging approaches, crash recovery mechanisms, and clear migration paths between the two frameworks.

- Why it matters: Gives you actual operational data—not lab numbers—so you can make informed long-term cost and maintainability decisions when choosing your framework.

- Migration notes: Details API differences and state management pattern shifts you need to watch when moving from CrewAI to LangGraph or vice versa.

Research & Evaluation

AgentDoG: Diagnostic Guardrail Framework for AI Agent Safety and Security

- Authors / Org: ArXiv submission, published two days ago

- Core finding: AgentDoG benchmarks eight dedicated guard models (LlamaGuard3-8B, LlamaGuard4-12B, Qwen3-Guard, ShieldAgent, JoySafety, ShieldGemma, PolyGuard, NemoGuard) alongside three general-purpose LLMs (GPT-5.2, Gemini-3-Flash, Qwen3-235B-A22B). It proposes an integrated framework that diagnoses agent safety and security simultaneously.

- Implication for harness design: Relying on a single guard model looks increasingly hard to justify, and layering several guard models should be standard practice. The fact that GPT-5.2, Qwen3, and Gemini-3 are now evaluated directly against dedicated guard models opens the door to architectures that use general-purpose models as guardrails. That design choice gives up some isolation in exchange for cost and latency savings.
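To make the layering idea concrete, here is a minimal sketch of a guard pipeline that runs independent checks in order and blocks as soon as one of them flags the action. Everything in it is an assumption for illustration: `keyword_guard`, `stub_model_guard`, and the `Verdict` type are hypothetical, and the stub stands in for whatever dedicated or general-purpose guard model a team actually calls. It is not how AgentDoG or any guard model is implemented.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Verdict:
    guard: str       # name of the guard that produced this verdict
    unsafe: bool     # True if the guard flagged the action
    reason: str = ""


# A guard is any callable that inspects a proposed agent action (as text).
Guard = Callable[[str], Verdict]


def keyword_guard(action: str) -> Verdict:
    """Cheap first layer: a hypothetical denylist check."""
    banned = ("rm -rf", "drop table")
    hit = next((b for b in banned if b in action.lower()), None)
    return Verdict("keyword", hit is not None, f"matched {hit!r}" if hit else "")


def stub_model_guard(action: str) -> Verdict:
    """Placeholder for a call to a guard model (assumption: a real
    implementation would send the action to a model endpoint here)."""
    unsafe = "password" in action.lower()
    return Verdict("stub-model", unsafe, "mentions credentials" if unsafe else "")


def run_layers(action: str, guards: List[Guard]) -> List[Verdict]:
    """Run guards in order, stopping at the first one that flags the action."""
    verdicts: List[Verdict] = []
    for guard in guards:
        verdict = guard(action)
        verdicts.append(verdict)
        if verdict.unsafe:
            break
    return verdicts


def is_allowed(action: str, guards: List[Guard]) -> bool:
    """An action is allowed only if no layer flagged it."""
    return not any(v.unsafe for v in run_layers(action, guards))
```

Ordering the cheap check first means the model-backed layer is only invoked when the earlier layer passes, which is one way to keep latency down while still running more than one guard.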

LLM-Agent-Harness-Survey (HuggingFace Dataset)

- Authors / Org: GloriaaaM / HuggingFace

- Core finding: Vercel's team discovered in controlled experiments that removing 80% of an agent's tools outperformed any model upgrade. Schema-first tool contracts (like OpenAPI specs) reduce interface misuse but not semantic misuse. Removing tools proved far more effective than improving tool design alone. CodeAct (code execution as primary action) achieved 17/17 MINT benchmark tasks with 20% fewer turns.

- Implication for harness design: Tool minimalism is the real performance lever. Schema design is necessary but not sufficient. You need a separate runtime validation layer. Measuring actual tool call frequency and error rates before adding new tools should be standard practice.
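The gap between interface misuse and semantic misuse can be shown with a small sketch. The tool contract below (`start`, `end`, `limit`) is hypothetical and not taken from the survey; the point is only that a call can satisfy the schema and still be wrong, which is why a second, runtime validation pass is useful.

```python
from datetime import date
from typing import Any, Dict, List

# Hypothetical tool contract: argument name -> required Python type.
SCHEMA = {"start": str, "end": str, "limit": int}


def schema_errors(args: Dict[str, Any]) -> List[str]:
    """Interface-level check: are the required fields present and well-typed?"""
    errors = []
    for name, expected in SCHEMA.items():
        if name not in args:
            errors.append(f"missing {name}")
        elif not isinstance(args[name], expected):
            errors.append(f"{name} must be {expected.__name__}")
    return errors


def semantic_errors(args: Dict[str, Any]) -> List[str]:
    """Runtime check: do the values make sense together?"""
    errors = []
    try:
        start = date.fromisoformat(args["start"])
        end = date.fromisoformat(args["end"])
    except ValueError:
        return ["start and end must be ISO dates (YYYY-MM-DD)"]
    if end < start:
        errors.append("end precedes start")
    if not 1 <= args["limit"] <= 1000:
        errors.append("limit out of range")
    return errors


def validate_call(args: Dict[str, Any]) -> List[str]:
    """Run the schema check first; only well-formed calls reach the semantic check."""
    errors = schema_errors(args)
    return errors if errors else semantic_errors(args)
```

A call such as `{"start": "2026-04-10", "end": "2026-04-01", "limit": 10}` passes the schema check but is rejected by the semantic check, which is the case a schema alone cannot catch.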

Building AI Coding Agents: Scaffolding, Harness, Context Engineering, and Lessons

- Authors / Org: ArXiv, released March 2026

- Core finding: Proposes registry-based tool architecture, delayed-discovery external tools via MCP, and a five-layer safety stack: prompt-level guardrails → schema-level tool gating → runtime approval systems → tool-level validation → custom lifecycle hooks.

- Implication for harness design: Dual-agent separation (one agent for schema reasoning, another for execution) at the schema-level tool-gating layer is a practical, production-ready pattern. It offers much more robust isolation than a single agent that holds every permission. Enforcing constraints incrementally at lower abstraction levels is the way forward.
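One possible reading of registry-based tool gating with dual-agent separation is sketched below. The `GatedRegistry` class, the `planner` and `executor` role names, and the two note tools are all hypothetical; the paper's actual design may differ. The sketch only shows the gating idea: each role gets a filtered view of the registry, and calls outside that view are refused.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Tool:
    name: str
    fn: Callable[..., object]
    mutating: bool  # True if the tool changes state (writes, deletes, sends)


class GatedRegistry:
    """Registry that exposes a different tool view to each agent role."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def visible_to(self, role: str) -> List[str]:
        # Assumption: the planner only sees read-only tools,
        # the executor sees everything, unknown roles see nothing.
        if role == "executor":
            return sorted(self._tools)
        if role == "planner":
            return sorted(n for n, t in self._tools.items() if not t.mutating)
        return []

    def call(self, role: str, name: str, *args, **kwargs):
        if name not in self.visible_to(role):
            raise PermissionError(f"{role!r} may not call {name!r}")
        return self._tools[name].fn(*args, **kwargs)


registry = GatedRegistry()
registry.register(Tool("read_note", lambda key: f"note:{key}", mutating=False))
registry.register(Tool("delete_note", lambda key: f"deleted:{key}", mutating=True))
```

Because the gate sits in the registry rather than in the prompt, a planner that is tricked into requesting `delete_note` still cannot run it.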

Production Patterns & Practitioner Insights

Removing 80% of Tools Beats Any Model Upgrade

- Context: Vercel's production AI agent team discovered this while debugging declining task success rates.

- Problem: Model upgrades weren't moving the needle on agent performance. Suspicion grew that a bloated tool set was polluting context.

- Solution / Takeaway: Cutting 80% of tools delivered more performance gains than any model upgrade. The lesson: tool minimalism is architecture. Measure actual tool call frequency and error rates before adding more. Most production teams should audit their current toolkit first, not reach for a newer model.
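A tool audit of the kind described above can start from nothing more than a call log. The sketch below assumes a hypothetical log format of `(tool_name, succeeded)` pairs and illustrative thresholds (`min_calls`, `max_error_rate`); neither comes from Vercel's write-up.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def tool_stats(log: Iterable[Tuple[str, bool]]) -> Dict[str, Dict[str, float]]:
    """Aggregate call counts and error rates per tool from (tool, succeeded) pairs."""
    calls: Counter = Counter()
    errors: Counter = Counter()
    for tool, succeeded in log:
        calls[tool] += 1
        if not succeeded:
            errors[tool] += 1
    return {
        tool: {"calls": calls[tool], "error_rate": errors[tool] / calls[tool]}
        for tool in calls
    }


def removal_candidates(
    stats: Dict[str, Dict[str, float]],
    registered: List[str],
    min_calls: int = 5,
    max_error_rate: float = 0.5,
) -> List[str]:
    """Flag tools that are never called, rarely called, or mostly failing."""
    flagged = []
    for tool in registered:
        s = stats.get(tool)
        if s is None or s["calls"] < min_calls or s["error_rate"] > max_error_rate:
            flagged.append(tool)
    return sorted(flagged)
```

The output is a candidate list for human review, not an automatic deletion list: a rarely used tool may still be the only way to complete an important task.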

Self-Evolving Harnesses: Agents That Rewrite Their Own Scaffolding

- Context: Latest meta-harness pattern documented in the ai-boost/awesome-harness-engineering repository.

- Problem: Static harnesses force agents to use fixed strategies even when task types demand different approaches.

- Solution / Takeaway: Agents can modify their own prompts, tools, and strategies based on execution history. This is the long-term direction for harness design. Near-term wins: dynamic tool prioritization based on execution logs. Critical caveat: self-modification must operate within clearly defined boundaries to remain safe.
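The near-term win mentioned above, dynamic tool prioritization inside fixed boundaries, can be sketched in a few lines. The history format (`tool -> (successes, attempts)`), the allowlist, and the smoothing choice are assumptions made for illustration and are not taken from the repository.

```python
from typing import Dict, FrozenSet, List, Tuple


def prioritize_tools(
    current: List[str],
    history: Dict[str, Tuple[int, int]],
    allowed: FrozenSet[str],
) -> List[str]:
    """Reorder tools by smoothed success rate without leaving the allowlist.

    The boundary is explicit: the function can reorder or drop tools,
    but it never introduces a tool that is not already in `current`
    and in `allowed`.
    """

    def smoothed_rate(tool: str) -> float:
        successes, attempts = history.get(tool, (0, 0))
        # Laplace smoothing so tools with no history start at 0.5.
        return (successes + 1) / (attempts + 2)

    kept = [tool for tool in current if tool in allowed]
    # sorted() is stable, so ties keep their existing relative order.
    return sorted(kept, key=smoothed_rate, reverse=True)
```

Keeping the allowlist as an argument the agent cannot edit is one simple way to express the "clearly defined boundaries" caveat in code.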

CodeAct: Code Execution as Primary Agent Action Cuts Turns by 20%

- Context: Systematic comparison of CodeAct vs. traditional tool-calling on the MINT benchmark.

- Problem: Complex multi-step tasks waste context windows with excessive back-and-forth turns.

- Solution / Takeaway: Making code execution a first-class agent action wins on all 17 MINT tasks and cuts turns by 20%. Integrate a code interpreter as a Tier-1 tool and pair it with a robust sandboxing and isolation strategy to get measurable efficiency gains.
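The core of the pattern is that the model emits a code snippet as its action and receives the output as its observation. The sketch below is a minimal illustration under stated assumptions: `run_code_action` is a hypothetical helper, and a child process with a timeout is not a real sandbox. A production harness would still need container or VM isolation, resource limits, and network controls.

```python
import subprocess
import sys
from typing import Dict, Union


def run_code_action(code: str, timeout_s: float = 5.0) -> Dict[str, Union[bool, str]]:
    """Execute a model-emitted Python snippet and return an observation.

    Runs the snippet in a separate interpreter in isolated mode (-I).
    This limits accidental interference with the harness process but is
    NOT a security boundary on its own.
    """
    try:
        proc = subprocess.run(
            [sys.executable, "-I", "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return {"ok": False, "stdout": "", "stderr": "timed out"}
    return {"ok": proc.returncode == 0, "stdout": proc.stdout, "stderr": proc.stderr}


# One code action can chain steps that would otherwise need several tool calls.
action = """
values = [3, 1, 4, 1, 5]
total = sum(values)
print(total, max(values))
"""
observation = run_code_action(action)
```

Here the sum and the maximum come back in a single turn, which is the mechanism behind the turn reduction the comparison reports.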

Trending OSS Repositories

- ai-boost/awesome-harness-engineering — Purpose-built resource list for AI agent harness engineering; covers tools, patterns, evaluation, memory, MCP, permissions, observability, orchestration, and self-evolving harnesses. Launched yesterday and already drawing attention.

- VoltAgent/awesome-ai-agent-papers — 2026 curated papers on agent engineering, memory, evaluation, workflows, and autonomous systems. Published three days ago.

- masamasa59/ai-agent-papers — Bi-weekly AI agent papers collection; includes scaffolding, harness, and context engineering papers for terminal coding agents.

Deep Dive: AgentDoG — The New Era of Guardrail Model Competition

Released on ArXiv two days ago, AgentDoG is now the most talked-about piece of research in the agent harness community. It proposes a unified diagnostic framework for simultaneous evaluation of AI agent safety and security.

The breakthrough is scope. AgentDoG doesn't just benchmark dedicated guard models (LlamaGuard3-8B, LlamaGuard4-12B, Qwen3-Guard, ShieldAgent, JoySafety, ShieldGemma, PolyGuard, NemoGuard). It also runs general-purpose models—Gemini-3-Flash, GPT-5.2, Qwen3-235B-A22B-Instruct—through the same evaluation. This answers a practical question: "Could a general-purpose LLM as a guardrail outperform a dedicated guard model?"

Why this matters for harness architecture: Traditionally, production agent systems deploy dedicated guard models (LlamaGuard family) as a separate orchestration layer. But if GPT-5.2 and Qwen3 now match or exceed dedicated guard quality, the calculus changes. You could embed guardrails directly in your main model, skip the extra serverless endpoint, and pocket savings in cost and latency.

The tradeoff is real. Context pollution, prompt injection bypasses, and isolation failures when one model tries to both reason and guard are legitimate risks. AgentDoG's "diagnostic" approach—separating safety and security measurements—gives you a quantitative way to weigh these risks.

Two practical lessons for production harness designers: First, it's time to reduce single-model guard dependency and experiment with multi-layered configurations. Second, the choice between dedicated vs. general-purpose guardrails is now a mature architectural decision to be made case-by-case based on cost, latency, and isolation requirements.

What to Watch Next Week

- Full AgentDoG paper benchmarks — Detailed metrics and experimental conditions may shift how teams choose guardrail models.

- awesome-harness-engineering self-evolving patterns documentation — As this repo (launched yesterday) matures and receives contributions, self-modification harness safety boundaries will crystallize, raising production viability.

- Redwerk's detailed cost breakdown — Additional data on large-scale concurrent scenarios will inform framework selection for scaled deployments.

Reader Action Items

- Audit your tool set immediately: Follow Vercel's lead. Measure actual call frequency and error rate per tool on your current agents. Start by removing the bottom 20%, then keep pruning toward the kind of reduction Vercel reported (80%) before considering any model upgrade.

- Review multi-layered guardrail architecture: Use AgentDoG results to pressure-test single-guard setups. Experiment with combining general-purpose LLM guardrails (Qwen3-235B, GPT-5.2) with dedicated models on your real workload.

- Pilot CodeAct patterns: Test code execution as a first-class agent action on multi-step tasks. Pair it with solid sandbox security design and measure turn reduction.

- Follow awesome-harness-engineering: This repo (launched yesterday) is becoming the practical playbook for harness patterns. Watch the self-evolving harness section and start with small, low-risk experiments internally.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.