Agent Harness Engineering Weekly Report — May 7, 2026

This week in agent harness engineering, a deep-dive guide emerged on build-vs-buy decisions for multi-agent orchestration platforms, while a comparative security guardrails study hit arXiv testing DKnownAI Guard against AWS Bedrock, Azure Content Safety, and Lakera. HuggingFace flagged evaluation costs as the new compute bottleneck, and a fresh community-driven OSS resource, `awesome-harness-engineering`, just landed on GitHub two days ago. With limited new releases this week, this report sticks to verified, actionable insights.

Agent Harness Engineering Weekly Report — May 7, 2026

Scope note: This report covers AI Agent Harness Engineering — the software scaffolding, orchestration frameworks (LangGraph, DSPy, CrewAI, AutoGen, Claude Agent SDK, OpenAI Agents SDK), tool-use patterns, guardrails, memory systems, and evaluation infrastructure for production LLM agents. It is NOT about physical wire harnesses, cabling, or automotive electrical systems.

This Week's Headlines

- Multi-Agent Orchestration Platforms Broken Down Into 5 Build-vs-Buy Layers — Augment Code released a 2026-era guide decomposing seven major platforms (LangGraph, CrewAI, and others) across five independent layers to map team fit (posted 3 days ago).

- AI Agent Security Guardrails Comparative Study Goes Live on arXiv — Researchers benchmarked DKnownAI Guard against AWS Bedrock Guardrails, Azure Content Safety, and Lakera Guard in real agent scenarios roughly a week ago.

- HuggingFace Blog: "AI Evals Are Becoming the New Compute Bottleneck" — An analysis post from about a week ago tackles surging eval costs (covering ResearchGym from ICLR 2026) and stresses the importance of evaluation infrastructure design.

awesome-harness-engineeringGitHub Repository Just Launched — A new community awesome list dedicated to agent harness engineering, spanning tools, patterns, evals, memory, MCP, permissions, observability, and orchestration—even covering meta-harness patterns where agents tweak their own scaffolding (added 2 days ago).

Framework & Tooling Updates

LangGraph — Stateful AI Agent Orchestration in Practice (Updated 2 Days Ago)

- What's new: A hands-on tutorial on pyshine.com covering LangGraph's graph-based orchestration, checkpointing, and human-in-the-loop patterns has been refreshed. It digs into state persistence, conditional edges, and checkpoint-based rollbacks—all production-ready patterns.

- Why it matters: LangGraph uses graph structures to bring explicit state management to complex multi-step agent workflows. This unlocks workflow visualization, human handoff at intermediate checkpoints, and error recovery. Debugging and auditability in production jump dramatically.

- Migration notes: Converting existing LangChain chains to LangGraph requires refactoring into nodes and edges, so factor in upfront rework.

Multi-Agent Orchestration Platforms — 7 Frameworks Compared (3 Days Ago)

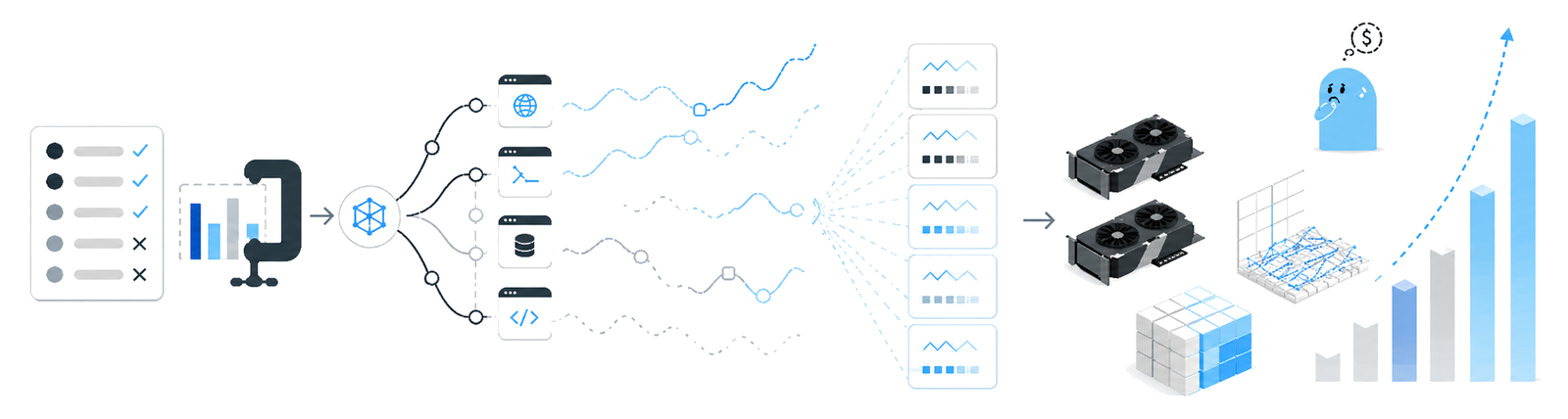

- What's new: Augment Code released a 2026 guide comparing seven multi-agent orchestration platforms from a build/buy/hybrid lens. The core insight: orchestration splits into five distinct layers.

- Why it matters: Cramming all layers into a single tool is inefficient. The guide shows with real data that the best tool for each layer depends on team size, tech stack, and SLA needs—a practical lens for architecture decisions.

- Migration notes: Migration paths from monolithic agent stacks to layered designs are included and can be rolled out incrementally.

Research & Evaluation

A Comparative Evaluation of AI Agent Security Guardrails (arXiv:2604.24826)

- Authors / Org: Independent research team (DKnownAI-affiliated)

- Core finding: DKnownAI Guard was benchmarked against three competitors—AWS Bedrock Guardrails, Azure Content Safety, and Lakera Guard—in real AI agent security scenarios. The evaluation zeroes in on agent-specific attack vectors: prompt injection, escape attempts, and harmful output generation.

- Implication for harness design: Guardrails must embed as multi-layered defense across the entire agent execution loop, not just surface-level filtering. Harness design should layer three security tiers: prompt-level, schema-level, and runtime-level safeguards. Betting on a single solution leaves blind spots.

AI Evals Are Becoming the New Compute Bottleneck (HuggingFace Blog)

- Authors / Org: HuggingFace Research Team

- Core finding: Modern agent eval benchmarks—including ResearchGym (ICLR 2026)—span 39 subtasks drawn from ACL, ICLR, and ICML papers, asking agents to perform real ML research. Eval execution costs now rival LLM training costs.

- Implication for harness design: Evaluation infrastructure must be a first-class design concern. Eval cost optimization—caching, parallel runs, partial evals—has become a top priority for production agent systems. The evaluation harness itself is evolving into its own engineering domain.

Building AI Coding Agents for the Terminal: Scaffolding, Harness, Context Engineering (arXiv:2603.05344)

- Authors / Org: Terminal agent research team

- Core finding: The paper documents a registry-based tool architecture with MCP-powered lazy tool discovery, plus a five-tier safety architecture (prompt-level guardrails → schema-level tool gating → runtime approval → tool-level validation → custom lifecycle hooks) applied to coding agents. Published in March, it stands as validated recent prior work.

- Implication for harness design: Dual-agent separation for schema-level tool gating shrinks the attack surface far more than unrestricted tool access. The five-tier safety architecture serves as a direct reference for production coding agent harness design.

Production Patterns & Practitioner Insights

Six Multi-Agent Orchestration Patterns with Python/CrewAI (2 Days Ago)

- Context: Knowlee AI released a 2026 hands-on guide for agent fleet orchestration, complete with CrewAI Python examples, cron-based automation registry operations, and observability setup.

- Problem: As multi-agent systems grow complex, managing inter-agent dependencies, restarting failed agents, and tracking global state become hard. Without a unified registry for cron automation and agent lifecycle, operational overhead balloons.

- Solution / Takeaway: Deploy an automation registry to centrally manage agent scheduling, retries, and state. Design a dedicated observability layer to collect per-agent execution logs and success/failure metrics, then wire up alerting for failures. Knowlee's reference architecture gives teams immediately actionable patterns.

AI Agent Framework Scorecard: Cost, Latency, and Production Readiness (3 Days Ago)

- Context: nadcab.com published a scorecard comparing major agent frameworks (LangGraph, Google ADK, AWS Strands, AutoGen, CrewAI) on cost, latency, and production readiness.

- Problem: Framework selection often stops at feature checklists, overlooking cost efficiency and latency traits that matter most in production.

- Solution / Takeaway: Evaluate production agent stacks across three axes beyond feature parity: token cost, call latency, and failure recovery. For long-running agents especially, context compression and checkpointing strategies heavily impact costs.

Trending OSS Repositories

- ai-boost/awesome-harness-engineering — A dedicated awesome list for agent harness engineering, covering tools, patterns, evals, memory, MCP, permissions, observability, and orchestration. Even includes meta-harness patterns where agents evolve their own scaffolding (added 2 days ago).

- tmgthb/Autonomous-Agents — A curated archive of autonomous agent research papers; includes Petri-net-based custom eval scaffolds and eval methods using real codebases in Docker (updated daily).

- VoltAgent/awesome-ai-agent-papers — AI agent research papers from 2026, organized by agent engineering, memory, evals, workflows, and autonomous systems (added 2 weeks ago).

Deep Dive: AI Agent Security Guardrails Comparative Study — What Harness Architects Need to Know

In agent harness engineering, security is no longer an afterthought. The newly published arXiv paper "A Comparative Evaluation of AI Agent Security Guardrails" represents one of the first systematic head-to-head comparisons of DKnownAI Guard against AWS Bedrock Guardrails, Azure Content Safety, and Lakera Guard in actual agent scenarios.

The study matters not because it's another generic content safety benchmark, but because it stress-tests agent-specific attack vectors—prompt injection, role escape, tool misuse, chain-of-thought bypass—within real agent execution loops. Legacy LLM content filters test one-shot input-output pairs; agent environments spawn multi-turn loops where tool outputs feed back into the next reasoning step. Attacks within this loop are harder to catch with single-layer guardrails.

Earlier work (arXiv:2603.05344, "Building AI Coding Agents for the Terminal") proposed a five-tier safety architecture: ①prompt-level guardrails → ②schema-level tool gating (via dual-agent separation) → ③runtime approval systems (including permanent permissions) → ④tool-level validation → ⑤custom lifecycle hooks. This new comparative study empirically measures how well external guardrail solutions cover tiers ① and ④ within agent loops.

Three takeaways for harness architects stand out. First, single-vendor guardrail reliance is risky. Cloud guardrails from AWS, Azure, and Lakera optimize for generic content safety; they can have blind spots against complex multi-turn agent attacks. Second, defense-in-depth is mandatory. Combine external guardrails with internal schema validation and runtime approval. Third, threat modeling per agent is essential. Coding agents and search agents have different attack surfaces, so nail down your threat model at harness design time.

On integration patterns, the most practical near-term approach is to annotate each tool in MCP registration with explicit permission scopes, then enforce schema validation at runtime to block unauthorized calls. External guardrails become a supplementary layer, not the main line of defense.

What to Watch Next Week

- Agent Evaluation Cost Optimization Tools — With HuggingFace flagging eval cost as the new bottleneck, expect new dedicated eval infra tools or framework updates supporting caching, partial evals, and parallel runs. Watch for ResearchGym and other benchmarks going open-source.

awesome-harness-engineeringRepository Growth — Track how quickly this two-day-old repo absorbs community contributions and whether reference implementations for meta-harness patterns (agents tweaking their own scaffolding) get added.- Five-Layer Orchestration Framework Adoption — Monitor whether Augment Code's five-layer decomposition gets cited and extended in other practitioner blogs and conference talks—a sign of industry consensus on architecture mental models.

Reader Action Items

- Conduct a Guardrail Audit: Cross-check your agent system's security layers against arXiv:2603.05344's five-tier safety architecture. Flag any gaps, especially schema-level tool gating and dual-agent separation. If you're leaning on a single external guardrail, remediate now.

- Budget for Evaluation Costs: Reference the HuggingFace report to carve out eval execution as a separate budget line. Design evaluation caching strategies and incremental eval pipelines. Eval costs will soon outpace inference.

- Map Orchestration Layers: Use Augment Code's five-layer model (routing, state, tool execution, memory, observability) to inventory which tools own each layer in your stack. Assess operational drag from single tools spanning multiple layers.

- Bookmark and Contribute to

awesome-harness-engineering: Add this new awesome list to team reference materials. Consider submitting your own patterns or case studies as the community knowledge base grows fast.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.