It May Not Be an AI Bubble: Claude Code Proves It With Real Revenue

The White House is shifting away from strict AI model regulation toward a "partnership" approach with companies. At the same time, AI agents like Claude Code are beginning to show tangible revenue contributions, easing concerns about an AI bubble. Meanwhile, developer communities are debating how product managers should adapt to AI-accelerated engineering and what actually qualifies as a "real AI agent."

Global AI Trends Brief — May 8, 2026

1. Notable Tech Announcements & News

🏛️ White House Officially Embraces "Partnership" Over AI Regulation

The White House is backing away from stricter AI model regulation, officially announcing a preference for collaborative "partnerships" with companies instead. A senior White House official stated that the government is pursuing "partnership" rather than "regulation."

This marks a shift from an April 4 New York Times report that the administration was considering a pre-launch review process for new AI models, and it signals reduced regulatory uncertainty for the AI sector. The shift does not settle safety concerns, however. Ahmed Hamza, a computer scientist at the University of Colorado, notes that "once powerful AI models are deployed, preventing misuse becomes nearly impossible, making safety a top priority."

💰 "It May Not Be an AI Bubble" — Claude Code Proves It With Revenue

The Atlantic published an analysis arguing that "AI revenue is finally catching up to the hype," citing the rise of Claude Code and other AI agents. The piece marks a potential turning point in the AI bubble debate.

AI coding agents like Anthropic's Claude Code are starting to contribute to real corporate revenue. That offers counterevidence to skeptics who questioned whether AI's return on investment could justify the hype. At POSSIBLE 2026, an industry conference for advertising professionals, the conversation likewise centered on moving beyond AI hype toward practical applications.

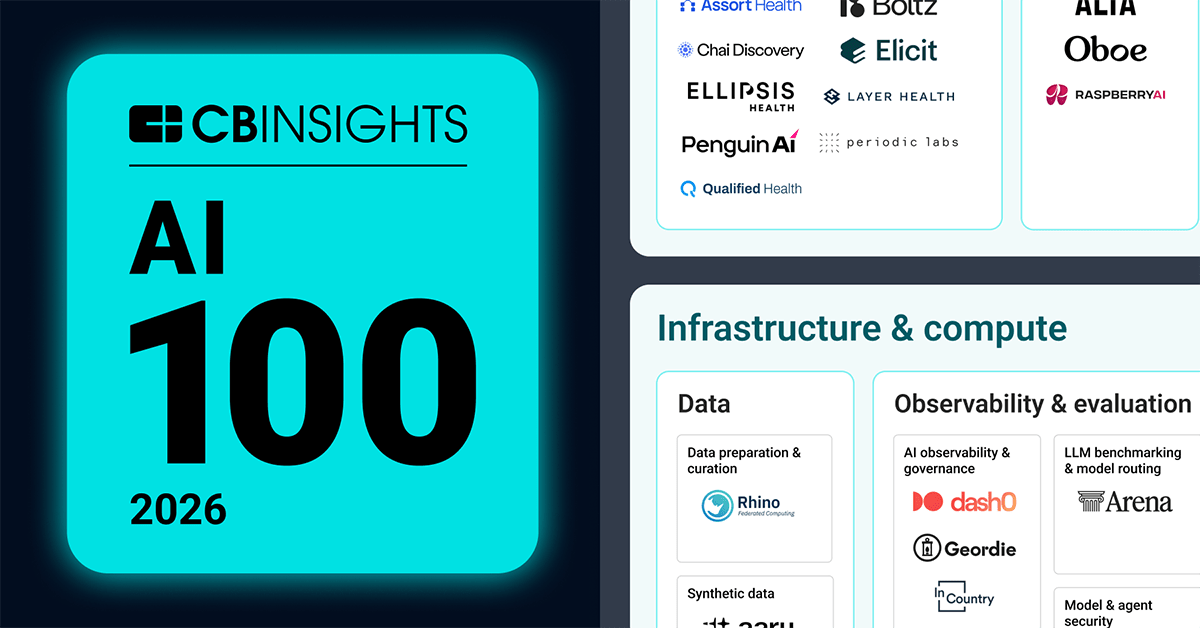

🧠 CB Insights Releases 'AI 100' — 2026's Most Promising AI Startups

CB Insights has released its annual 'AI 100' list, now in its 10th year. Using CB Insights' predictive signals, the report identifies emerging AI companies in infrastructure, enterprise applications, and industry-specific use cases. It also examines how AI is reshaping thinking and decision-making across sectors.

2. Today's Featured Papers & Research

Note: A snapshot of Hugging Face Daily Papers for May 8, 2026 was captured, but individual paper titles, authors, and summaries could not be verified from it. The items below are research-related developments confirmed within the last 24 hours.

AI Safety Research — Post-Launch Control Is Practically Impossible

Ahmed Hamza, a computer scientist at the University of Colorado, published an analysis in The Conversation. He argues that preventing misuse of powerful AI models after deployment is "nearly impossible." The argument underscores the importance of building safety verification frameworks that apply before deployment.

GCRI 'AI Risk and Strategy 2026' Report

In its early-2026 report on AI risk and strategy, the Global Catastrophic Risk Institute (GCRI) characterized AI progress over the past year as "substantial but uneven." The report judges extreme short-term AI scenarios to be unlikely but not impossible.

Deepfake AI Threat — Warning of Public Discourse Distortion

Crescendo AI, an AI news curation platform, aggregated expert warnings that the spread of deepfake visual media poses "serious threats to public discourse." Experts worry such content could manipulate market sentiment or incite social unrest before official rebuttals can circulate.

3. Community & Expert Insights

💬 "How Do PMs Survive in the AI Acceleration Era?"

A Hacker News thread from two days ago, asking how product teams are keeping pace with AI-accelerated engineering output, has sparked active debate. The core tension is that detailed specification writing made sense when engineering was the bottleneck. Now that engineering moves faster, the specs themselves are delaying deployment. Contributors report frequent cases where rushing to cut rework costs ends up causing bigger losses.

💬 "What Actually Makes a Real AI Agent?"

Another Hacker News discussion centers on defining "true autonomous AI agents." The key criticism is that a workflow that checks incoming emails, reviews PDFs, and enters data into Google Sheets isn't an AI agent. It is just "a workflow with LLM calls as nodes." Participants are actively debating where the line between genuine autonomy and simple automation should be drawn.
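The minimal Python sketch below contrasts the two patterns under debate. Everything in it is hypothetical:

- `fake_llm` stands in for a real model call.
- `scripted_policy` stands in for the model's own step-by-step decisions.
- The tool names are illustrative and do not come from any product's API.

In `fixed_workflow`, the developer hard-codes the steps and the LLM fills in one node. In `agent_loop`, the policy chooses which tool to run next and when to stop.

```python
from typing import Callable

# Hypothetical types: a tool maps a string argument to a string result;
# a policy looks at the goal and history and returns (action, argument).
Tool = Callable[[str], str]
Step = tuple[str, str]
Policy = Callable[[str, list[Step]], Step]


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call; returns a canned summary."""
    return "SUMMARY(" + prompt.strip() + ")"


def fixed_workflow(pdf_text: str, sheet: list[str]) -> None:
    """'A workflow with LLM calls as nodes': the developer fixes the steps
    and their order; the model only fills in one node."""
    summary = fake_llm(pdf_text)  # node 1: LLM summarizes the document
    sheet.append(summary)         # node 2: result is always written to the sheet


def agent_loop(goal: str, tools: dict[str, Tool], policy: Policy,
               max_steps: int = 5) -> list[Step]:
    """Agent-style loop: at each step the policy (normally the model)
    chooses the next tool and its argument, and decides when to stop."""
    history: list[Step] = []
    for _ in range(max_steps):  # hard cap so a confused policy cannot loop forever
        action, arg = policy(goal, history)
        if action == "finish":
            break
        history.append((action, tools[action](arg)))
    return history


def scripted_policy(goal: str, history: list[Step]) -> Step:
    """Hypothetical policy imitating the decisions a model might make."""
    if not history:
        return ("summarize", goal)
    if history[-1][0] == "summarize":
        return ("write_sheet", history[-1][1])
    return ("finish", "")


def make_sheet_tool(rows: list[str]) -> Tool:
    """Hypothetical 'spreadsheet' tool that appends a row to an in-memory list."""
    def write_sheet(text: str) -> str:
        rows.append(text)
        return "ok"
    return write_sheet


if __name__ == "__main__":
    sheet: list[str] = []
    fixed_workflow("Invoice from ACME", sheet)
    print(sheet)  # ['SUMMARY(Invoice from ACME)']

    rows: list[str] = []
    tools = {"summarize": fake_llm, "write_sheet": make_sheet_tool(rows)}
    print(agent_loop("Invoice from ACME", tools, scripted_policy))
    # [('summarize', 'SUMMARY(Invoice from ACME)'), ('write_sheet', 'ok')]
```

Both versions produce the same spreadsheet row here. The only structural difference is who owns the control flow. Whether a loop with a fixed tool list and a step cap already counts as "autonomous" is exactly the kind of boundary participants are arguing over.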

💬 AI Tools Enable "More Attempts," Not Just "Faster Speed"

A Hacker News comment posted 19 hours ago is gaining traction for how it captures hands-on experience with AI tools. In this account, the tools don't feel like "going faster" so much as "opening the possibility to try more without tedious grunt work." In visual work such as UI/UX design, the pattern is to use quick sketches to filter out bad ideas and refine the good ones.

4. AI Trends to Watch

AI Agent Monetization — The Key to Resolving the Bubble

As The Atlantic's analysis suggests, AI coding agents like Claude Code are beginning to deliver measurable revenue, and AI agent monetization models are emerging as the industry's next focal point. Which agent types generate ROI, and in which industries, is likely to become the decisive metric shaping future investment and development priorities.

AI Governance — Pre-Validation vs. Post-Launch Partnership

The White House's pivot toward "partnership" could influence global AI safety policy. University of Colorado computer scientist Ahmed Hamza argues that controlling models after deployment is practically infeasible. If that view holds, international debate over pre-deployment safety verification frameworks is likely to accelerate. The GCRI report similarly highlights uneven AI development speeds and emphasizes the need for systematic risk management.

Product and Engineering Organization Restructuring

As AI accelerates engineering, the bottleneck in product organizations is shifting away from implementation. Traditional, specification-heavy PM work is now being re-evaluated. More organizations are expected to experiment with new collaboration models and governance structures to keep pace with AI-accelerated development.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.