Today’s AI Model Benchmark Report — 2026-04-29

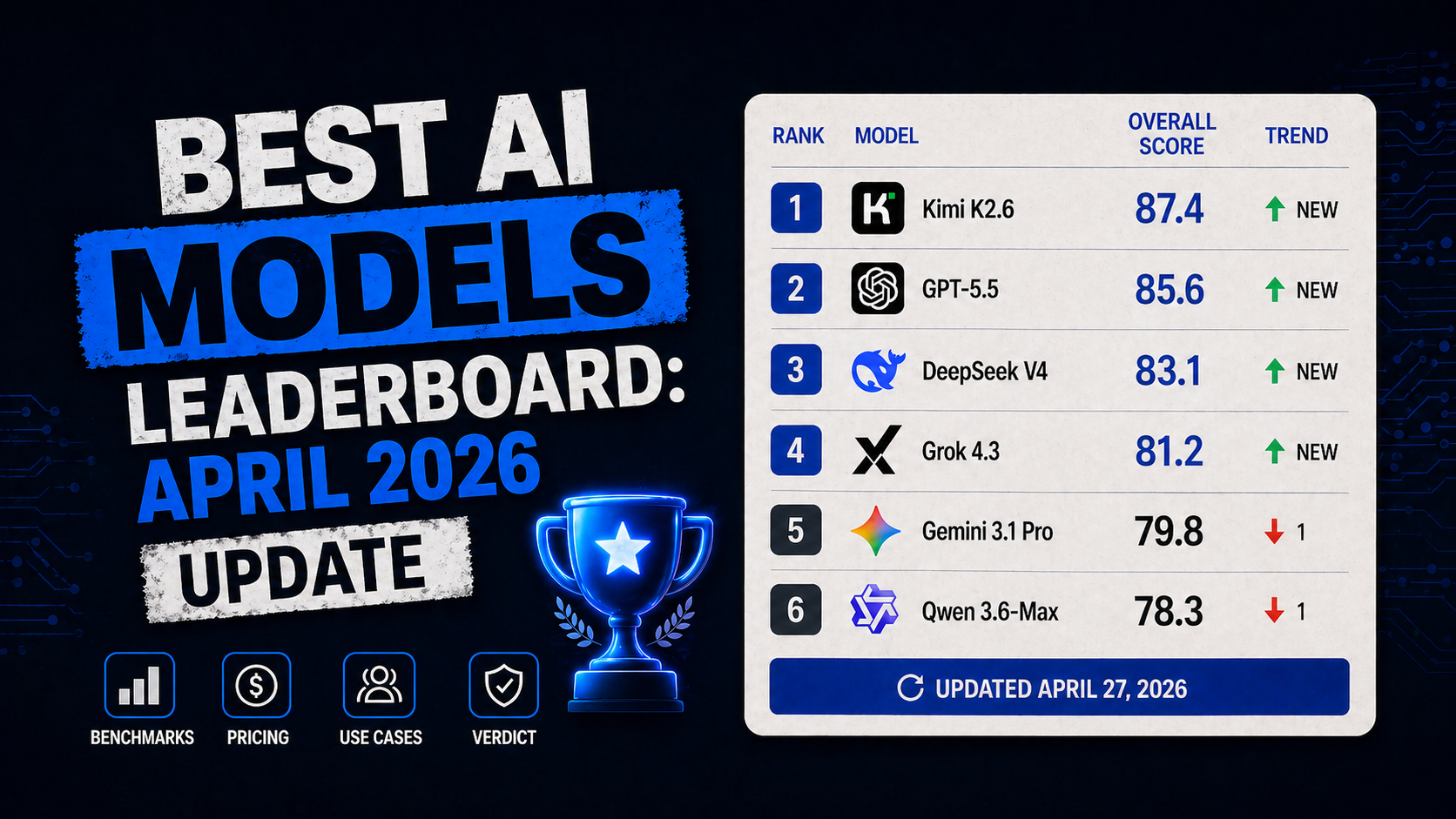

As of April 27, 2026, GPT-5.5 has topped major benchmarks, though it faces criticism for a 20% spike in API costs and persistent hallucination issues. Meanwhile, AIME 2026 has emerged as the new gold standard for math reasoning, where Qwen3.5-plus achieved an impressive 91.3%. According to the April 27 update from BuildFastWithAI, the market is heating up with the rapid, back-to-back releases of Kimi K2.6, GPT-5.5, DeepSeek V4, and Grok 4.3.

Today’s AI Model Benchmark Report — 2026-04-29

1. Chatbot Arena (LMSYS) Leaderboard Rankings

Real-time Elo score data from the LMSYS Chatbot Arena is currently unavailable. However, the AI model leaderboard updated by BuildFastWithAI as of April 27, 2026, presents the following standings:

| Model Name | Elo Score | Performance Change |

|---|---|---|

| GPT-5.5 | — | Returned to #1 in benchmarks |

| Kimi K2.6 | — | Recently released (within 5 days) |

| DeepSeek V4 | — | Recently released (within 5 days) |

| Grok 4.3 | — | Recently released (within 5 days) |

| Claude Opus 4.7 | — | Maintains high ranking |

| Gemini 3.1 Pro | — | Maintains high ranking |

Elo scores are marked as '—' as they could not be explicitly verified from the source.

2. Key Benchmark Model Analysis

① GPT-5.5 — Back on top, but burdened by costs and hallucinations

GPT-5.5 has reclaimed the #1 spot in major AI benchmarks, putting OpenAI back at the top. However, according to an analysis by the-decoder.com, the frequency of hallucinations remains high, and API costs have increased by 20% compared to previous versions. While analysts consider it the "best value for money" among proprietary models, the hallucination issue remains a significant limitation.

② Qwen3.5-plus — Achieving 91.3% on AIME 2026

In the field of mathematical reasoning, Qwen3.5-plus has reportedly scored 91.3% on the AIME 2026. Based on analysis from lxt.ai as of April 27, 2026, AIME 2025 and AIME 2026 have established themselves as the new standard benchmarks for frontier math evaluation, while GPT-5.3 Codex scored 96% on MATH-500. As GSM8K has become saturated, AIME 2026 is gaining attention as an indicator for assessing more complex, multi-step symbolic reasoning capabilities.

③ Kimi K2.6 / DeepSeek V4 / Grok 4.3 — The 5-day release race

According to the April 27 update from BuildFastWithAI, Kimi K2.6, DeepSeek V4, and Grok 4.3 were released in succession within just five days. The leaderboard is currently updating the benchmark scores, pricing, and recommended use cases for each model. This ultra-fast release competition is considered a hallmark of the 2026 AI frontier model market.

3. Benchmark Methodology and Additional Metrics

Changes in Math Reasoning Benchmarks: According to the latest lxt.ai analysis (April 27, 2026), the traditional GSM8K has become less discriminative due to saturation. MATH-500 offers better differentiation by requiring multi-step symbolic reasoning, and further, AIME 2025 and AIME 2026 have emerged as the current frontier standards.

IQ-based Evaluation: A report from Visual Capitalist on April 24 noted that TrackingAI released data ranking the "smartness" of 2026’s major chatbots and vision models using Norwegian Mensa IQ scores.

Android Bench: The Google Android developer team revealed an LLM evaluation methodology targeting the Android codebase. Unlike GitHub data, where 63% of repositories are applications, Android Bench focuses on libraries (58%), aiming to test an LLM’s ability to handle modularity and architectural patterns. The task complexity distribution consists of 46% small changes (under 27 lines), 33% medium changes (27–136 lines), and 21% large changes (over 136 lines).

4. Notable Performance Trends

GPT-5.5’s #1 Return and Cost Dilemma: While GPT-5.5 reclaimed the lead, the 20% API price hike and unresolved hallucination issues remain major risks for corporate adoption. This trade-off is a central issue in 2026 AI adoption discussions.

Ultra-fast Competition: The release of four major models (Kimi K2.6, GPT-5.5, DeepSeek V4, Grok 4.3) in just five days highlights the intense nature of the 2026 AI market. The BuildFastWithAI leaderboard continues to provide real-time updates to help users navigate model selection by price and purpose.

Sophistication of Math Benchmarks: The shift toward difficult evaluation metrics like AIME 2026 signals that industry standards are evolving to demand deeper reasoning capabilities beyond simple accuracy. The 91.3% score from Qwen3.5-plus is a key indicator that non-OpenAI models are rapidly advancing in mathematical reasoning.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.