Edge AI & IoT — 2026-04-24

Google dominated recent edge AI headlines with two announcements: its eighth-generation TPU family for cloud training and inference, and the production release of LiteRT-LM, an open-source on-device LLM runtime supporting Gemma, Llama, Phi-4, and Qwen across phones, desktops, the web, and IoT devices. On the connectivity front, Samsung SmartThings and IKEA delivered a hub-free Matter-over-Thread integration covering 25 devices, accelerating the practical rollout of the Matter standard.

New Silicon & Devices

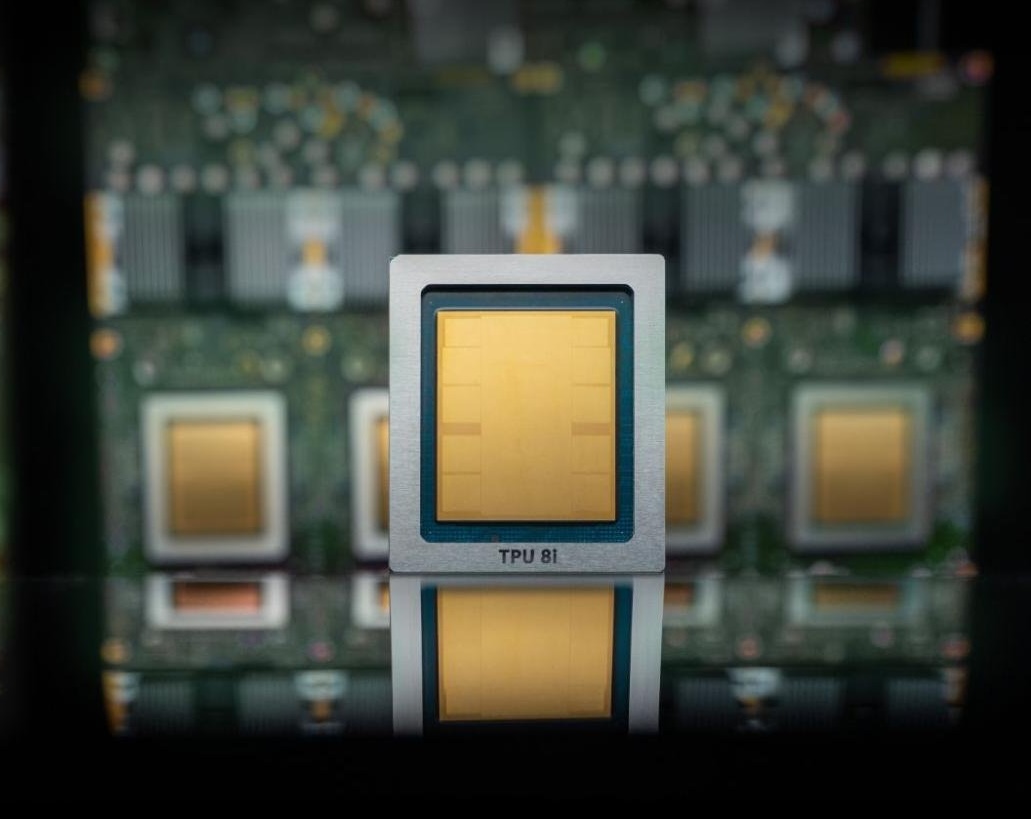

Google TPU v8 (Trillium + Ironside) — Google Cloud

- What it is: Eighth-generation dual-chip family for AI training (Trillium) and inference (Ironside), announced at Google Cloud Next 2026

- Headline specs: Large on-chip SRAM for inference; cloud-hosted; Google claims the chips are faster and cheaper than previous TPU generations

- Target use case: Data-center AI inference and training; agentic-era workloads

- Why it matters: While primarily a cloud chip, Google's inference-optimized Ironside chip embodies the same low-latency philosophy driving edge NPU design. The dual-chip approach signals a clear fork between training silicon and inference silicon — a pattern now filtering down to edge SoCs.

Origin Evolution NPU IP — Expedera

- What it is: Winner of the 2026 Edge AI & Vision "Best Edge AI Processor IP" award; silicon IP block for embedding into SoCs

- Headline specs: Not publicly disclosed; designed for scalable integration into custom data-center and edge SoCs

- Target use case: Custom chip design for edge inference, embedded vision, and data-center inference acceleration

- Why it matters: Recognition at the industry's leading IP awards underscores that purpose-built NPU IP, rather than a bolted-on general-purpose GPU, is increasingly the design of choice for edge AI SoCs. Chip designers licensing this IP can shorten time-to-market for edge devices.

Texas Instruments MCU NPU Integration — Texas Instruments

- What it is: Expanded MCU portfolio (MSPM0G5187 / AM13Ex family) integrating TinyEngine NPU blocks directly into microcontrollers

- Headline specs: Real-time control MCUs with an on-chip NPU; targets applications ranging from wearable health monitors to humanoid robots; uses the TinyEngine inference library

- Target use case: Wearables, industrial motors, physical AI in robotics

- Why it matters: Moving NPU capability into cost-optimized MCUs brings AI inference to the very large base of low-cost embedded endpoints. Combined with TI's software ecosystem, this lowers the barrier for OEMs shipping always-on keyword spotting, anomaly detection, and gesture recognition without a separate AI accelerator.

On-Device AI & Runtimes

LiteRT-LM — Google AI Edge

- Release: v1.0 production release, April 7–8, 2026; open-source (Apache 2.0); available on GitHub at google-ai-edge/LiteRT-LM

- Hardware targets: Android, iOS, Web, Desktop, IoT (Raspberry Pi); GPU and NPU acceleration; supports Gemma 4 E2B/E4B, Llama, Phi-4, Qwen, and more

- Benchmark / quality note: Delivers multi-modal (vision + audio) and function-calling support; Google describes it as a successor to TFLite delivering 1.4× faster GPU performance vs. the previous LiteRT baseline for LLM use cases

- Developer impact: Any developer shipping a local chatbot, on-device RAG pipeline, or agentic workflow now has a single cross-platform inference SDK. The CLI (litert-lm run --from-huggingface-repo=...) makes pulling quantized models from Hugging Face and running them on edge hardware a one-liner, a meaningful DX improvement over stitching together ONNX Runtime and separate quantization tooling.
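The DX and speed claims are easy to check against your own stack. Below is a minimal, hypothetical harness that times any command-line runner (the LiteRT-LM CLI, a llama.cpp binary, or an ONNX Runtime script) and reports on-disk artifact size. It only wraps Python's subprocess module and assumes no LiteRT-LM API; the command lists are placeholders you substitute.

```python
import os
import statistics
import subprocess
import sys
import time


def time_command(cmd: list[str], runs: int = 5, warmup: int = 1) -> dict[str, float]:
    """Run `cmd` repeatedly and return wall-clock latency stats in seconds.

    Warm-up runs execute first and are discarded, so one-time costs such as a
    first model download or a cold file cache do not skew the numbers. Each
    timed run still includes process start-up and model load, not just generation.
    """
    if runs < 1:
        raise ValueError("runs must be >= 1")
    for _ in range(warmup):
        subprocess.run(cmd, check=True, capture_output=True)
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        subprocess.run(cmd, check=True, capture_output=True)
        samples.append(time.perf_counter() - start)
    return {
        "median_s": statistics.median(samples),
        "min_s": min(samples),
        "max_s": max(samples),
    }


def artifact_size_mib(path: str) -> float:
    """On-disk size of a model file or app binary, in MiB."""
    return os.path.getsize(path) / (1024 * 1024)


if __name__ == "__main__":
    # Placeholder commands: replace with your real runners, e.g. the litert-lm
    # CLI pointed at your chosen model and your existing llama.cpp invocation.
    candidates = {
        "python-noop": [sys.executable, "-c", "pass"],
    }
    for name, cmd in candidates.items():
        print(name, time_command(cmd, runs=3))
```

Reporting the median rather than the mean keeps a single slow run (thermal throttling, background work) from dominating the comparison.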

SemiEngineering: "Can Edge AI Keep Up?" — Architecture Analysis

- Release: Published April 23, 2026; editorial analysis, not a product release

- Hardware targets: Broad — ARM, RISC-V, custom NPU designs discussed

- Benchmark / quality note: Expert commentary explores whether silicon design cycles (2–3 years) can keep pace with model architecture innovation (sub-6-month cycles); highlights adaptability vs. raw TOPS efficiency tradeoffs

- Developer impact: Product leads evaluating edge AI silicon should read this before locking in an SoC. The key insight: chips optimized purely for transformer attention may already be misaligned with rapidly evolving alternatives such as state-space models like Mamba and hybrid recurrence/attention designs like RecurrentGemma.
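To make the attention-versus-SSM mismatch concrete, here is a toy, pure-Python sketch of per-token decode work for the two layer types. It is a simplification under stated assumptions: single head, a diagonal state matrix, scalar input, no gating or input-dependent selectivity (so it is not Mamba itself), and made-up dimensions. The point is the shape of the work: attention reads a key/value cache that grows with every token, while a state-space layer updates a fixed-size state with elementwise multiply-adds.

```python
import math


def attention_step(q, k, v, kv_cache):
    """One decode step of single-head softmax attention.

    Appends (k, v) to the cache, then attends over every cached position,
    so work and memory grow linearly with sequence length.
    """
    kv_cache.append((k, v))
    d = len(q)
    scores = [sum(qi * ki for qi, ki in zip(q, key)) / math.sqrt(d) for key, _ in kv_cache]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]  # numerically stable softmax
    total = sum(weights)
    out = [0.0] * len(v)
    for w, (_, val) in zip(weights, kv_cache):
        for i, vi in enumerate(val):
            out[i] += (w / total) * vi
    return out


def ssm_step(x, state, a, b, c):
    """One step of a diagonal linear state-space recurrence.

    h_t = a * h_{t-1} + b * x_t (elementwise), y_t = sum(c * h_t).
    The state has a fixed size no matter how many tokens came before.
    """
    for i in range(len(state)):
        state[i] = a[i] * state[i] + b[i] * x
    return sum(ci * hi for ci, hi in zip(c, state))


if __name__ == "__main__":
    d, n, steps = 4, 8, 16
    cache = []
    state = [0.0] * n
    a, b, c = [0.9] * n, [0.1] * n, [1.0] * n
    for t in range(steps):
        vec = [float(t)] * d
        attention_step(vec, vec, vec, cache)
        ssm_step(float(t), state, a, b, c)
    print("attention cache entries:", len(cache))  # grows with steps
    print("ssm state size:", len(state))           # stays at n
```

With these toy settings the cache ends with 16 entries while the state stays at 8 values. On real hardware that difference shows up as growing cache reads versus a small recurrent update, which a datapath specialized for attention matmuls may not accelerate well.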

IoT Platforms & Standards

Matter + SmartThings × IKEA Direct Integration

- Update: Samsung SmartThings announced April 21, 2026 that 25 IKEA Matter-over-Thread devices now connect directly to SmartThings without any IKEA hub. Samsung TVs already embed Thread Border Routers, acting as the connectivity fabric. IKEA bulbs start at $5.99.

- Breaking / compatibility: No separate IKEA Dirigera hub required; existing SmartThings users with a Samsung TV (2022+) gain instant compatibility. IKEA's older Zigbee-based TRÅDFRI lineup is not covered.

- Ecosystem effect: This is one of the first mass-market, hub-free Matter-over-Thread deployments. It validates the Matter architecture for budget smart home devices and puts pressure on Amazon (Alexa) and Apple (HomePod) to accelerate comparable direct Thread integrations. ZDNET and The Next Web both flagged it as a landmark interoperability milestone this week.
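Samsung's TVs can serve as the connectivity fabric because Thread is an IPv6 mesh. A border router sits between the low-power 802.15.4 Thread network and the home's Wi-Fi/Ethernet network and forwards IP traffic between them. Below is a heavily simplified, hypothetical sketch of that forwarding decision using made-up ULA prefixes. Real border routers (OpenThread Border Router, for instance) also advertise prefixes, proxy service discovery, and can provide NAT64. None of that is modeled here.

```python
import ipaddress

# Hypothetical example prefixes (ULA space); a real border router learns or
# advertises these rather than hard-coding them.
THREAD_PREFIXES = [ipaddress.ip_network("fd11:22::/64")]
INFRA_PREFIXES = [ipaddress.ip_network("fd00:abcd::/64")]


def route(dst: str) -> str:
    """Decide which side a toy border router would forward `dst` to."""
    addr = ipaddress.ip_address(dst)
    if any(addr in net for net in THREAD_PREFIXES):
        return "thread"          # deliver into the 802.15.4 mesh
    if any(addr in net for net in INFRA_PREFIXES):
        return "infrastructure"  # deliver onto the Wi-Fi/Ethernet link
    return "upstream"            # everything else goes to the home router


if __name__ == "__main__":
    for dst in ["fd11:22::1", "fd00:abcd::10", "2001:db8::1"]:
        print(dst, "->", route(dst))
```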

Matter vs Thread vs Zigbee — Ecosystem Reality Check (ZDNET)

- Update: ZDNET published an April 21, 2026 comparison piece concluding that Thread + Matter is the strategic forward direction, while Zigbee remains the most pragmatic choice for existing installations. The piece follows IKEA's public pivot away from Zigbee toward Matter-over-Thread (discussed above).

- Breaking / compatibility: Zigbee devices will not automatically become Matter-compatible; bridging hardware is required. Thread Border Routers (built into recent Apple HomePods, Samsung TVs, and Google Nest Hubs) are the key gating infrastructure.

- Ecosystem effect: This framing crystallizes the market split: enterprise/industrial IoT continues on Zigbee or proprietary LPWANs, while the consumer smart home shifts toward Matter. Device vendors still supporting only Zigbee face increasing pressure to add Matter support via OTA firmware updates or to ship next-gen hardware with Thread radios.
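Zigbee is not an IP protocol, so a Zigbee device cannot join a Matter fabric directly. Bridging is still tractable because Matter's data model reuses many Zigbee Cluster Library cluster IDs: On/Off is 0x0006, Level Control 0x0008, and Color Control 0x0300 in both. A bridge can therefore re-expose each Zigbee device as a Matter endpoint. The sketch below models only that mapping step, not commissioning, attribute translation, or the radio side. Several details are assumptions: the endpoint numbering (0 for the root node, 1 for an aggregator, bridged devices from 2 upward) follows a common bridge layout, and the device names and the set of clusters this toy bridge translates are hypothetical.

```python
from dataclasses import dataclass, field

# Cluster IDs that Matter inherited from the Zigbee Cluster Library.
ON_OFF = 0x0006
LEVEL_CONTROL = 0x0008
COLOR_CONTROL = 0x0300

# Hypothetical: the clusters this toy bridge knows how to translate.
BRIDGEABLE = {ON_OFF, LEVEL_CONTROL, COLOR_CONTROL}

FIRST_DYNAMIC_ENDPOINT = 2  # assumption: 0 = root node, 1 = aggregator


@dataclass
class ZigbeeDevice:
    name: str
    clusters: set[int]


@dataclass
class BridgedEndpoint:
    endpoint_id: int
    name: str
    clusters: set[int] = field(default_factory=set)


def build_endpoints(devices: list[ZigbeeDevice]) -> list[BridgedEndpoint]:
    """Expose each Zigbee device with at least one bridgeable cluster as a Matter endpoint."""
    endpoints = []
    next_id = FIRST_DYNAMIC_ENDPOINT
    for dev in devices:
        supported = dev.clusters & BRIDGEABLE
        if not supported:
            continue  # nothing this bridge can translate; device stays Zigbee-only
        endpoints.append(BridgedEndpoint(next_id, dev.name, supported))
        next_id += 1
    return endpoints


if __name__ == "__main__":
    devices = [
        ZigbeeDevice("hallway bulb", {ON_OFF, LEVEL_CONTROL}),
        ZigbeeDevice("odd sensor", {0xFC00}),  # hypothetical manufacturer-specific cluster
        ZigbeeDevice("desk lamp", {ON_OFF, LEVEL_CONTROL, COLOR_CONTROL}),
    ]
    for ep in build_endpoints(devices):
        print(ep.endpoint_id, ep.name, [hex(c) for c in sorted(ep.clusters)])
```

Running it assigns endpoints 2 and 3 to the two lights. The device exposing only an unsupported cluster gets no endpoint, which is the "not automatically Matter-compatible" case in miniature.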

Industry & Deployment Signals

- Google AI Edge (LiteRT-LM launch): Google officially productionized its on-device LLM framework on April 7–8, 2026, signaling that the search giant is treating edge inference as a first-class deployment target alongside cloud. The framework's IoT-tier support (tested on Raspberry Pi) indicates Google is actively targeting sub-$100 gateway hardware, not just flagship phones.

- Edge AI chip market momentum: A new market report published April 23, 2026 cites the global AI chip market at USD 46.57 billion in 2025, with edge AI devices identified as one of the fastest-growing segments. The report notes that while data centers dominate current spend, edge AI device shipments are accelerating rapidly across automotive, healthcare, and consumer electronics verticals.

Community & Open Source

- google-ai-edge/LiteRT-LM (GitHub): Released April 7–8, 2026; already trending, with cross-platform LLM deployment examples including Raspberry Pi + NPU acceleration. The repo includes a CLI, Python bindings, and Hugging Face Hub integration for pulling pre-quantized LiteRT-LM model files. Traction is high given Google's brand weight and the fact that it replaces fragmented TFLite + MediaPipe pipelines.

- Google AI Edge Gallery (experimental app): Companion showcase application for LiteRT-LM that demonstrates on-device generative AI running fully offline. It is open to community contributors and serves as a living demo of vision, audio, and text LLM capabilities on consumer Android hardware, making it a useful reference architecture for embedded AI developers.

Analysis — Trends to Watch

- Inference is eating the edge silicon roadmap. Google's TPU v8 Ironside chip, Expedera's award-winning NPU IP, and TI's MCU-integrated NPU all point in the same direction: the dominant design challenge has shifted from "can we fit a model?" to "can our silicon keep up with model architecture changes?" SemiEngineering's April 23 analysis explicitly names this as the critical tension for the next 18–24 months.

- Matter is crossing the chasm, with Thread as the delivery vehicle. The Samsung × IKEA hub-free integration at the $5.99 price point is the kind of mainstream moment Matter has needed. When consumers can buy a smart bulb for six dollars and have it "just work" via a TV they already own, the addressable market expands by an order of magnitude. Watch for Amazon and Apple counter-moves in Q2–Q3 2026.

- LiteRT-LM consolidates the on-device LLM fragmentation problem. Until this release, developers shipping local LLMs on edge hardware juggled ONNX Runtime, llama.cpp, MediaPipe LLM, and ExecuTorch. Google's unified cross-platform SDK with Hugging Face Hub integration removes a major integration burden. Expect the toolchain to cannibalize mindshare from independent runtimes within 6–12 months, especially among Android-first teams.
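The cycle-time tension behind the first trend can be made explicit with the figures quoted in the SemiEngineering item: silicon design cycles of 2–3 years against model-architecture cycles under 6 months. A back-of-the-envelope ratio, ignoring how long the chip then stays in the field:

```latex
N_{\text{gen}} = \frac{T_{\text{silicon}}}{T_{\text{model}}},
\qquad T_{\text{model}} < 6\ \text{months}
\;\Longrightarrow\;
N_{\text{gen}} > \frac{24\ \text{months}}{6\ \text{months}} = 4
\ \text{ to }\
\frac{36\ \text{months}}{6\ \text{months}} = 6
```

So a chip specified today may see more than four to six model-architecture generations before it ships, which is the gap the SemiEngineering analysis is pointing at.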

Reader Action Items

- Evaluate LiteRT-LM for your next on-device LLM feature: If you are building Android, iOS, or Raspberry Pi applications, try litert-lm run --from-huggingface-repo=litert-community/gemma-4-E2B-it-litert-lm against your current llama.cpp or ONNX stack and benchmark latency and binary size before your next sprint planning.

- Audit your smart home device roadmap for Thread Border Router support: If you are shipping a consumer IoT hub, TV, or router in H2 2026, verify whether your SoC already supports Thread. Samsung TVs (2022+) already act as Thread Border Routers, and this week's IKEA integration turns that into a competitive baseline. Zigbee-only devices without a Matter bridge story face a shrinking retail shelf life.

- Re-evaluate edge SoC selection against model lifecycle risk: SemiEngineering's April 23 analysis argues that chips optimized for today's transformer attention patterns may underperform on hybrid architectures arriving in 12–18 months. Before final SoC sign-off, confirm that your NPU vendor offers a software-updatable kernel pathway or enough programmable compute headroom to handle architectural diversity.
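One way to make the "architectural diversity" check concrete during SoC evaluation is to compare a model's operator list against the operators the vendor's NPU toolchain runs natively, then see what falls back to the CPU. Below is a minimal sketch. The operator names (including selective_scan as a stand-in for an SSM kernel), the supported set, and the choice to count op instances rather than weight them by compute are all illustrative assumptions, not any vendor's actual coverage list. In practice you would take both lists from the vendor's compiler report where the tooling exposes them, and weight by FLOPs or measured latency.

```python
from collections import Counter


def npu_coverage(model_ops: list[str], npu_supported: set[str]) -> tuple[float, Counter]:
    """Return (fraction of op instances that run on the NPU, Counter of fallback ops)."""
    if not model_ops:
        raise ValueError("model_ops is empty")
    fallback = Counter(op for op in model_ops if op not in npu_supported)
    covered = len(model_ops) - sum(fallback.values())
    return covered / len(model_ops), fallback


if __name__ == "__main__":
    # Hypothetical op lists for illustration only.
    npu_ops = {"matmul", "softmax", "add", "mul", "layernorm", "gelu"}
    transformer_block = ["layernorm", "matmul", "softmax", "matmul", "add",
                         "layernorm", "matmul", "gelu", "matmul", "add"]
    hybrid_block = ["layernorm", "matmul", "conv1d", "selective_scan", "mul",
                    "add", "layernorm", "matmul", "gelu", "matmul", "add"]
    for name, ops in [("transformer", transformer_block), ("hybrid", hybrid_block)]:
        frac, fb = npu_coverage(ops, npu_ops)
        print(f"{name}: {frac:.0%} on NPU, fallback={dict(fb)}")
```

With these made-up lists the transformer block maps entirely to the NPU, while the hybrid block sends conv1d and selective_scan down the CPU fallback path.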

What to Watch Next

- Google I/O 2026 (expected May): Full LiteRT-LM developer toolchain announcements, potential Gemma 4 on-device model optimizations, and Android NPU API updates are likely to be demoed. Watch for expanded IoT-tier support beyond Raspberry Pi.

- Next Matter specification release: Matter 1.4, which added energy management device types such as solar inverters, home batteries, and heat pumps, was already published by the Connectivity Standards Alliance in November 2024 (EV charger support arrived earlier, in Matter 1.3). Watch the CSA mailing list and member announcements for the timing and scope of the next specification update.

- COMPUTEX 2026 (May 20–23, Taipei): MediaTek, Qualcomm, and AMD are all expected to preview next-generation edge AI SoCs with NPU upgrades. This is the most important silicon preview event between now and Hot Chips in August.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.