Legal Tech Digest — 2026-04-26

Anthropic and elite law firm Freshfields struck a partnership to jointly develop AI legal tools, while a state appeals court issued a landmark ruling requiring lawyers to disclose AI-caused errors in filings. Meanwhile, Sullivan & Cromwell became the latest prestigious firm to apologize for AI "hallucinations" in a court filing, underscoring the widening gap between AI adoption and accountability in the legal profession.

Top Stories

Anthropic Partners with Freshfields to Build AI Legal Tools

- What happened: Anthropic and global "magic circle" law firm Freshfields signed a formal agreement to jointly develop new AI tools for legal services. The deal gives Anthropic access to Freshfields' deep legal expertise to build products that can be sold to other law firms and legal teams.

- Why it matters: This marks a notable shift. Rather than law firms simply licensing AI platforms, AI labs are now treating top-tier law firms as co-development partners. Because Anthropic plans to sell the resulting tools widely across the legal market, they could eventually reshape how Freshfields' competitors operate.

- Key details: The Financial Times reported that the arrangement is part of a push by Anthropic into the legal vertical. Freshfields will contribute domain expertise that could help shape how Anthropic's models handle real-world legal workflows, a strategy with major implications for legal AI accuracy and adoption.

Appeals Court: Lawyers Must Disclose AI-Caused Errors

- What happened: A state appeals court ruled this week that lawyers have an obligation to be candid with judges when generative AI tools produce errors in court filings — adding judicial weight to a growing body of guidance targeting AI misuse in the courtroom.

- Why it matters: Within the court's jurisdiction, this is binding precedent, not just guidance. Lawyers who let AI-generated errors stand without disclosure now face direct legal and ethical consequences. The ruling signals that courts are moving past tolerance and toward enforcement.

- Key details: The ruling reinforces existing professional conduct duties of candor to the tribunal and applies them specifically to AI. It comes amid a wave of similar judicial concern, with AI-related sanctions rising steadily. Court observers note that courts in other jurisdictions may cite it as persuasive authority.

Sullivan & Cromwell Apologizes for AI Hallucinations in Federal Court Filing

- What happened: Premier Wall Street law firm Sullivan & Cromwell apologized to a federal judge after submitting a court filing containing inaccurate citations and other errors generated by artificial intelligence.

- Why it matters: Sullivan & Cromwell is among the most prestigious U.S. law firms — its AI stumble sends a stark signal that even the most well-resourced firms are vulnerable to AI hallucination risks if internal review processes aren't airtight. It also adds momentum to calls for mandatory AI disclosure rules.

- Key details: The firm acknowledged the errors were AI-generated. The apology came just days before the appeals court ruling requiring disclosure of AI errors — making this one of the most closely watched AI-in-court incidents of the month.

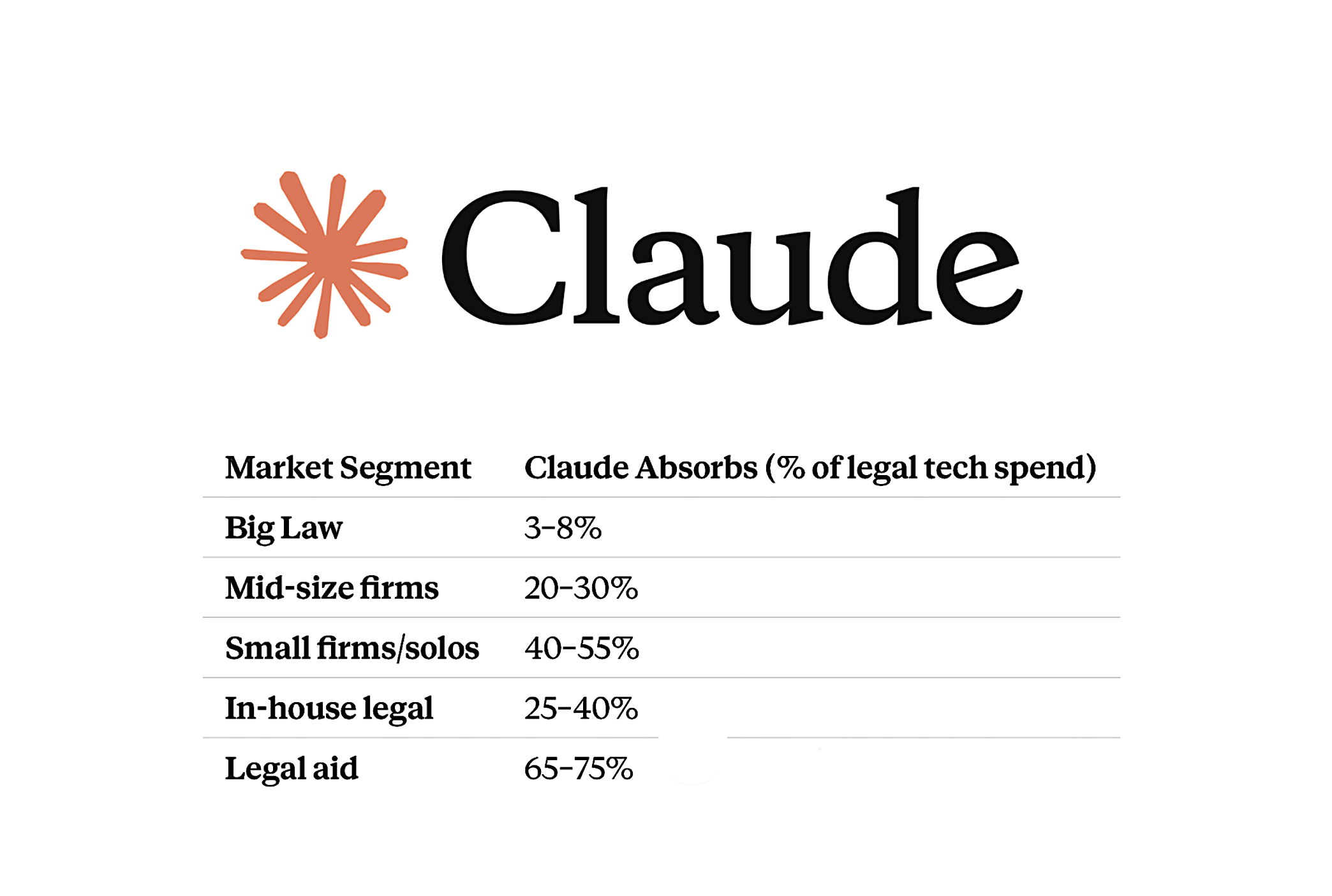

Claude Could Absorb Up to 40% of In-House Legal Tech Spend, Says Anthropic

- What happened: Anthropic claims that its Claude AI system could absorb between 25% and 40% of in-house legal tech spend over the next three to five years, provided legal teams adopt Claude's Word add-in and other custom tooling.

- Why it matters: The claim, reported by Artificial Lawyer, is a bold competitive statement that positions Claude as a horizontal platform, not just a research or drafting tool. It puts Claude in direct competition with established legal-specific vendors across contract management, research, and workflow automation.

- Key details: The projections hinge on adoption of Claude's Microsoft Word integration and customized enterprise deployments. Legal operations leaders should assess whether current vendor contracts align with these emerging consolidation dynamics.

Abstract Launches AI-Powered Legislative Intelligence for Law Firms

- What happened: New York-based legal tech startup Abstract has launched a platform offering AI-powered legislative and regulatory monitoring for law firms and corporate legal departments.

- Why it matters: Legislative tracking has historically been time-intensive and error-prone. Abstract's entry signals growing demand for real-time regulatory intelligence tools as companies navigate increasingly complex multi-jurisdictional environments.

- Key details: Abstract targets both law firm practice groups and in-house legal teams that need to stay current on rapidly shifting legislation. The tool aims to surface relevant regulatory developments automatically, reducing manual monitoring burdens.

New Tools & Product Launches

- Abstract: New York-based startup offering AI-powered legislative and regulatory monitoring for law firms and corporate legal departments, designed to automate tracking of new laws and regulatory changes across jurisdictions.

- Claude for Legal (Anthropic + Freshfields): A suite of AI legal tools being jointly developed by Anthropic and Freshfields, slated for commercial sale to other law firms and legal departments following the April 23 partnership announcement.

- Claude Word Add-In (Legal Edition): Anthropic's Microsoft Word integration, cited as a core element of its projected ability to capture 25–40% of in-house legal tech spending over three to five years, enabling AI assistance directly inside familiar document workflows.

Courts & Regulation

- U.S. State Appeals Court (ruling April 23): A state appeals court ruled that lawyers must affirmatively disclose to judges when generative AI causes errors in court filings, framing this as part of the existing duty of candor to the tribunal. The ruling is binding precedent within its jurisdiction and may be cited as persuasive authority elsewhere. In practice, firms will need AI verification workflows to avoid sanctions for silently submitting AI-generated errors.

- DOJ + xAI vs. Colorado AI Antidiscrimination Law: The U.S. Department of Justice joined Elon Musk's xAI in a federal lawsuit seeking to block Colorado's AI antidiscrimination law, arguing it is unconstitutional and threatens U.S. AI leadership. The case has direct implications for legal tech vendors operating AI-assisted hiring, screening, or decisioning tools, as it could set a precedent limiting states' ability to regulate AI outcomes.

Industry Moves

- Anthropic + Freshfields: The two organizations signed a formal co-development agreement to jointly build AI legal tools, with Anthropic planning to commercialize the resulting products for sale to other law firms and corporate legal departments. Terms were not disclosed.

- Law.com / AI Business Model Analysis: Law.com's international edition published a deep-dive piece (April 24) examining why AI stalls inside law firms, pointing to lawyer psychology and internal adoption resistance, rather than technology gaps, as the primary barrier. The piece follows research from the Thomson Reuters Institute showing that nearly half of legal departments now report department-wide AI adoption.

What to Watch Next Week

- Legal Innovators Europe (Paris, June 24–25): The conference is two months away but now in its agenda-building phase, and it is expected to draw significant attention to the Freshfields-Anthropic model and whether European law firms will follow with similar partnerships. Watch for pre-conference announcements from major EU firms.

- Colorado AI Antidiscrimination Law Proceedings: The DOJ/xAI federal court challenge is in early stages — any preliminary injunction motion or hearing dates will be closely watched by legal tech vendors whose products touch AI-assisted decisions in employment or other regulated domains.

- Continued AI Disclosure Fallout: Following the Sullivan & Cromwell apology (April 21) and the appeals court ruling (April 23), expect bar association ethics committees and additional courts to issue supplemental guidance or standing orders on AI use disclosures — possibly as early as next week.

Reader Action Items

- Audit your AI verification protocols now: The appeals court ruling requiring disclosure of AI errors means that "we used AI and didn't check" is no longer a defensible posture. Firms should immediately review whether their AI workflows include mandatory human verification before any filing is submitted to a court.

- Reassess your legal tech vendor stack: With Anthropic explicitly targeting 25–40% of in-house legal tech spend and the Freshfields partnership accelerating Claude's legal-domain capabilities, in-house legal ops teams should evaluate whether current contracts with point-solution vendors still offer competitive value against emerging integrated AI platforms.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.