X/Twitter AI Pulse — 2026-05-02

This week's AI discourse has been dominated by explosive funding news around Anthropic — reportedly in talks to raise at a staggering $900 billion valuation — alongside the Pentagon signing AI deals with major tech players while leaving Anthropic out. Meanwhile, debates about AI agents crossing capability thresholds, a lawsuit from families blaming OpenAI for a school shooting, and a dark-money campaign stoking anti-China AI fears have all kept X/Twitter buzzing.

X/Twitter AI Pulse — 2026-05-02

Top AI Discussions This Week

Pentagon Signs AI Deals With OpenAI, Google, Microsoft, Amazon — Leaves Out Anthropic

- Who's talking: AI industry observers, national security commentators, tech journalists

- What happened: The U.S. Department of Defense announced agreements with OpenAI, Google, Microsoft, and Amazon to bring AI tools into its most sensitive classified networks — notably excluding Anthropic from this round of contracts.

- Key takes: The move sparked debate about whether Anthropic's reputation for safety-focused, cautious AI development is a liability in defense contexts, or whether the exclusion is temporary. Some on X noted the irony of Anthropic receiving massive Google investment while being shut out of Pentagon deals.

- Why it matters: Defense AI contracts represent a massive and growing revenue stream. Being excluded signals a potential strategic disadvantage for Anthropic even as its commercial valuation soars.

OpenAI Cleared for AWS and Google Cloud; Enterprise AI Agents Hijacked by Hidden Web Commands

- Who's talking: AI security researchers, enterprise AI practitioners, developer communities

- What happened: According to NeuralBuddies' May 1, 2026 recap, OpenAI received clearance to operate on both AWS and Google Cloud infrastructure. Separately, researchers discovered that hidden web-page commands can hijack enterprise AI agents — a significant security vulnerability for businesses deploying autonomous agents.

- Key takes: The agent hijacking story generated significant alarm on X, with security researchers calling it a serious enterprise risk. One widely shared framing: "Your AI agent can be socially engineered by a webpage it visits." The OpenAI cloud clearance was seen as a competitive moat-builder.

- Why it matters: As agentic AI deployment accelerates across enterprises, the attack surface expands dramatically. This vulnerability could slow enterprise adoption or trigger a wave of AI security startups.

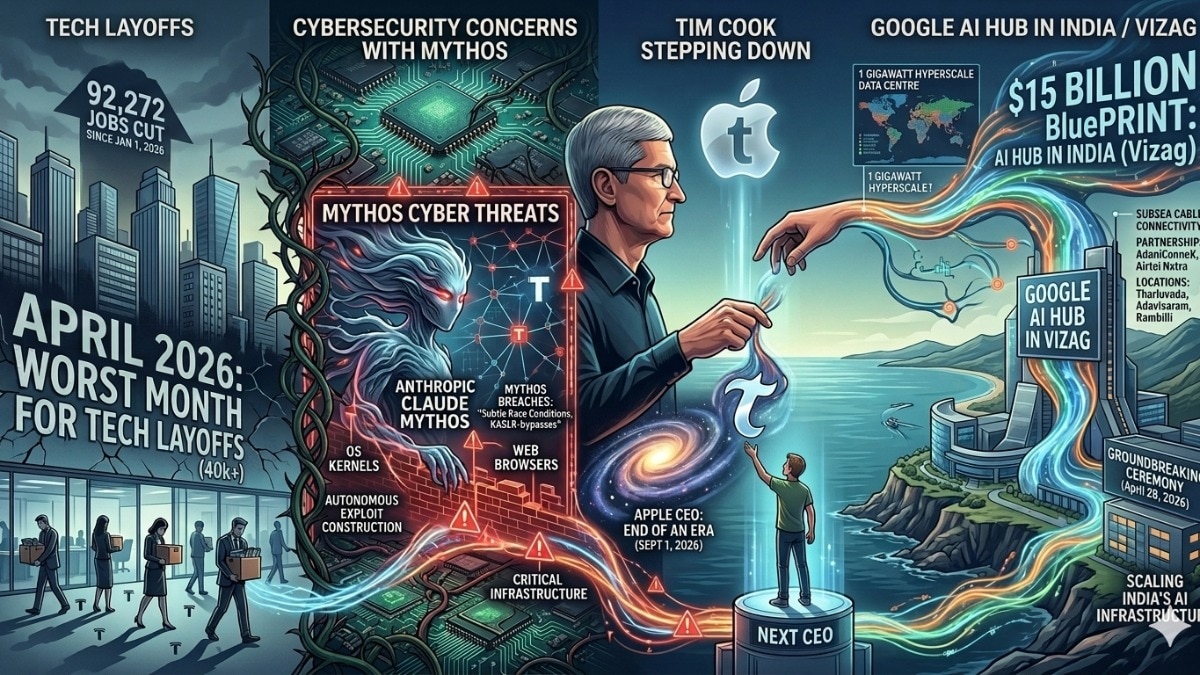

April 2026 Tech Recap: Layoffs, Leadership Shake-Ups, and AI Anxiety

- Who's talking: Tech industry analysts, business journalists, workforce commentators

- What happened: BusinessToday published a sweeping recap of April 2026, noting thousands of tech layoffs, rising concerns about powerful AI systems, and multiple leadership overhauls across major tech companies — all attributed to the accelerating pace of AI transformation.

- Key takes: The piece crystallized a growing X/Twitter narrative: AI is not just disrupting industries, it's destabilizing the very companies building it. Debates about whether AI-driven layoffs are temporary displacement or permanent structural unemployment ran hot.

- Why it matters: April 2026 may come to be seen as a turning point where AI's labor market impact became undeniable at the enterprise level, feeding both policy debates and public anxiety.

Hot Debates & Controversies

Dark-Money Campaign Paying Influencers to Fear-Monger About Chinese AI

- Side A: Build American AI — a nonprofit linked to a super PAC bankrolled by executives at OpenAI, Andreessen Horowitz, and Palantir — is funding TikTok influencers to spread pro-American AI messaging and stoke fears about China's AI capabilities. Proponents argue this is legitimate national interest advocacy.

- Side B: Critics, including Wired's investigative report, call this a "dark-money" influence campaign that uses fear and disinformation to shape public opinion and AI policy, rather than honest debate. Many on X expressed concern about tech billionaires funding political influence operations while claiming to champion open discourse.

- Current status: The story broke within the last 24 hours and is escalating rapidly. Calls for transparency about funding and messaging are growing louder, and the story is likely to attract Congressional attention given its intersection of AI, national security, and campaign finance.

Families Sue OpenAI Over School Shooting — AI Liability Debate Explodes

- Side A: Seven families have filed suit against OpenAI, alleging the company bears responsibility connected to a school shooting. This represents a new frontier in AI liability — holding AI companies accountable for downstream harms caused by their products.

- Side B: OpenAI and its defenders argue that holding an AI company liable for the actions of a user sets a dangerous precedent that would chill innovation, and that legal responsibility lies with individuals, not tool-makers.

- Current status: The lawsuit is newly filed and generating intense debate. Legal scholars on X are divided on whether existing product liability frameworks can apply to AI systems, and the case is being watched as a potential landmark.

Notable AI Announcements

-

Anthropic: Reportedly in talks with investors to raise a new $50B round at a valuation of $850–$900 billion — which would exceed OpenAI's valuation — with multiple preemptive offers received. Community reaction: Shock mixed with skepticism; some called it a bubble signal, others a rational bet on Claude's enterprise momentum.

-

Ineffable Intelligence (ex-DeepMind): A stealth AI startup founded by a former Google DeepMind researcher raised a record $1.1 billion in seed funding at a $5.1 billion valuation, backed by NVIDIA and Google, to pursue superintelligence research. Community reaction: Widely shared with a mix of awe and unease — "seed round, $1.1B, superintelligence goal" became a shorthand for how unusual this AI moment is.

-

Big Tech (Google, Amazon, Microsoft, Meta): Combined Q1 2026 capital expenditures on AI data centers exceeded $130 billion, with all four companies signaling even larger spend ahead. Community reaction: "AI spending sets a record, with no end in sight" (NYT) became one of the most-shared headlines; debate about whether this is rational investment or irrational exuberance dominated financial X.

Thought Leader Spotlight

@TheZvi on AI Capability Progress and the AGI Debate

- Key quote/insight: Zvi Mowshowitz pushed back hard on narratives that AI progress has "hit a wall," writing: "Look at GPT-5, look at what we had available in 2022, and tell me we 'hit a wall.'" He also called out a "subtle goalpost move" by AGI skeptics who reframe "AGI by 2027" to mean AGI in 2026 when predictions don't pan out.

- Context: The post was prompted by ongoing debate about whether scaling laws are exhausted and whether AGI timelines are realistic — a perennial X argument that has intensified following GPT-5's release and the rise of long-horizon coding agents.

- Community reaction: The thread sparked fierce debate between AI accelerationists and skeptics. Many agreed with Zvi's framing about goalpost-moving; others argued capability gains don't translate to AGI in any meaningful sense.

@gregisenberg on AI's Societal Inflection Points

- Key quote/insight: Greg Isenberg posted a viral thread of predictions keeping him up at night, including: "AI girlfriends/boyfriends will become a $50B market and nobody will talk about it publicly. But check the app store rankings at 2am." He also warned: "Someone will lose $20M+ because their AI agent got socially engineered by another AI agent."

- Context: The post was a broader meditation on how AI is reshaping human behavior, the economy, and social dynamics in ways that aren't being discussed in mainstream discourse — timed to coincide with the wave of agentic AI deployment news.

- Community reaction: The "AI agent socially engineered by another AI agent" prediction resonated especially strongly given the same week's news about hidden web commands hijacking enterprise agents. Many called it prescient.

What to Watch Next Week

- Anthropic funding round: Will the $900B valuation round close, and who are the lead investors? The answer will set a benchmark for the entire AI sector's valuation expectations heading into mid-2026.

- Pentagon AI expansion: Whether Anthropic is brought into subsequent DoD AI contracting rounds — or permanently sidelined — will signal how the U.S. government views safety-focused AI labs in national security contexts.

- AI agent security: Following this week's revelation about hidden web-page commands hijacking enterprise agents, expect a rapid response from major AI providers and likely the first formal security advisories or patches targeting agentic AI deployments.

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.