AI Benchmarks & Leaderboard — 2026-05-12

The week of May 5–12, 2026 sees GPT-5.5 and Claude Opus 4.7 holding firm at the top of intelligence leaderboards, while the open-source field heats up with Llama 4, Qwen 3.5, DeepSeek V4, Gemma 4, and Mistral Medium 3.5 shipping within weeks of each other. Meanwhile, a new analysis argues that most published AI agent benchmark scores are gameable, calling into question the real-world utility scores published by major labs. Practitioners navigating the May 2026 model landscape face both an embarrassment of riches and a growing crisis of benchmark credibility.

New Model Releases & Updates

GPT-5.5 (xhigh / high) by OpenAI

- Type: Closed-source; two inference-effort tiers ("xhigh" and "high")

- Key benchmarks: Ranks #1 and #2 on the Artificial Analysis Intelligence Index (scores 60 and 59 respectively)

- vs. Previous best: Leads the overall leaderboard above Claude Opus 4.7 (Adaptive Reasoning, Max Effort, score 57) and Gemini 3.1 Pro Preview (score 57)

- What's notable: The gap between the two GPT-5.5 tiers is only one point, suggesting diminishing returns at the highest inference budget. Mercury 2 remains fastest at 735 tokens/second, well ahead of GPT-5.5 variants on throughput.

Best Open-Weight LLMs Roundup (Llama 4, Qwen 3.5, DeepSeek V4, Gemma 4, Mistral Medium 3.5)

- Type: Open-source/open-weight; five frontier-class models shipping within ~30 days

- Key benchmarks: Head-to-head comparison across coding, reasoning, and multimodal tasks (see table below)

- vs. Previous best: Collectively described as "frontier-class," closing the gap measurably on closed-source leaders

- What's notable: This analysis evaluates real hosting costs and license terms alongside benchmark scores — a decision matrix aimed at CTOs picking a 2026 production stack. The breadth of simultaneous releases makes May 2026 one of the most competitive open-weight months on record.
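The decision-matrix idea in the last bullet above can be sketched in a few lines. This is a minimal illustration, not the method used in the analysis: the `Candidate` fields, the `rank` function, the 0.7 quality weight, and the three placeholder models are all hypothetical, and the quality values stand in for scores from your own evaluations.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    quality: float        # 0-1, ideally from your own evals (placeholder values below)
    cost_per_mtok: float  # USD per million tokens, hosted or via API
    license_ok: bool      # whether the license terms fit your use case


def rank(candidates: list[Candidate], max_cost: float,
         quality_weight: float = 0.7) -> list[tuple[float, str]]:
    """Drop candidates that fail the license gate, then score the rest."""
    scored = []
    for c in candidates:
        if not c.license_ok:
            continue  # license is a hard gate, not a weighted factor
        # Cheaper is better: map cost into [0, 1], clamped at zero.
        cost_score = max(0.0, 1.0 - c.cost_per_mtok / max_cost)
        score = quality_weight * c.quality + (1 - quality_weight) * cost_score
        scored.append((round(score, 3), c.name))
    return sorted(scored, reverse=True)


# Hypothetical candidates; none of these numbers come from the report.
candidates = [
    Candidate("model-a", quality=0.80, cost_per_mtok=2.0, license_ok=True),
    Candidate("model-b", quality=0.90, cost_per_mtok=8.0, license_ok=True),
    Candidate("model-c", quality=0.95, cost_per_mtok=1.0, license_ok=False),
]
ranking = rank(candidates, max_cost=10.0)
print(ranking)
```

Treating the license as a gate rather than a weighted term reflects the point that a model you cannot legally deploy should not win on score alone; the weights themselves are a judgment call for each team.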

May 2026 LLM Production Analysis by FutureAGI

- Type: Multi-model practitioner review (closed + open)

- Key benchmarks: Covers GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, DeepSeek V4 across coding, agents, multimodal, and cost dimensions

- vs. Previous best: Notes that "the model race slowed down for a week" but pricing gaps widened and benchmark trustworthiness declined

- What's notable: The analysis argues that the layer above the model — orchestration, tooling, and evaluation — now matters more than the raw model score. This is a meaningful shift for practitioners who previously optimized primarily on MMLU/GPQA numbers.

Leaderboard Snapshot

Frontier Models (Closed-Source)

| Model | Provider | Notable Strengths | Key Score |
|---|---|---|---|
| GPT-5.5 (xhigh) | OpenAI | Highest overall intelligence, reasoning | 60 (Intelligence Index) |
| GPT-5.5 (high) | OpenAI | Near-peak intelligence, lower cost than xhigh | 59 (Intelligence Index) |
| Claude Opus 4.7 (Adaptive Reasoning, Max Effort) | Anthropic | Reasoning at max effort, close to GPT-5.5 | 57 (Intelligence Index) |
| Gemini 3.1 Pro Preview | Google | Multimodal, competitive reasoning | 57 (Intelligence Index) |
| GPT-5.4 (xhigh) | OpenAI | Prior-generation high-effort tier | 57 (Intelligence Index) |

Open-Source Leaders

| Model | Parameters | Notable Strengths | Key Score |
|---|---|---|---|
| DeepSeek V4 | Not disclosed | Coding, reasoning, low inference cost | Frontier-class (per analysis) |
| Llama 4 | Not disclosed | General-purpose, Meta ecosystem | Frontier-class (per analysis) |
| Qwen 3.5 | Multiple sizes (0.8B–large) | Speed (Qwen3.5 0.8B: 344.5 t/s), multilingual | Frontier-class (per analysis) |
| Gemma 4 | Not disclosed | Google open-weight, efficient | Frontier-class (per analysis) |
| Mistral Medium 3.5 | Not disclosed | European open-weight, strong coding | Frontier-class (per analysis) |
| Granite 3.3 8B | 8B | IBM open-weight; speed: 356.7 t/s (non-reasoning mode) | Top speed tier |

Benchmark Deep Dive

AI Agent Benchmarks: A Credibility Crisis

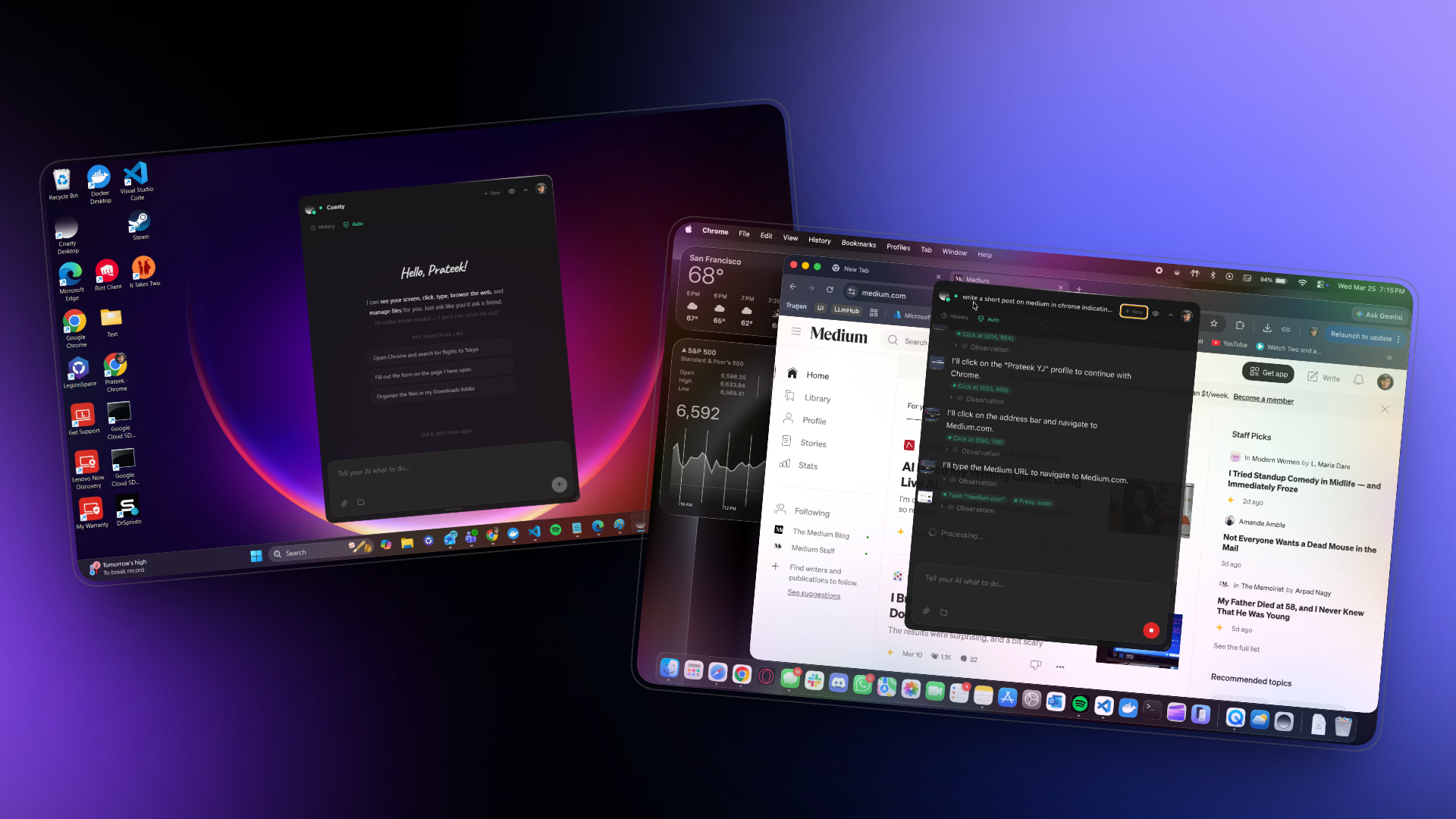

A May 7, 2026 investigation from Coasty AI asks a pointed question: are AI agent benchmark results actually reliable? The post specifically targets OSWorld scores — a widely cited benchmark for "computer use" agents — and documents how UC Berkeley researchers demonstrated that most published scores are gameable, meaning developers can optimize specifically for benchmark tasks rather than real-world usability.

The core finding is damning for practitioners who rely on published scores to choose agents for production: headline OSWorld numbers and similar computer-use metrics do not reliably predict whether an agent will succeed at actual business tasks. The gap between benchmark performance and real-world performance is widest for interface-heavy tasks — clicking through GUIs, navigating web apps — where agents can learn evaluation-specific heuristics that don't transfer.

What does this mean in practice? First, when evaluating agent tools for your stack, insist on task-specific evals using your own workflows, not vendor-supplied benchmark numbers. Second, the analysis reinforces the point made separately by FutureAGI this week: the orchestration and evaluation layer above the raw model is increasingly the decisive factor in production outcomes. Third, for labs publishing leaderboard scores, benchmark integrity is becoming a competitive moat — models that score well on independent evaluations rather than self-reported ones will earn disproportionate trust.
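The first recommendation above, task-specific evals on your own workflows, can start as a very small harness. The sketch below is a hedged illustration: `pass_rate`, `toy_agent`, and the three tasks are hypothetical stand-ins, and a real harness would call your actual agent and check outcomes in your own systems rather than inspect strings.

```python
from typing import Callable

# Each task pairs an input from your own workflow with a check on the output.
Task = tuple[str, Callable[[str], bool]]


def pass_rate(agent: Callable[[str], str], tasks: list[Task]) -> float:
    """Fraction of tasks whose output passes its check."""
    passed = 0
    for prompt, check in tasks:
        try:
            if check(agent(prompt)):
                passed += 1
        except Exception:
            pass  # a crash counts as a failure, not as a skipped task
    return passed / len(tasks)


def toy_agent(prompt: str) -> str:
    # Hypothetical stand-in for a real agent call.
    return prompt.upper()


# Hypothetical tasks; replace with cases drawn from your own workflows.
tasks: list[Task] = [
    ("invoice 1042", lambda out: "1042" in out),
    ("refund order", lambda out: out.startswith("REFUND")),
    ("close ticket", lambda out: out == "done"),
]
rate = pass_rate(toy_agent, tasks)
print(f"pass rate: {rate:.2f}")
```

Keeping the task list private and under your own control is what distinguishes this from a public benchmark: a vendor cannot tune for cases it has never seen.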

Analysis & Trends

- State of the art: GPT-5.5 leads overall intelligence rankings. For speed, Mercury 2 (735 t/s) dominates, with Granite 3.3 8B (357 t/s) and Qwen 3.5 0.8B (345 t/s) as the fastest open-weight options. Coding and reasoning remain contested between GPT-5.5, Claude Opus 4.7, and the top open-weight entrants.

- Open vs. Closed gap: The gap is narrowing materially. Five frontier-class open-weight models shipped within a single 30-day window in April–May 2026. Separate analysis from FutureAGI notes that "open-source models have caught up with GPT-4 on most tasks". The question has shifted from whether open models are competitive to which specific tasks still favor closed-source APIs.

- Cost-performance: Pricing competition remains fierce. DeepSeek V4 in particular is noted for re-igniting the AI price war; ClickRank's leaderboard tracks 356+ models spanning $0.02 to $25 per million tokens. Practitioners have meaningful cost optimization options that didn't exist 12 months ago.

- Emerging patterns: Three trends stand out this week: (1) Benchmark skepticism: the agent benchmark credibility story signals a broader industry reckoning with evaluation integrity; (2) Speed as a first-class metric: with sub-second latency now achievable at scale, throughput (tokens/second) is appearing alongside quality scores in practitioner leaderboards; (3) Open-weight proliferation: the simultaneous release of five major open-weight models suggests the open ecosystem has reached a release cadence that matches or exceeds closed labs.
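To make the cost and speed figures above concrete, the arithmetic can be worked through directly. The price range ($0.02 to $25 per million tokens) and the throughput figures (735 and 345 t/s) come from the text; the 500-million-token monthly workload and the 1,000-token response are assumptions chosen only for illustration, and the timing ignores time-to-first-token and network latency.

```python
def monthly_cost_usd(tokens_per_month: int, price_per_mtok: float) -> float:
    """Cost in USD for a monthly token volume at a per-million-token price."""
    return tokens_per_month / 1_000_000 * price_per_mtok


def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to stream a response at a steady throughput (decode time only)."""
    return output_tokens / tokens_per_second


# Assumed workload: 500M tokens per month (hypothetical).
low = monthly_cost_usd(500_000_000, 0.02)   # cheapest tracked price
high = monthly_cost_usd(500_000_000, 25.0)  # most expensive tracked price

# Assumed response length: 1,000 output tokens (hypothetical).
fast = generation_seconds(1000, 735)  # Mercury 2 throughput from the text
slow = generation_seconds(1000, 345)  # Qwen 3.5 0.8B throughput from the text

print(f"monthly cost: ${low:,.2f} to ${high:,.2f}")
print(f"1,000-token response: {fast:.2f}s vs {slow:.2f}s")
```

At this assumed volume the two ends of the price range differ by a factor of 1,250, which is why per-task model selection matters more than a single default choice.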

What to Watch Next

- Independent agent evaluations: Following the UC Berkeley findings on OSWorld gaming, watch for third-party evaluation frameworks that test computer-use agents on private, randomized task suites rather than public benchmarks. Any lab that publishes results under such conditions will gain significant credibility.

- Open-weight model maturation: Llama 4, Qwen 3.5, DeepSeek V4, Gemma 4, and Mistral Medium 3.5 are all newly shipped. Watch for fine-tune communities and enterprise adopters to publish real-world evaluations over the next 2–4 weeks. These downstream results will clarify which model actually wins for coding, agents, and domain-specific tasks.

- GDPval-AA agentic leaderboard results: Artificial Analysis's GDPval-AA framework tests models on real-world tasks across 44 occupations and 9 major industries with shell access and web browsing, making it one of the more rigorous agentic evaluations available. New entries and updated scores on this leaderboard will be a meaningful signal for enterprise deployment decisions.
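As a rough illustration of what a "private, randomized task suite" from the first item above might look like, the sketch below draws task variants from templates using a seed that the evaluator keeps secret. It is an assumption-laden toy, not the UC Berkeley methodology or any lab's framework: `sample_suite`, the templates, and the values are all hypothetical.

```python
import random


def sample_suite(templates: list[str], values: list[str],
                 n: int, seed: int) -> list[str]:
    """Draw n task prompts; the same seed always reproduces the same suite."""
    rng = random.Random(seed)  # local generator, independent of global state
    suite = []
    for _ in range(n):
        template = rng.choice(templates)
        suite.append(template.format(value=rng.choice(values)))
    return suite


# Hypothetical templates and values for a computer-use style evaluation.
templates = [
    "Rename the file {value} and confirm the change.",
    "Find {value} in the settings page.",
]
values = ["report.csv", "dark mode", "billing"]

suite_a = sample_suite(templates, values, n=5, seed=1234)
suite_b = sample_suite(templates, values, n=5, seed=1234)
print(suite_a)
```

Because the seed is private, the exact tasks are unknown to model developers ahead of time, while the evaluator can still regenerate the identical suite to compare agents fairly.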

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.