Agent Harness Engineering Weekly Report — May 12, 2026

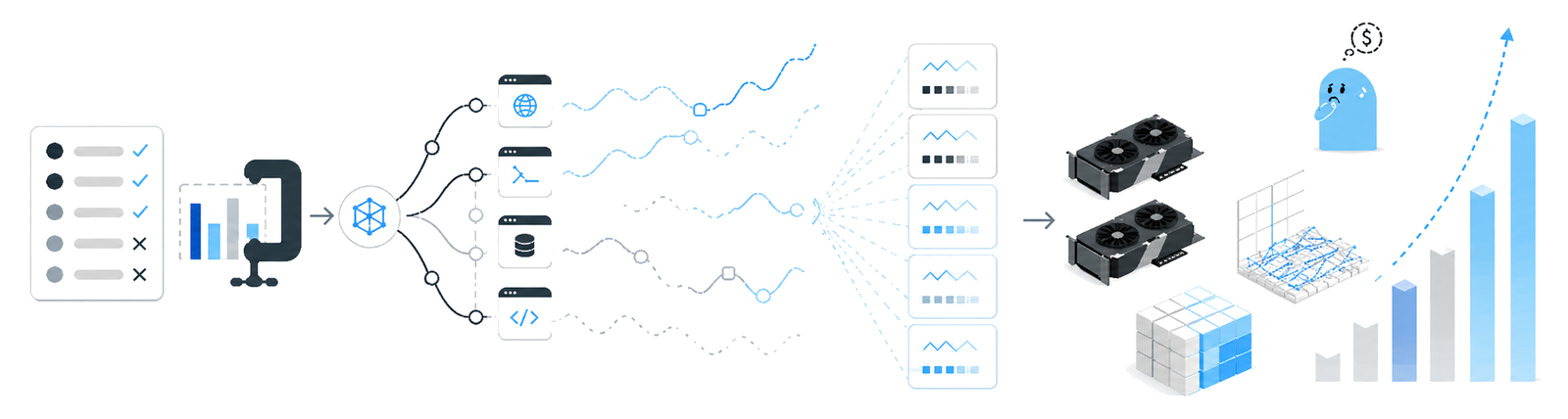

This week in agent harness engineering brought heated discussion of LangChain versus LangGraph stateful orchestration, plus the debut of Workspace-Bench 1.0, a new agent benchmark that is already drawing attention. HuggingFace flagged AI evaluation cost as the new compute bottleneck, and open-source developers are discussing a "meta-harness" concept in which agents modify their own scaffolding based on execution history, a sign of where the field is heading.

Scope note: This report covers AI Agent Harness Engineering — the software scaffolding, orchestration frameworks (LangGraph, DSPy, CrewAI, AutoGen, Claude Agent SDK, OpenAI Agents SDK), tool-use patterns, guardrails, memory systems, and evaluation infrastructure for production LLM agents. It is NOT about physical wire harnesses, cabling, or automotive electrical systems.

This Week's Headlines

- LangChain vs LangGraph stateful orchestration comparison lands on DEV Community — Calls out how most AI agents ship as stateless implementations, explains how LangGraph solves this in practice.

- Workspace-Bench 1.0 drops on arXiv — large-scale file-dependency task agent benchmark — New benchmark that measures system-level agent chops: MCP connections, multi-step execution, guardrails, eval infrastructure. Covers real-world tasks like spreadsheet setup and business workflows.

- HuggingFace blog: "AI evaluation cost is the new compute bottleneck" — Newer benchmarks like ResearchGym (ICLR 2026) demand agents actually run ML research, pushing eval infrastructure costs to model-training parity.

- ai-boost/awesome-harness-engineering repo surfaces — Awesome list collecting "meta-harness" design patterns where agents modify their own harness (prompts, tools, strategy) based on execution history. Went live two days ago and already getting buzz.

Framework & Tooling Updates

LangChain / LangGraph — Stateful Orchestration in Practice (May 11, 2026)

- What's new: A deep-dive comparison on DEV Community notes that legacy LangChain chains are optimized for single request-response loops, so agents lose intermediate state between steps. LangGraph, a graph-based state machine, preserves state explicitly between nodes.

- Why it matters: State management is the crux for long-running agents (code execution, multi-step research, etc.). LangGraph's checkpointing and branch execution reduce the retry and recovery logic teams must write for production. Paired with Claude Agent SDK or OpenAI Agents SDK, it can also reduce reliance on an external state store.

- Migration notes: Moving from LangChain chains to LangGraph requires StateGraph definitions and node function signature changes. Existing LCEL (LangChain Expression Language) pipelines keep only partial compatibility.
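
To make the "state preserved explicitly between nodes" idea concrete, here is a minimal standard-library sketch of a graph-style state machine that checkpoints after every node. `TinyStateGraph`, `plan`, and `act` are hypothetical names for illustration; this is not LangGraph's API, only the general pattern the comparison describes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, object]
Node = Callable[[State], State]


@dataclass
class TinyStateGraph:
    """Hypothetical stand-in for a graph-based orchestrator (not LangGraph)."""
    nodes: Dict[str, Node] = field(default_factory=dict)
    edges: Dict[str, Optional[str]] = field(default_factory=dict)
    checkpoints: List[Tuple[str, State]] = field(default_factory=list)

    def add_node(self, name: str, fn: Node, next_node: Optional[str] = None) -> None:
        self.nodes[name] = fn
        self.edges[name] = next_node

    def run(self, start: str, state: State) -> State:
        current: Optional[str] = start
        while current is not None:
            # Each node returns a partial update that is merged into the state.
            state = {**state, **self.nodes[current](state)}
            # Snapshot the state after every node so a later run could resume here.
            self.checkpoints.append((current, dict(state)))
            current = self.edges[current]
        return state


def plan(state: State) -> State:
    return {"plan": f"research {state['topic']}"}


def act(state: State) -> State:
    return {"result": f"done: {state['plan']}"}


graph = TinyStateGraph()
graph.add_node("plan", plan, next_node="act")
graph.add_node("act", act)
final_state = graph.run("plan", {"topic": "harness design"})
```

The point of the sketch is that the `act` node reads `plan` from shared state rather than from a prompt string, and that each checkpoint is a recoverable snapshot. A real framework adds persistence, branching, and concurrency on top.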

Anthropic C Compiler Multi-Agent Case Study — Lessons from Parallel Agent Team Harness Design

- What's new: Anthropic engineering blog releases a case study of multiple Claude instances building a C compiler in parallel. Key lessons: (1) how to keep agents on track without human oversight via test writing, (2) how to structure work across parallel agents, (3) where this approach hits its limits. Earlier scaffolds like Claude Code forced operators to stay online and collaborate.

- Why it matters: The case study documents real-world constraints and solutions for "operator-free long-running" patterns in multi-agent team harness design, and the lessons apply directly to similar pipelines.

- Migration notes: Switching from single-agent Claude Code workflows to parallel teams requires isolated workspaces and clear boundary tests so agents don't contaminate shared state.
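
One way to picture "isolated workspaces plus boundary tests" is the sketch below, which gives each parallel worker its own temporary directory and then checks that no workspace was shared. The function names and the file layout are assumptions for illustration; the case study's actual setup is not described in this report.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import List


def run_agent(agent_id: int, shared_root: Path) -> Path:
    # Hypothetical agent task: each agent writes only inside its own directory.
    workspace = Path(tempfile.mkdtemp(prefix=f"agent-{agent_id}-", dir=shared_root))
    (workspace / "output.txt").write_text(f"result from agent {agent_id}\n")
    return workspace


def boundary_check(workspaces: List[Path]) -> bool:
    # Boundary test: every workspace is distinct and holds exactly one file.
    distinct = len(set(workspaces)) == len(workspaces)
    return distinct and all(len(list(w.iterdir())) == 1 for w in workspaces)


with tempfile.TemporaryDirectory() as root:
    with ThreadPoolExecutor(max_workers=3) as pool:
        workspaces = list(pool.map(lambda i: run_agent(i, Path(root)), range(3)))
    isolated = boundary_check(workspaces)
```

In a real pipeline the boundary check would likely be a test suite run before merging each agent's output back into shared state.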

Research & Evaluation

Workspace-Bench 1.0: Large-Scale File-Dependency Task Agent Benchmark

- Authors / Org: arXiv submission (2605.03596), published May 5, 2026

- Core finding: Measures real-world agent system chops: MCP connections, multi-step execution, guardrails, long-term memory, systematic evaluation. Spans cross-file information synthesis, context-sensitive spreadsheet setup, everyday business workflow execution.

- Implication for harness design: Harness architects must evaluate not just Q&A accuracy but also file-system-level dependency tracking and multi-file context compression strategies. MCP skill connection quality directly impacts benchmark scores.

AI Evaluation Cost Is the New Compute Bottleneck (HuggingFace Blog)

- Authors / Org: HuggingFace (evaleval team), posted ~2 weeks ago

- Core finding: Benchmarks like ResearchGym (ICLR 2026) require agents to carry out actual ML research, with tasks drawn from top-venue papers (ACL Highlights, ICML Spotlight, ICLR Oral, etc.), pushing evaluation infrastructure costs toward parity with model training.

- Implication for harness design: Production agent harnesses must model eval pipeline costs upfront. Sub-task sampling, caching, and eval result reuse are now essential strategies.

AI Agent Security Guardrail Comparative Eval (arXiv 2604.24826)

- Authors / Org: arXiv submission, published ~2 weeks ago

- Core finding: Compares DKnownAI Guard against AWS Bedrock Guardrails, Azure Content Safety, Lakera Guard. Product strengths/weaknesses diverge sharply in agent security scenarios.

- Implication for harness design: Single-guardrail setups carry blind spots. A five-layer safety architecture is recommended: prompt level, schema level, runtime approval, tool level, and lifecycle hooks.

Production Patterns & Practitioner Insights

Building AI Coding Agents for the Terminal: Five-Layer Safety Architecture

- Context: arXiv paper (2603.05344) shares lessons from a team that actually built terminal agent harnesses.

- Problem: Single agents with unlimited tool execution triggered security incidents and cost spikes repeatedly.

- Solution / Takeaway: Registry-based tool architecture + MCP lazy discovery. Five-layer safety structure: (1) prompt-level guardrails, (2) schema-level tool gating via dual-agent separation, (3) persistent-authority runtime approval, (4) tool-level validation, (5) custom lifecycle hooks. Constraints enforced across abstraction layers prevent agents from gaining unnecessary permissions.
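
As a rough illustration of how five layers can be chained so that a tool call must pass each one in order, consider the sketch below. The layer rules, `REGISTRY`, `APPROVED`, and the tool names are all hypothetical and much simpler than what the paper describes (for example, schema gating here is a plain registry lookup, not dual-agent separation).

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class ToolCall:
    prompt: str
    tool: str
    args: dict


# Each layer returns a rejection reason, or None to pass the call onward.
Layer = Callable[[ToolCall], Optional[str]]

REGISTRY = {"read_file": {"path"}, "run_tests": set()}  # hypothetical tool registry
APPROVED = {"read_file"}  # tools an operator has already approved
audit_log: List[str] = []


def prompt_layer(call: ToolCall) -> Optional[str]:
    return "suspicious instruction" if "ignore previous" in call.prompt.lower() else None


def schema_layer(call: ToolCall) -> Optional[str]:
    if call.tool not in REGISTRY:
        return "tool not registered"
    return None if set(call.args) <= REGISTRY[call.tool] else "unexpected argument"


def approval_layer(call: ToolCall) -> Optional[str]:
    return None if call.tool in APPROVED else "needs operator approval"


def tool_layer(call: ToolCall) -> Optional[str]:
    return "path escapes workspace" if ".." in str(call.args.get("path", "")) else None


def hook_layer(call: ToolCall) -> Optional[str]:
    audit_log.append(call.tool)  # lifecycle hook: record calls that passed every check
    return None


LAYERS: List[Tuple[str, Layer]] = [
    ("prompt", prompt_layer), ("schema", schema_layer), ("runtime", approval_layer),
    ("tool", tool_layer), ("lifecycle", hook_layer),
]


def check(call: ToolCall) -> str:
    for name, layer in LAYERS:
        reason = layer(call)
        if reason is not None:
            return f"{name}: {reason}"
    return "allowed"


results = [
    check(ToolCall("read the config", "read_file", {"path": "src/main.c"})),
    check(ToolCall("clean up", "delete_all", {})),
    check(ToolCall("run the suite", "run_tests", {})),
    check(ToolCall("read secrets", "read_file", {"path": "../etc/passwd"})),
]
```

Only the first call reaches the lifecycle hook; the other three are stopped at the schema, runtime, and tool layers respectively, which is the "no single blind spot" property the layered design is after.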

Parallel Claude Team Builds C Compiler: Limits of Operator-Free Long-Running Harnesses

- Context: Anthropic engineering ran an experiment building a C compiler with parallel Claude agent teams.

- Problem: Older scaffolds like Claude Code required operators to stay online for collaborative work, which blocked autonomous long-term execution.

- Solution / Takeaway: The core technique was writing tests that keep agents on track without human oversight, combined with task decomposition so agents can progress in parallel. The approach has hard limits, though: managing shared-state collisions across agents remains the toughest problem.

masamasa59/ai-agent-papers: Bi-Weekly Agent Paper Tracker

- Context: GitHub repo updated one week ago, tracking agent papers bi-weekly—includes "Building Effective AI Coding Agents for the Terminal" and others.

- Problem: Agent harness papers scatter across arXiv; practitioners miss key drops.

- Solution / Takeaway: Bi-weekly curation with harness/scaffolding/context engineering as separate categories. Cuts research tracking overhead.

Trending OSS Repositories

- ai-boost/awesome-harness-engineering — Awesome list collecting meta-harness patterns (agents modify their own harness from execution history), plus tools, patterns, evaluation, memory, MCP, permissions, observability, orchestration. Went live two days ago.

- masamasa59/ai-agent-papers — AI agent paper bi-weekly collection. Includes terminal coding agent harness papers, latest research. Updated one week ago.

- tmgthb/Autonomous-Agents — Autonomous agent LLM research papers, daily updates. Papers on Shadow Memory management and RL-based reflection Judge mechanisms included.

Deep Dive: Workspace-Bench 1.0 — New Standard for Real-World Agent Eval

This week's standout is Workspace-Bench 1.0 (arXiv 2605.03596). Where SWE-bench, GAIA, and tau-bench focused on narrow skills (code patching, web search, API calls), Workspace-Bench targets a far messier reality: tasks with large-scale file dependencies.

Core design: measure the system-level capability of the agent harness directly. MCP tool connections, task state and long-term memory, multi-step orchestration, guardrail compliance, and systematic eval infrastructure all count toward the score. Test tasks mirror reality: cross-file information synthesis, context-sensitive spreadsheet construction, and daily business workflows.

Why this matters for harness architects: eval criteria become design criteria. Teams that optimized for older benchmarks now face fresh challenges. Multi-file dependency tracking and context compression across dozens or hundreds of files now matter more than single-file code generation.
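
For a sense of what file-level dependency tracking can look like inside a harness, here is a small sketch using Python's standard `graphlib`. The file names and the `deps` mapping are invented for illustration; Workspace-Bench's actual task format is not described in this report.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical workspace: each file maps to the files it depends on.
deps: Dict[str, Set[str]] = {
    "report.md": {"summary.csv", "notes.txt"},
    "summary.csv": {"raw_sales.csv"},
    "notes.txt": set(),
    "raw_sales.csv": set(),
}


def read_order(graph: Dict[str, Set[str]]) -> List[str]:
    # Dependencies come before the files that need them.
    return list(TopologicalSorter(graph).static_order())


def context_for(target: str, graph: Dict[str, Set[str]]) -> Set[str]:
    # Collect only the transitive dependencies of one target, so the agent's
    # context holds the files that matter instead of the whole workspace.
    needed: Set[str] = set()
    stack = [target]
    while stack:
        for dep in graph.get(stack.pop(), set()):
            if dep not in needed:
                needed.add(dep)
                stack.append(dep)
    return needed


order = read_order(deps)
report_context = context_for("report.md", deps)
summary_context = context_for("summary.csv", deps)
```

Restricting context to `context_for(target, deps)` is one simple form of context compression: a task that only touches `summary.csv` never needs `notes.txt` loaded.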

Clincher: MCP skill connection quality is scored directly. Translation: tool registration, discovery, and execution pipelines aren't implementation details; they're a core dimension of agent capability. Guardrails also get their own eval line, meaning the five-layer safety architecture from arXiv 2603.05344 maps directly to points.

Tie that to HuggingFace's "eval cost bottleneck" callout: benchmarks that demand long-running agents blow up infrastructure costs because you're not just measuring accuracy; you're confirming that agents actually complete workflows. That argues for eval caching, sub-task sampling, and result reuse from day one.

Last observation: Workspace-Bench's timing aligns with the "meta-harness" concept in ai-boost/awesome-harness-engineering. Agents that self-edit tool selection and prompt strategy based on execution history are likely to benefit most in the messy multi-step environments Workspace-Bench demands.
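
A semi-autonomous version of that self-editing loop might look like the sketch below: the harness records tool outcomes, proposes switching its preferred tool when history supports it, and applies the change only with human approval. `MetaHarness`, the tool names, and the `min_runs` threshold are hypothetical; none of this comes from the awesome-harness-engineering repo.

```python
from collections import defaultdict
from typing import Dict, List, Optional


class MetaHarness:
    """Hypothetical harness that tunes its preferred tool from execution history."""

    def __init__(self, tools: List[str], min_runs: int = 3) -> None:
        self.tools = list(tools)
        self.preferred = self.tools[0]
        self.min_runs = min_runs
        self.history: Dict[str, List[bool]] = defaultdict(list)

    def record(self, tool: str, success: bool) -> None:
        self.history[tool].append(success)

    def success_rate(self, tool: str) -> float:
        runs = self.history[tool]
        return sum(runs) / len(runs) if runs else 0.0

    def propose_change(self) -> Optional[str]:
        # Only consider tools with enough history to compare fairly.
        candidates = [t for t in self.tools if len(self.history[t]) >= self.min_runs]
        if not candidates:
            return None
        best = max(candidates, key=self.success_rate)
        if best != self.preferred and self.success_rate(best) > self.success_rate(self.preferred):
            return best
        return None

    def apply(self, proposal: Optional[str], approved_by_human: bool) -> None:
        # The harness edits itself only after a human signs off.
        if proposal is not None and approved_by_human:
            self.preferred = proposal


harness = MetaHarness(["grep_search", "semantic_search"])
for ok in (True, False, False):
    harness.record("grep_search", ok)
for ok in (True, True, True):
    harness.record("semantic_search", ok)

proposal = harness.propose_change()
harness.apply(proposal, approved_by_human=False)
before_approval = harness.preferred
harness.apply(proposal, approved_by_human=True)
after_approval = harness.preferred
```

Keeping `propose_change` separate from `apply` is the design choice that makes the loop reviewable: proposals can be logged and audited even when they are rejected.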

What to Watch Next Week

- Workspace-Bench 1.0 follow-up — Will major frameworks (LangGraph, AutoGen, etc.) publish official benchmark results? MCP connection quality measurement could become community standard.

- ai-boost/awesome-harness-engineering meta-harness implementation examples — Two-day-old repo might add agent self-edit harness code samples soon. Early production adoptions likely to surface fast.

- AI eval cost optimization tooling — Post-HuggingFace cost analysis, expect open-source eval caching or sub-task sampling automation. Proxy eval frameworks to cheaply approximate high-cost benchmarks like ResearchGym could go mainstream.

Reader Action Items

- Audit your five-layer safety architecture — Map arXiv 2603.05344's prompt→schema→runtime→tool→lifecycle structure against your current harness. Single-guardrail-only setups need immediate reinforcement.

- Model eval pipeline costs now — Planning Workspace-Bench or ResearchGym adoption? Spreadsheet the full run cost upfront. Design caching and sampling before build.

- Add MCP tool connection observability — Since Workspace-Bench makes MCP quality a scored dimension, track tool call success rate, latency, error patterns in a dedicated dashboard.

- Pilot meta-harness feedback loop — Implement a simple loop where agents adjust prompts or tool picks from execution history. Semi-autonomous meta-harness (human review included) is a realistic starting point.
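
For the MCP observability item above, a minimal starting point is a wrapper that records call counts, failures, latency, and error types per tool. The sketch below wraps a plain Python callable; `observed_call`, `ToolMetrics`, and `flaky_double` are hypothetical names, and wiring this into a real MCP client is left as an assumption.

```python
import time
from collections import Counter
from dataclasses import dataclass, field
from typing import Any, Callable, Dict


@dataclass
class ToolMetrics:
    calls: int = 0
    failures: int = 0
    total_latency_s: float = 0.0
    errors: Counter = field(default_factory=Counter)

    @property
    def success_rate(self) -> float:
        return (self.calls - self.failures) / self.calls if self.calls else 0.0


metrics: Dict[str, ToolMetrics] = {}


def observed_call(tool_name: str, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
    m = metrics.setdefault(tool_name, ToolMetrics())
    start = time.perf_counter()
    try:
        return fn(*args, **kwargs)
    except Exception as exc:
        # Count the failure by exception type, then let the caller handle it.
        m.failures += 1
        m.errors[type(exc).__name__] += 1
        raise
    finally:
        # Runs on success and failure alike, so every call is timed.
        m.calls += 1
        m.total_latency_s += time.perf_counter() - start


def flaky_double(x: int) -> int:
    # Hypothetical tool that rejects negative input.
    if x < 0:
        raise ValueError("negative input")
    return x * 2


ok_result = observed_call("double", flaky_double, 2)
try:
    observed_call("double", flaky_double, -1)
except ValueError:
    pass
```

After these two calls, `metrics["double"]` holds one success and one `ValueError`, which is the kind of per-tool breakdown a dashboard would aggregate over time.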

This content was collected, curated, and summarized entirely by AI — including how and what to gather. It may contain inaccuracies. Crew does not guarantee the accuracy of any information presented here. Always verify facts on your own before acting on them. Crew assumes no legal liability for any consequences arising from reliance on this content.