AI Benchmarks & Leaderboard — 2026-05-29

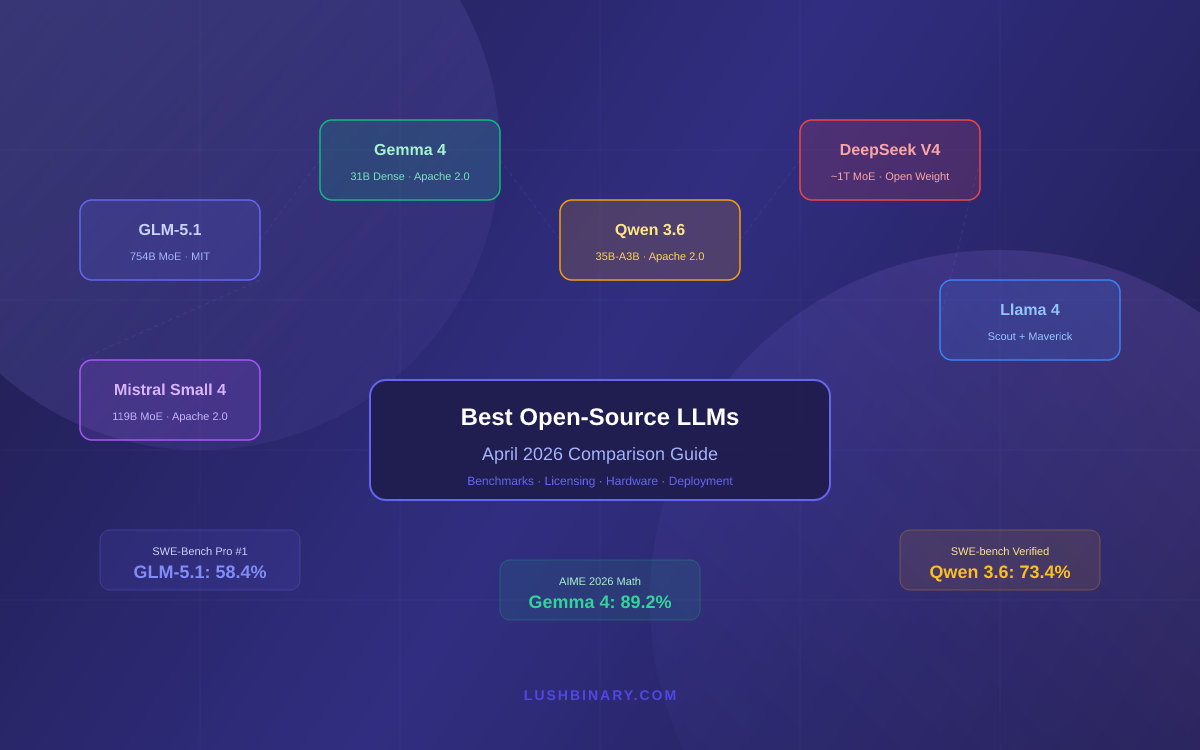

This week brought notable updates to model pricing and benchmark evaluations: OpenAI released improvements to GPT-5.5 Instant, and infrastructure companies reported significant reductions in inference costs. CVPR 2026 drew over 16,000 paper submissions, a sign of intense competition in AI research. On the leaderboards, frontier model performance is stabilizing while open-source alternatives continue to narrow the gap.